If you've been following Signal articles for some time, you've probably come across one or more mentions of Giorgio Sancristoforo, a software developer and an artist with a massive creative output. During the last year alone, Giorgio released four amazing software instruments, ranging from a psychedelic tape studio to a solar-system-driven synthesizer and a virtual MIDI controller. Now he is back with an ambitious, one-of-a-kind synthesizer called Radiotone, a perfect fit for the first article in our new series, "Electric + Eclectic".

Although Radiotone isn't available for purchase, we think it's an interesting place to turn for inspiration. We decided to talk with Sancristoforo to understand how it works, and to pick his brain about sonification: the process of converting data into sound. While his method is but one of many ways to approach sonification for musical purposes, his thoughts are an excellent springboard for diving into your own sonic experiments.

So What is a Radiotone?

Radiotone is a manifestation of Sancristoforo's long-standing interest in radiation, and its creation is directly influenced by the discoveries of British chemist William Crookes and German physicist Wilhelm Röntgen, specifically in its implementation of vacuum tubes and X-Rays.

In essence, Radiotone is made up of four elements: high voltage generation, X-Ray generation, X-Ray detection, and finally sound synthesis. Each part is realized through a very specific process thoroughly described on the artist's website, and together they merge to form a novel and expressive musical apparatus.

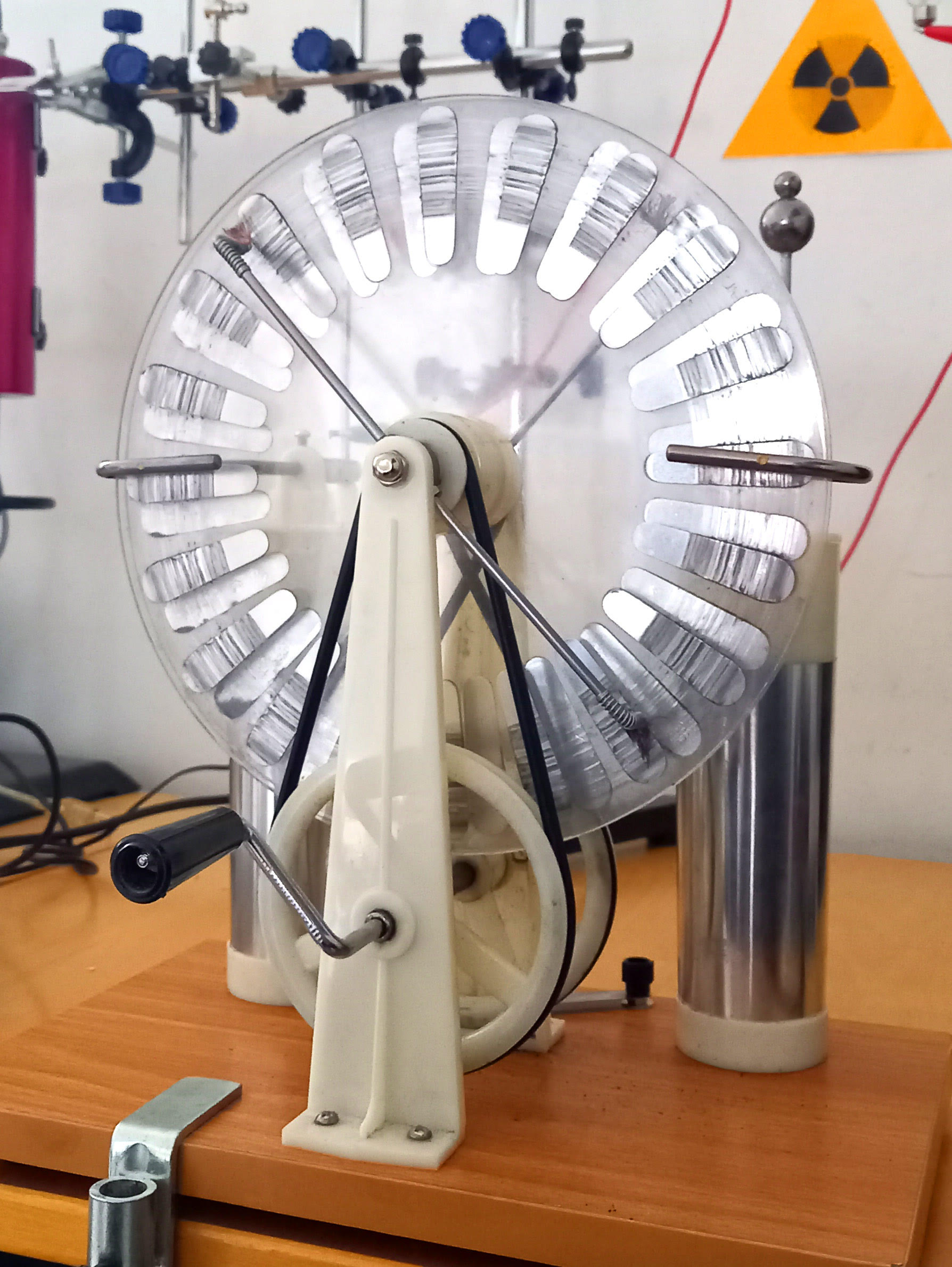

The high voltage for Radiotone is generated by a Wimshurst machine, an electrostatic generator developed between 1880 and 1883 by British inventor James Wimshurst. However, instead of producing an electrical spark, the usual outcome of Wimshurst machine experiments, Giorgio connected the output terminals to a Crookes tube: an experimental, partially evacuated discharge tube more than a century old, invented, as you might have guessed, by William Crookes. Inside the tube, the electric field of the aluminium atoms decelerates the charged particles (electrons), and this sudden braking generates X-Rays.

Crookes' Tube

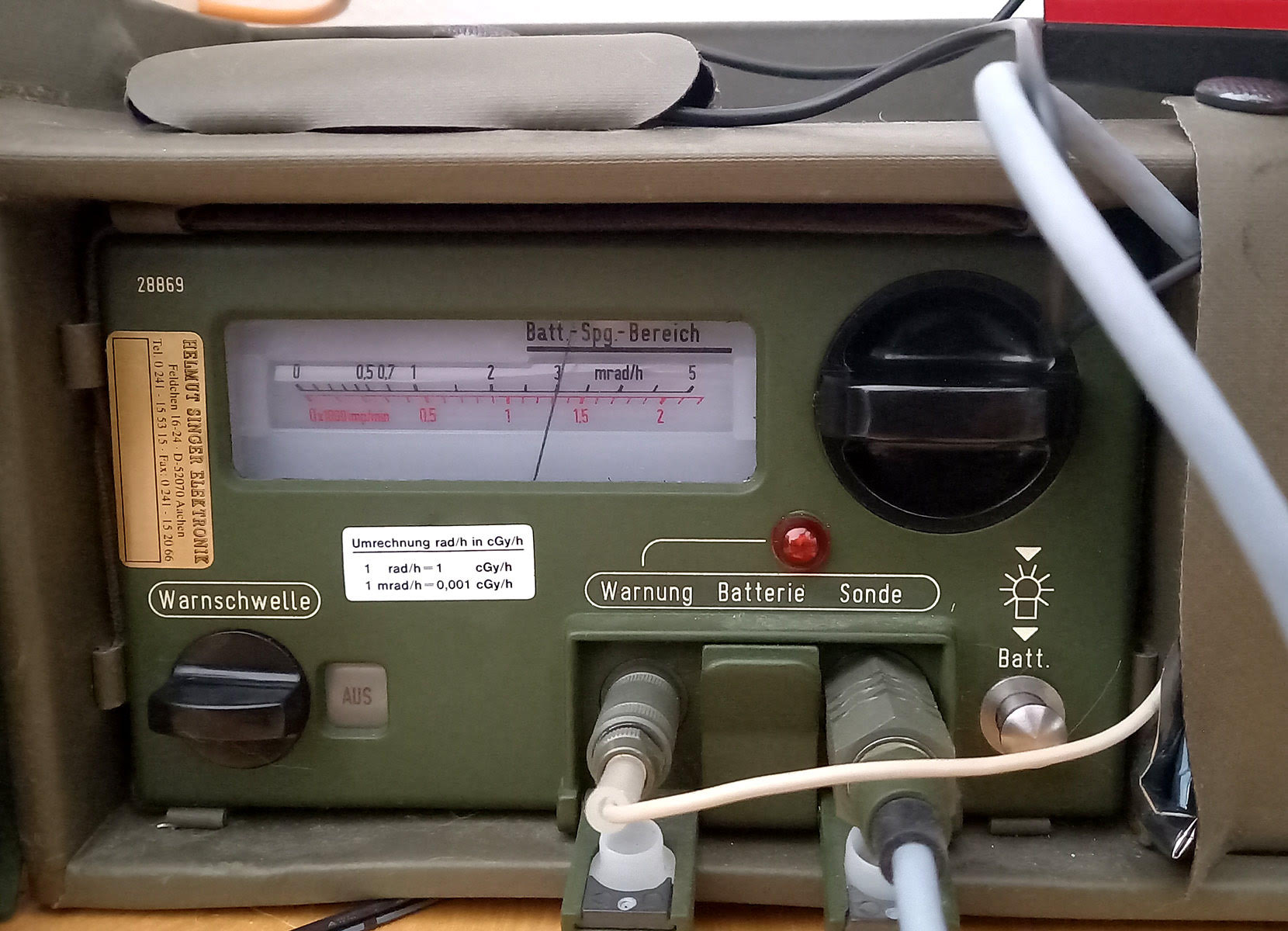

The X-Rays are detected using a high-precision, military-grade Geiger counter, the Frieseke Hoepfner SV500. The clicks it produces are picked up by a coil microphone and passed to Bela, a powerful embedded computing platform, to be used for sonification. The synthesis component is a polyphonic FM synthesizer programmed in Pure Data and driven by the artist's DNA; it is a variation on the algorithm Giorgio used in his Thannhäuser Gate installation. The Geiger counter's clicks perform two functions in the synthesis engine: triggering the sound and mutating it over time. Finally, the sound is passed through the excellent Microcosm pedal from Hologram Electronics for general sweetening.
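We haven't seen Sancristoforo's Pure Data patch in full, but the two jobs the clicks do can be illustrated in a few lines. The Python sketch below assumes a simple two-operator FM voice and uses a clamped random walk as the "mutation"; the function names, starting values, and mutation rule are our own hypothetical choices, not the artist's DNA-driven algorithm.

```python
import math
import random

SR = 44_100  # sample rate in Hz (assumed)

def fm_note(freq, ratio, index, dur, sr=SR):
    """One two-operator FM note: a sine carrier whose phase is modulated by a
    sine at freq * ratio, shaped by an exponential decay envelope."""
    out = []
    for i in range(int(dur * sr)):
        t = i / sr
        env = math.exp(-5.0 * t / dur)
        mod = index * math.sin(2 * math.pi * freq * ratio * t)
        out.append(env * math.sin(2 * math.pi * freq * t + mod))
    return out

def render_clicks(click_times, total_dur, note_dur=0.5, seed=0, sr=SR):
    """Each click (a) triggers a new note and (b) mutates the voice parameters,
    so the timbre drifts as radiation events accumulate. Overlapping notes are
    summed into one buffer, which makes the renderer polyphonic."""
    rng = random.Random(seed)
    params = {"freq": 220.0, "ratio": 2.0, "index": 1.0}  # hypothetical start values
    buf = [0.0] * int(total_dur * sr)
    for t0 in sorted(click_times):
        # (a) trigger: render a note with the current parameters and mix it in
        note = fm_note(params["freq"], params["ratio"], params["index"], note_dur, sr)
        start = int(t0 * sr)
        for j, s in enumerate(note[: max(0, len(buf) - start)]):
            buf[start + j] += 0.25 * s
        # (b) mutation: a small clamped random walk on ratio and modulation index
        params["ratio"] = min(max(params["ratio"] + rng.uniform(-0.1, 0.1), 0.5), 8.0)
        params["index"] = min(max(params["index"] + rng.uniform(-0.2, 0.2), 0.0), 10.0)
    return buf, params
```

In practice the click times would come from the detector listening to the coil microphone; here they are simply passed in as a list of seconds.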

Of course, the description above is very general, and for more detailed information we strongly recommend checking out Sancristoforo's website. But this project raises some important questions about using data as a source for music—and we decided to ask him some questions ourselves.

The Art of Sonification

For those unfamiliar, sonification is the aural sibling of the much more widespread practice of data visualization. It frequently takes the form of an artistic practice that represents large, even inconceivable amounts of data as sound and/or music, with the aim of helping us wrap our minds around patterns and structures in the data that would otherwise go unnoticed. As it happens, much of Giorgio Sancristoforo's work revolves around this field in one way or another (for example, the Data Player plugin in Gleetchlab X is a fantastic way to create sound from any file on your computer).
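One common way to make sound from an arbitrary file, often called databending, is to read its raw bytes as audio samples. We don't know exactly how Gleetchlab X's Data Player works, so treat this Python sketch as an illustration of the general idea only; `sonify_bytes` and `file_to_wav` are hypothetical helpers built on the standard `wave` module.

```python
import io
import wave

def sonify_bytes(data: bytes, sample_rate: int = 22_050) -> bytes:
    """Return a WAV file (as bytes) in which each input byte is one 8-bit sample."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as w:
        w.setnchannels(1)          # mono
        w.setsampwidth(1)          # 8-bit: WAV stores these as unsigned 0..255
        w.setframerate(sample_rate)
        w.writeframes(data)        # the raw file bytes become the waveform
    return buf.getvalue()

def file_to_wav(src_path: str, dst_path: str, sample_rate: int = 22_050) -> float:
    """Sonify any file on disk; returns the resulting duration in seconds."""
    with open(src_path, "rb") as f:
        data = f.read()
    with open(dst_path, "wb") as out:
        out.write(sonify_bytes(data, sample_rate))
    return len(data) / sample_rate
```

For example, `file_to_wav("photo.jpg", "photo.wav")` yields one second of sound per 22,050 bytes of input. Compressed formats such as JPEG tend to sound close to noise, since their bytes are nearly random.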

While we could speculate on the subject here ourselves, it seems more appropriate to hear some thoughts directly from the artist, who has been exploring the topic extensively for several years.

Eldar Tagi: Why do you think sonification is an important way to interact with data and/or environment?

Giorgio Sancristoforo: I think this is the most important question I've ever answered in an interview. The question is really important because it offers the possibility to understand the more intimate nature of my work.

I consider myself a person able to shape the sound in any way I want. I have really spent many years trying to get total control over the sound through all available means and in particular sound synthesis and programming languages (but also as a performing musician of course). Having reached this awareness, I began to apply sound to physical phenomena in real time. And the reason is really very simple: I want to listen to the world.

I'm not talking about soundscapes in the classical sense, but in a Pythagorean meaning. Like all musicians I have spent decades developing a sensitivity to sounds, consequently sound for me is a cognitive phenomenon in a very broad sense.

The sonification of the data allows me to use the practice of listening as a way to know phenomena in and around us, which are foreign to the world of music and acoustics. Sound is my instrument of knowledge of the world.

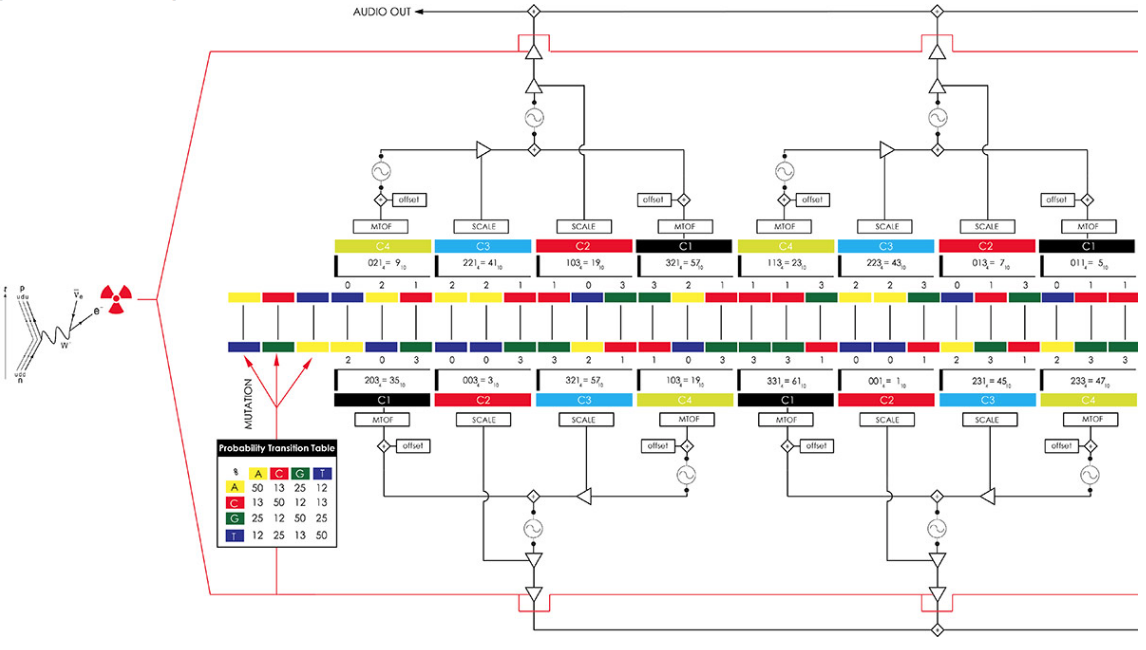

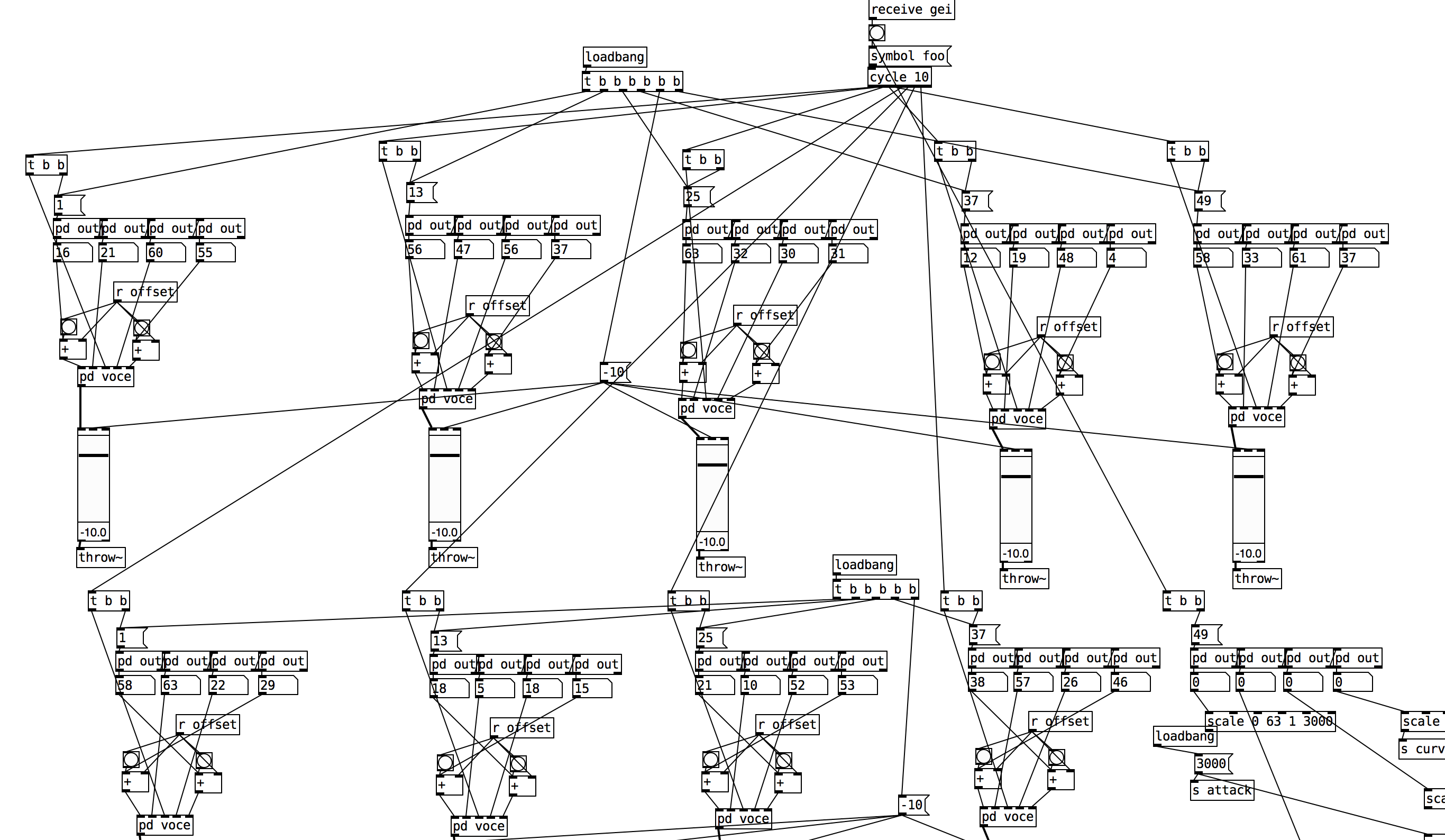

An excerpt of the PD synthesis patch for Radiotone

ET: How do you make musical decisions when sonifying things like radiation or the genome? Does your emotional response to what’s being sonified play an important role in those decisions?

GS: Emotions emerge at a later stage, when I become a listener and a spectator, when everything is over and I can observe what happens. When the work is complete and I can devote myself to contemplation, then I feel emotions. The musical decisions I make during creation cannot be emotionally driven in any way because otherwise I would be deceiving myself.

You may have noticed that I always use very simple sounds. Sinusoids and noises. In fact, I don't use anything else. These are pure, mathematical tools. If I have to translate the pattern of energies emitted by a radioactive decay (gamma rays), I build a precise acoustic analogy from very simple waves (pure tones) at different frequencies. This is not only scientifically valid, but it allows the listener to truly perceive the physical phenomenon I want to present, without being misled by aesthetic choices that would lose the sense of coherence I seek between the physical phenomenon and its translation into sound.
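As a sketch of this kind of analogy, suppose each peak in a gamma spectrum becomes one sine tone whose frequency is proportional to the peak's energy, so that the ratios between tones mirror the ratios between energies. The scaling constant and helper names below are our assumptions, not Sancristoforo's; the energies in the usage line are the 511 keV positron annihilation line and the 1274.5 keV gamma emitted by Na-22.

```python
import math

HZ_PER_KEV = 0.5  # assumed scaling: 1 keV maps to 0.5 Hz

def peaks_to_partials(peaks_kev, weights=None, hz_per_kev=HZ_PER_KEV):
    """Map gamma-ray peak energies (keV) to (frequency in Hz, amplitude) pairs.
    The proportional mapping keeps frequency ratios equal to energy ratios;
    amplitudes are normalized so they sum to 1."""
    if weights is None:
        weights = [1.0] * len(peaks_kev)
    total = sum(weights)
    return [(e * hz_per_kev, w / total) for e, w in zip(peaks_kev, weights)]

def render(partials, dur=1.0, sr=44_100):
    """Sum the partials as pure sine tones, with no other timbral processing."""
    n = int(dur * sr)
    return [sum(a * math.sin(2 * math.pi * f * i / sr) for f, a in partials)
            for i in range(n)]
```

`peaks_to_partials([511.0, 1274.5])` returns `[(255.5, 0.5), (637.25, 0.5)]`. The optional `weights` could carry measured peak heights, so that stronger emission lines sound louder.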

Some artists transform sounds derived from a scientific experiment and add layers and layers of new sounds. These are musical and aesthetic choices that have nothing to do with science. It's a respectable method of course, but it's not my way of working.

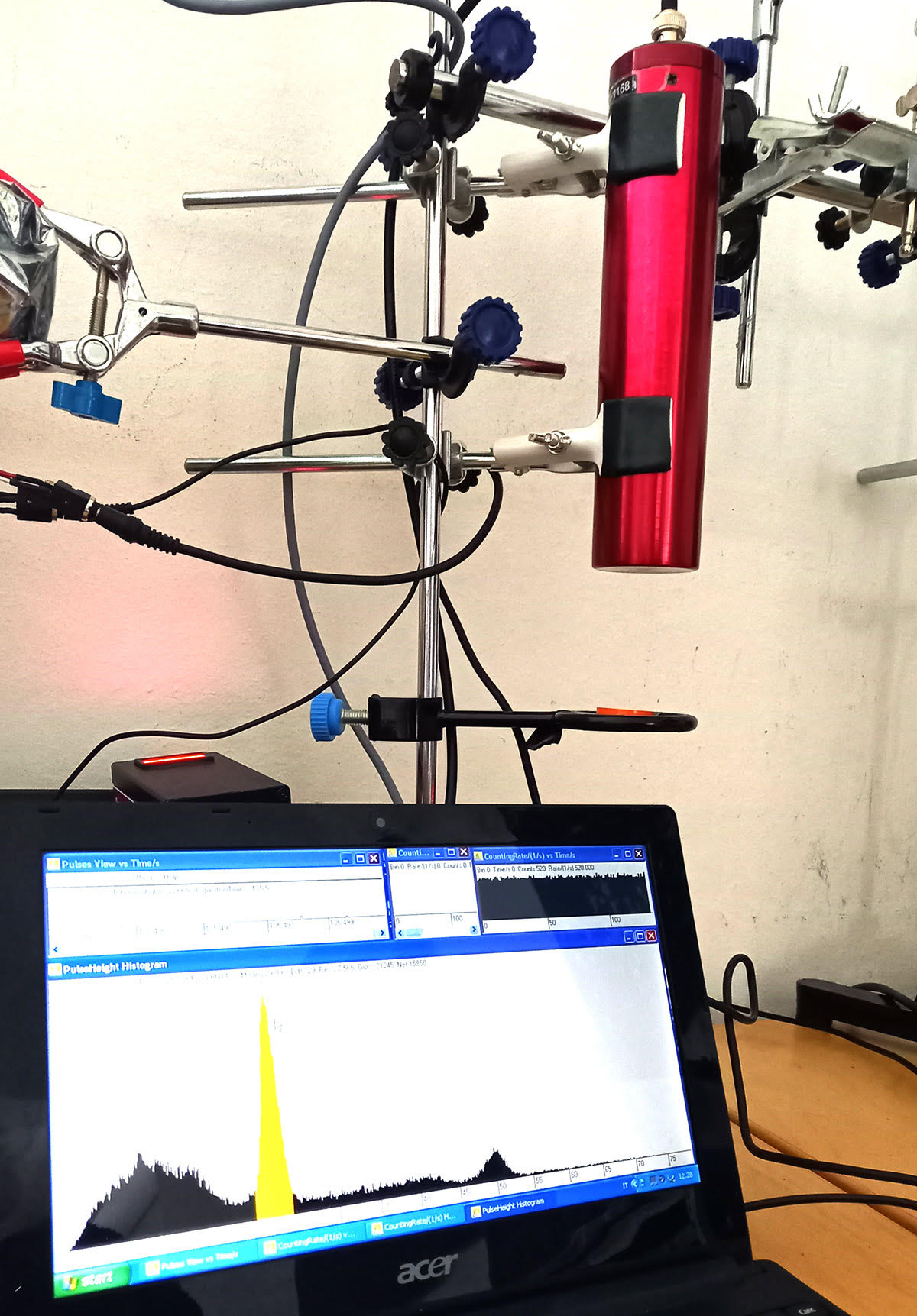

The latest addition to the Radiotone is a gamma spectroscope with a sodium scintillator (the red tube). The highlighted peak shows the annihilation of positrons (antimatter) emitted by a Na-22 radioactive source.

Let's talk about the genome, for example. DNA is a code, very similar to computer code. Instead of 1 and 0 we have 4 nucleotides, so it is not a binary but a quaternary code. Groups of three nucleotides (called codons) give 64 combinations, which encode the 20 amino acids of the genetic code (we share this code with all living things on earth). Long sequences of amino acids form proteins, which we can imagine as the software product of cells (imagine that DNA is the operating system of a human being). To transform all this into sound, we need to create a system that reads this quaternary code and organizes the sound into groups of amino acids that form a sort of sound protein. In other words, amino acids become data for the synthesis of sound. A sound is therefore composed of several blocks of amino acids that have specific functions: some program the frequency, others the amplitude, others the intensity of the modulation. Obviously the sounds I use are always sinusoids. They must be simple numbers, simple sounds. These numbers are not fantasies, they are real data. My dogma is never to distort the data.
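The reading process described here can be sketched in Python. The codon table is the standard genetic code; everything after translation (blocks of three amino acids setting frequency, amplitude, and modulation index, plus the ranges used) is a hypothetical mapping of ours, meant only to show the shape of such a system, not Sancristoforo's actual algorithm.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code (NCBI translation table 1); codons ordered TTT, TTC, TTA, TTG, TCT, ...
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO)}
AA_ORDER = "ACDEFGHIKLMNPQRSTVWY"  # the 20 amino acids, one-letter codes

def translate(dna: str) -> str:
    """Read the quaternary code one codon (three letters) at a time until a stop codon."""
    dna = dna.upper()
    protein = []
    for i in range(0, len(dna) - 2, 3):
        aa = CODON_TABLE.get(dna[i:i + 3])
        if aa is None:       # skip codons containing N or other non-ACGT symbols
            continue
        if aa == "*":        # a stop codon ends the protein
            break
        protein.append(aa)
    return "".join(protein)

def to_voices(protein: str, block: int = 3):
    """Hypothetical 'sound protein': each block of three amino acids sets the
    (frequency in Hz, amplitude, FM modulation index) of one sine voice.
    A trailing incomplete block is ignored."""
    voices = []
    for i in range(0, len(protein) - block + 1, block):
        v = [AA_ORDER.index(aa) / (len(AA_ORDER) - 1) for aa in protein[i:i + block]]
        freq = 110.0 * 2 ** (4 * v[0])   # four octaves, 110 Hz to 1760 Hz
        amp = 0.1 + 0.9 * v[1]
        mod_index = 5.0 * v[2]
        voices.append((freq, amp, mod_index))
    return voices
```

A gene would be rendered as `to_voices(translate(sequence))`. Because every number comes straight from the sequence, the same input always yields the same sound.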

Another good reason to use pure tones is that in the case of DNA I am interested in mutations. As I have often said, the concept of mutation and transformation is the very essence of life, which is why I am so interested in it. Well, if I want the listener to perceive the mutations of the code, I cannot overwhelm him with beautiful sounds as if I were writing a song. I have to give the listener the ability to hear very subtle transformations, and pure tones are ideal for this because they create timbres that evolve.

If I generated complex timbres from the beginning, the listener would find himself/herself in a sonic magma with no way of hearing what is really happening. Of course DNA does not have a sound, but what is always essential is to create a consistent system. If I apply my algorithm to your DNA, to the same gene that I am analyzing, we would likely hear some differences caused by natural genetic variation, and consequently a different sound.
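Because a consistent mapping is deterministic, two versions of the same gene can only sound different where their bases differ. Assuming the two sequences are already aligned and that a rendering plays one codon at a fixed pace (our assumption), this small Python helper finds those positions and estimates when in the piece they would be heard; the function names and pacing are hypothetical.

```python
def variant_positions(ref: str, alt: str):
    """Return 0-based positions where two aligned, equal-length DNA sequences differ."""
    if len(ref) != len(alt):
        raise ValueError("sequences must be aligned to the same length")
    return [i for i, (a, b) in enumerate(zip(ref.upper(), alt.upper())) if a != b]

def where_to_listen(ref: str, alt: str, seconds_per_codon: float = 0.25):
    """Map each differing base to (codon index, time in seconds) in a rendering
    that plays one codon every `seconds_per_codon` seconds (an assumed pacing)."""
    return [(i // 3, (i // 3) * seconds_per_codon) for i in variant_positions(ref, alt)]
```

For instance, `where_to_listen("ATGGAGTAA", "ATGGTGTAA")` returns `[(1, 0.25)]`: the single changed base falls in the second codon, a quarter of a second into the piece.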

The SV500 Geiger Counter

ET: What are your ideas on listening to sonified material? Should the approach be any different than simply listening to sounds?

GS: As I mentioned at the beginning, my purpose is to listen to the world, be it the universe or our biology, the microcosm and the macrocosm. But we are not speaking of mere "sounds" but of sounds organized and therefore subjected to a compositional form. Recently a nuclear engineer from the University of New Mexico kindly sent me the sound of a neutron detector during the startup of their nuclear reactor. At first we only perceive a few clicks, then they become a mass of white noise as the reactor starts the fission chain reactions. The reason I haven't used this material yet is because I'm spending time figuring out how I can use this beautiful sound in an artistic way. I don't want to just throw it on a DAW and put effects on it, I want to turn those clicks into triggers for an instrument that speaks to us in a musical way. I'm not recycling a sound (or data), I'm trying to understand it.
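Turning recorded clicks into triggers usually starts with a simple detector. This Python sketch, assuming audio samples normalized to the range -1 to 1, fires a trigger whenever the signal's magnitude crosses a threshold, then ignores the input for a short hold-off so that one click's ringing isn't counted twice. The threshold and hold-off defaults are guesses to be tuned by ear, and `detect_clicks` is a hypothetical name.

```python
def detect_clicks(samples, sr, threshold=0.3, holdoff_s=0.002):
    """Return trigger times (seconds) for a recorded click train, such as a
    Geiger or neutron counter. A trigger fires when |x| reaches the threshold;
    the detector then stays deaf for `holdoff_s` seconds."""
    holdoff = int(holdoff_s * sr)
    triggers = []
    next_allowed = 0
    for i, x in enumerate(samples):
        if i >= next_allowed and abs(x) >= threshold:
            triggers.append(i / sr)
            next_allowed = i + holdoff
    return triggers
```

Once the clicks of a reactor startup merge into continuous noise, a detector like this simply fires at its maximum rate (one trigger per hold-off period), so at that point a measure of signal energy becomes more informative than counting.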

One thing I avoid is "using" sounds and organizing them to my liking. This would betray the science and the scientists who kindly lent themselves to my experiments. What I want to do is to let physical phenomena organize themselves. In other words, I always have a purely generative approach. A machine detects cosmic rays, a machine then transforms them into sounds. What creates the composition is nature. If there are many nuclear reactions in the upper atmosphere, the sound accelerates and increases in intensity. I want the phenomenon and not my aesthetics to create a discourse with the listener. I try to be as transparent as possible. Of course I have to make some decisions, so I don't end up in a John Cage paradox. But the important thing is to maintain consistency with science, letting nature create the music (and I stress music, not just sounds).
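Letting the phenomenon set the pace is easy to sketch: if every detected event triggers a note, the music speeds up on its own as activity rises, and the event rate can additionally drive loudness. The window length, step, reference rate, and function names in this Python sketch are our assumptions.

```python
import math

def rate_curve(event_times, window_s=1.0, step_s=0.5, end_s=None):
    """Events per second inside a sliding window, evaluated every `step_s` seconds.
    Each point is (window end time, rate) and counts events in [t - window_s, t)."""
    times = sorted(event_times)
    if end_s is None:
        end_s = times[-1] if times else 0.0
    if end_s < window_s:
        return []
    steps = int((end_s - window_s) / step_s) + 1
    curve = []
    for k in range(steps):
        t = window_s + k * step_s
        count = sum(1 for e in times if t - window_s <= e < t)
        curve.append((t, count / window_s))
    return curve

def intensity(rate, ref_rate=10.0):
    """Log-compressed gain in 0..1: more events per second means a louder sound,
    reaching full scale at `ref_rate` events per second (an assumed calibration)."""
    return min(1.0, math.log1p(rate) / math.log1p(ref_rate))
```

The log compression keeps quiet stretches audible while preventing sudden bursts from clipping.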

I repeat once again that this is a Pythagorean approach. The ancient Greeks believed that the universe was a kind of immense musical composition, ordered according to laws that could be deciphered through mathematics. Through mathematical and technological tools we can listen to this “musica universalis”. As you know, this is also the thinking of Xenakis, to whom I feel very close.

Tools For Sonification

Despite the fascinating nature of Giorgio Sancristoforo's experiments, we would by no means advise you to try them at home, as working with radiation requires a very specific set of skills and knowledge. However, if this article inspires you to dive deeper into the realm of sonification, there are some tools we can recommend to start with, and as our world is full of wonders, there is no shortage of things to explore.

We all know that computers are great at processing huge amounts of data, so it only makes sense to start our list of recommendations with software like Max/MSP or Pure Data. Both are valid solutions, although Max, being commercial software, offers better support and an overall smoother interface. The kinds of data you can sonify with them are seemingly endless, and both work well with data pulled directly from the web or from the physical world by means of sensors and microcontrollers.
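As a starting point for feeding external data into Pure Data, the sketch below uses FUDI, the plain-text format that Pd's [netreceive] object understands (atoms separated by spaces, each message terminated by a semicolon). It assumes a patch containing [netreceive 3000], which listens for TCP connections on port 3000, followed by [route data]; the port, the `data` selector, and the helper names are our own choices.

```python
import socket

def fudi(*atoms) -> bytes:
    """Encode one FUDI message: atoms separated by spaces, terminated by ';'."""
    return (" ".join(str(a) for a in atoms) + ";\n").encode("ascii")

def send_series(values, host="127.0.0.1", port=3000, selector="data"):
    """Stream a data series to a Pd patch listening with [netreceive 3000] (TCP).
    Each value arrives as the message 'data <value>'."""
    with socket.create_connection((host, port)) as s:
        for v in values:
            s.sendall(fudi(selector, v))
```

This encoder doesn't escape spaces or semicolons inside atoms, so keep values to plain numbers and single-word symbols.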

If programming doesn't resonate with you, there are many ways to explore this field more intuitively with dedicated hardware. For example, Soma Laboratory's Ether is a very sensitive, pocket-sized, battery-powered device that picks up electromagnetic fields and high-frequency radiation. You can simply connect headphones to it and point it at things around you. Add a field recorder to the mix to capture the amazing world of hidden noises around us.

Koma Elektronik's Field Kit is also a great device for sonification as it features dedicated analog and digital sensor interfaces. The beauty of this is that you can use a variety of sensors with it, turning things like air humidity, temperature, amount of light, distance between objects, skin moisture and other data into control voltage and gate/trigger signals.
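Whatever the hardware, sensor-to-CV conversion tends to boil down to two operations: rescaling a reading into a voltage range, and deriving a gate from it. This Python sketch shows both, with hysteresis on the gate so a noisy reading hovering near the threshold doesn't chatter. The 0 to 5 V range, the thresholds, and the names are assumptions, not the Field Kit's specifications.

```python
def to_cv(reading, lo, hi, v_min=0.0, v_max=5.0):
    """Linearly rescale a sensor reading from [lo, hi] to a control-voltage
    range, clamping out-of-range readings (0 to 5 V is an assumed target)."""
    x = (reading - lo) / (hi - lo)
    x = min(max(x, 0.0), 1.0)
    return v_min + x * (v_max - v_min)

class Gate:
    """Schmitt-trigger style gate: opens at or above `on`, closes only at or
    below `off`, so small fluctuations between the two change nothing."""

    def __init__(self, on, off):
        if not off < on:
            raise ValueError("off threshold must be below on threshold")
        self.on, self.off, self.state = on, off, False

    def update(self, value):
        if not self.state and value >= self.on:
            self.state = True
        elif self.state and value <= self.off:
            self.state = False
        return self.state
```

For example, a humidity reading of 50 on a 0 to 100 scale becomes 2.5 V, while `Gate(60, 40)` stays open as the reading drifts between 40 and 60 after first crossing 60.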

There are a few Eurorack modules that can also be very helpful and fun in your quest to sonify the environment. Instruo's Scion lets you welcome plants, pets, and other organic matter as band members. The module translates biometric data into control voltage signals, and it has a few interesting tricks up its sleeve to make the output very usable in a musical context.

For those who want to look more inward, Soundmachines offers the Brain Interface in both modular and standalone form. The unit is designed to pair with the NeuroSky MindWave EEG headset, and it converts eight brainwave frequency bands into control voltage signals and MIDI data. So instead of playing music, you can just be, and the music will follow.
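For a sense of what such a conversion involves, here is a Python sketch that turns eight band-power readings into MIDI Control Change messages. The band names follow the eight EEG power values the NeuroSky MindWave reports; normalizing each band by total power, and the controller numbers starting at 20, are our assumptions rather than how the Brain Interface actually maps its outputs.

```python
BANDS = ["delta", "theta", "low_alpha", "high_alpha",
         "low_beta", "high_beta", "low_gamma", "mid_gamma"]

def bands_to_cc(powers, first_cc=20):
    """Convert eight non-negative band-power readings into (controller, value)
    pairs. Each band's share of the total power is scaled to the 0-127 range."""
    if len(powers) != len(BANDS):
        raise ValueError("expected one power value per band")
    total = sum(powers)
    if total <= 0:
        return [(first_cc + i, 0) for i in range(len(BANDS))]
    return [(first_cc + i, round(127 * p / total)) for i, p in enumerate(powers)]

def cc_message(channel, controller, value):
    """Raw 3-byte MIDI Control Change message: status 0xB0 | channel (0-15),
    then controller number and value, each limited to 7 bits."""
    return bytes([0xB0 | (channel & 0x0F), controller & 0x7F, value & 0x7F])
```

Using relative rather than absolute power keeps the output in range regardless of how strong the raw readings are, at the cost of hiding changes in overall signal level.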

Sancristoforo's Radiotone is certainly a fascinating instrument, and we hope it inspires you to find your own way to listen to and understand the world around us. In a predominantly visual culture, this project is a great reminder of how powerful sound and music can be in the quest to discover meaning in what often seems to be chaos.