Polyphony. In the world of self-contained synthesizers, this concept has become a fairly standard talking point—after all, whenever you buy a new instrument, it stands to reason that you'd want to know how many notes it can play at one time. Is it polyphonic, meaning that it can play several notes at one time? Monophonic, meaning that it can play only one note at a time? Or perhaps something else entirely?

Funny enough, before electronic instruments, this concept didn't really exist...at least not in the way that we think of it now. In acoustic instruments, the number of simultaneous notes an instrument can play is essentially entwined with the nature of its physical construction. A piano has 88 internal courses of strings, and therefore could hypothetically play 88 simultaneous notes. A standard guitar has six strings, and therefore can play six simultaneous notes...and a clarinet, for instance, has only a single vibrating column of air—so it can play only one note at a time. We accept these truths as part of the physical nature of the instruments themselves, and (except in extreme scenarios) don't typically try to test these boundaries.

Synthesizers are different, in that the "synthesizer" as such is not a single instrument—it itself is a concept: an electronic instrument that offers the user some ability to intentionally define the instrument's timbre, and often some means of altering that timbre over time. Of course, the number of ways this can be achieved is (perhaps) endless...and as such, it's difficult to make universal generalizations about how synthesizers function. It is equally difficult to define their behavior in terms of music that evolved through the use of older, entirely different instruments.

Of course, getting into details about how synthesizers can work is itself a far-reaching endeavor...but today, I want to focus on one interesting aspect of synthesizer design: different approaches to polyphony. This topic extends well beyond what we can cover in a single article. That said, we'll use this article as a space to define some common terms and concepts surrounding synthesizer polyphony—and in the near future, we'll extend the discussion by presenting some specific ways that polyphony can be approached in modular synthesizers. For now, though, we ask a deceptively simple question: what's the deal with polyphony in synthesizers?

Establishing Terms

Before diving deeply into this topic, it's important to note the significant effort made by Marc Doty to bring light to this topic, and to address the way it has changed over time. Doty (Automatic Gainsay on YouTube) is a synthesizer historian, and has been making educational synthesizer content for years—both privately and for organizations such as the Bob Moog Foundation. Doty's excellent series on The History of Synthesizer Polyphony goes into this topic much more deeply than I can here...so do check it out. You'll probably learn something! Let's start our journey here, though, by establishing definitions for some terms...otherwise, things will get confusing super quickly.

First of all, what does "polyphony" even mean? If you break it apart into its roots, it means something to the effect of "multiple sounds." This is an old term for a compositional texture, most closely associated with Renaissance and Baroque music, in which music was composed of multiple distinct melodic lines...as opposed to other textures such as monophony (in which all voices played in unison) and homophony (in which voices performed separate notes, but the same rhythm). This historical musicological term is not where the term "polyphony" comes from regarding synthesizers. In synthesizers, polyphony generally describes how many notes a synthesizer can play simultaneously...though as we go, we'll see that this definition can get a bit muddy.

For the sake of forthcoming discussion, we also need to distinguish between the term "note" and the term "voice." When I say the word "note," I'm talking about a pitch. This has nothing to do with articulation—it's just a pitch, plain and simple. A lot of synthesizers can play multiple notes simultaneously in various ways—but in modern terms, for better or worse, they wouldn't necessarily be labeled as "polyphonic." More on that to come.

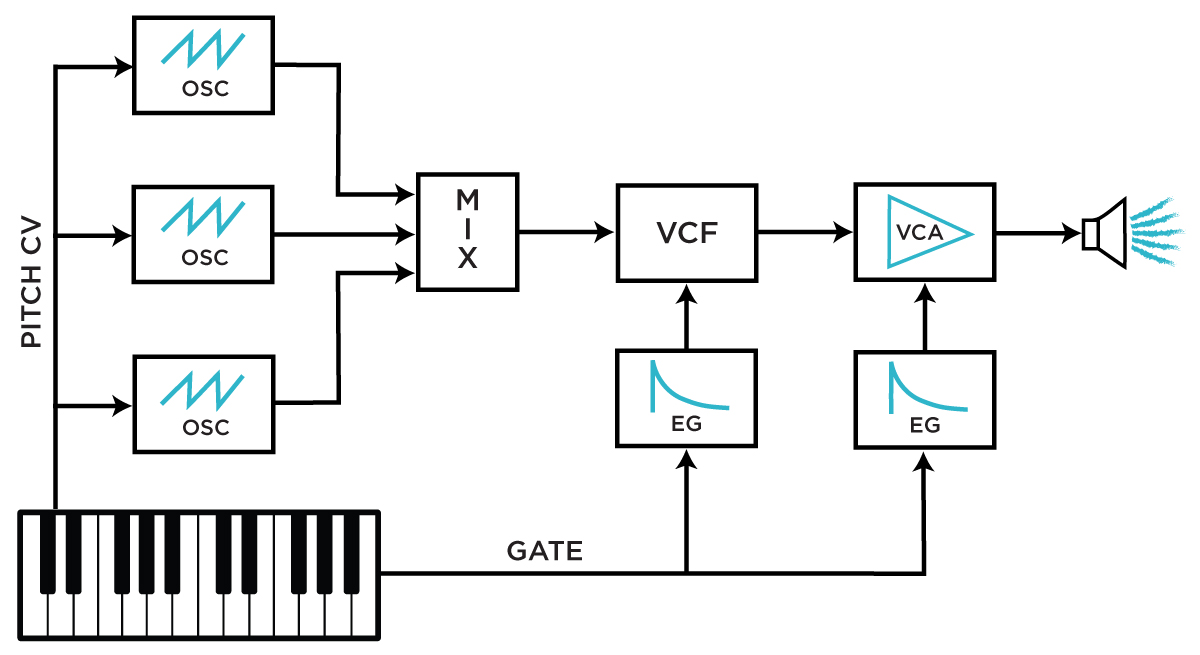

A typical synthesizer voice structure, as in the Moog Minimoog and countless others.

The word "voice," on the other hand, does carry implications about articulation. In a synthesizer, the term "voice" typically refers to an entire sound-making structure—so the conventional synth voice includes the structure that makes sound (usually an oscillator or group of oscillators), as well as the structure that allows for articulation (usually a filter, amplifier, and envelopes). The important thing to note here is that (as we'll see later) the number of notes a synthesizer can play simultaneously is not necessarily the same as the number of full "voices" it contains. The notion of "note count" and the notion of the articulative structure are distinct from one another...though as we'll see, the two are often conflated, and some confusing terminology crops up as a result.

Another point worth noting here: the terminology surrounding synthesizer polyphony is interesting in particular because, for basically the entirety of their existence, synthesizers have existed as commodities. As such, many of the terms that describe their behavior weren't developed by users or people who studied these instruments' history, but instead by their inventors and the marketing departments responsible for selling them. There's nothing wrong with that, of course...but marketing departments are seldom concerned with upholding historical through-lines, and are instead concerned with explaining what makes their product unique. As a result, many terms have cropped up over the years in ways that are perhaps at odds with other terms. Combined with changes in popular use, this has even meant that some terms have re-emerged after decades of disuse meaning something entirely different from what they originally meant.

Confused? That's fine. Let's talk about synthesizers now.

Why Do Synthesizers Have Keyboards Anyway?

So, polyphony. It's about how many distinct notes a synthesizer can play at one time. Not every synthesizer is the same in this regard: the number of notes a synthesizer can play depends directly on the resources that make up its sound structure. In a synthesizer with only one oscillator, for instance, you're not going to develop polyphonic textures. Of course, the history of electronic instruments now goes back well over 120 years, but for the sake of our conversation here, let's drop in at the 1960s for some quick thoughts on the early days of synth development.

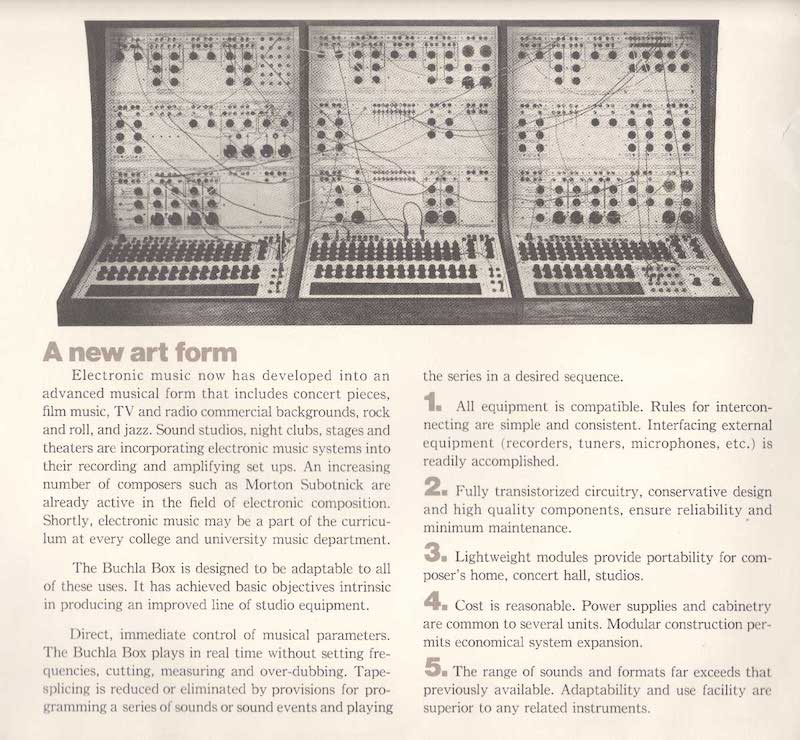

A CBS / Buchla 100 Series system brochure, via Buchla USA

The original synthesizers as we might think of them today (early voltage-controlled modular systems) are especially unusual in this regard. When these instruments were first developed, polyphony wasn't necessarily a direct concern in the same way that it is today. When an instrument is made up of an arbitrary arrangement of oscillators, filters, mixers, amplifiers, etc., there are practically countless ways to reorganize those internal components, so pinning down "how many notes" a modular system can play at one time is, well, difficult. The answer changes depending on the patch. An early Buchla 100 system, for instance, might have many oscillators, each of which could be controlled separately, so that many distinct sounds or pitches could be produced simultaneously if that's what the user wanted. These instruments were about exploring experimental approaches to sound generation and control...and as such, they weren't necessarily concerned with concepts like the "note," harmony, or conventional rhythm. After all, the synthesizer was a completely new instrument: one which produced a new type of music, and which need not have a playing interface that resembled anything familiar.

In the early days of synthesizer development, though, Bob Moog's instruments took a slightly different turn (for more on this topic, I'd strongly recommend checking out the excellent book Analog Days by Trevor Pinch and Frank Trocco, which details the early parallel development of Moog and Buchla's instruments). After much deliberation and consultation, Moog elected to include a keyboard as a playing interface for his instrument, which, if only for the sake of marketability, was probably a smart move. However, for the sake of our current conversation, it's also where things start to get weird.

A modern reissue of Moog's classic System 55, complete with keyboard control

In a kind of peculiar way, with Buchla's early instruments, what you see is what you get: you see something foreign and unlike prior instruments...and in fact, it was a new instrument that need not be approached in conjunction with any extant musical tradition. With Moog's instruments, though, something else was happening: despite the fact the keyboard could be used in any number of ways (after all, it was just a source of control voltages), it truly did invite users to approach it in a more traditional fashion than did Buchla's instruments. And despite the fact that the keyboard wasn't even necessary to make music with the synthesizer, the instrument began to be seen as a keyboard instrument—and as such, traditional composers and keyboardists tended to be the performers who gravitated toward it. Gradually, Moog and other manufacturers started making smaller and increasingly self-contained synthesizers that packed in a small number of essential synthesis building blocks and included a keyboard as the primary playing interface—the Moog Minimoog being chief among them. After a few short years, the term "synthesizer" for many people implied the presence of a keyboard.

Imagine yourself, though, as a keyboardist in the late 1960s and early 1970s. What are your primary instruments? Well, probably piano, maybe some form of organ, and maybe some form of electromechanical instrument like a Rhodes or Wurlitzer. Aside from a similar user interface, what do these instruments all have in common? Well, in modern terms, they're fully polyphonic: the limit to the number of simultaneous notes is the same as the number of keys. As such, many aspects of your playing style likely hinged around the fact that your instrument could play multiple notes simultaneously. Chords, vamping, counterpoint—it all requires rapid simultaneous access to a large number of notes, and keyboardists develop extensive technique related to the capabilities that polyphonic keyboard instruments provide.

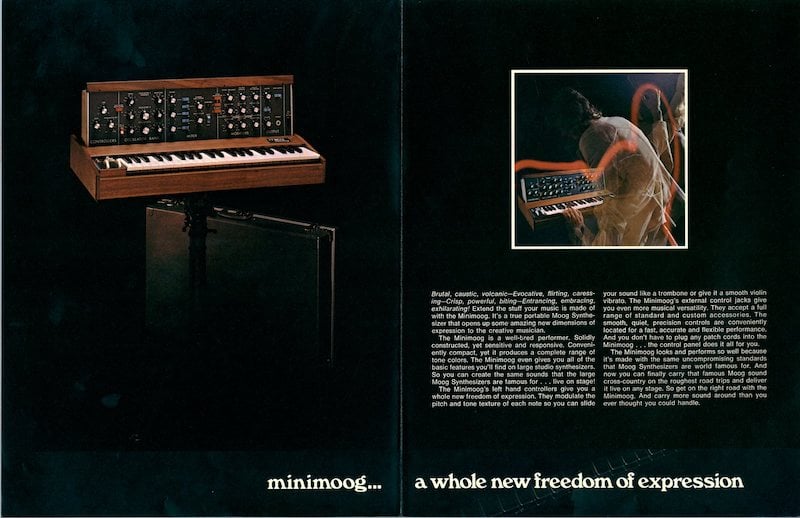

A vintage Minimoog advertisement, via cdm.link

So what do you suppose would happen when you encountered a Minimoog for the first time? Well, you very well might not be very good at playing it. Because the Minimoog only has the internal makings of a single synth voice (a few oscillators all linked to the keyboard, a single filter and amplifier, and two envelopes dedicated to the filter and amp, respectively), it is designed to act as a basically monophonic instrument, and if you go to it expecting to be able to play chords or even to be able to hold keys down when playing new notes, you'll run into some unexpected behavior. There are tons of stories (some perhaps apocryphal) about musicians being confused and frustrated about the fact that their Minimoog couldn't play chords, some even convinced that it was broken. Regardless of the authenticity of all of those stories, though, the truth is that many instrumentalists did approach the synthesizer expecting to play it the way they played organ or piano, only to find that they needed to develop another playing technique altogether. (Of course, plenty did, often to excellent and idiosyncratic effect.)

The fact is, there are plenty of ways for a synthesizer to be polyphonic, and synthesizers as we broadly think of them have always had the capability of being polyphonic (after all, modular systems offer plenty of ways of creating textures made up of multiple simultaneous notes). But as the synthesizer became more and more widely considered to be a keyboard instrument, alternative approaches to polyphony did not seem satisfactory to keyboard players. The market demand for "polyphonic synthesizers," then, isn't really about the nature of the synthesizer so much as it is about the nature of the keyboard. In the words of Marc Doty:

"Polyphony requiring new technology and new techniques doesn't feel like polyphony. It's hard to explain. Polyphony, I think, is inexorably tied to keyboards and traditional music."

I have to agree.

So How Do You Make a Polyphonic Synthesizer?

So, as market demand for synthesizers that could play more than one note simultaneously emerged, synthesizer designers tried out a lot of potential approaches. Given that "the synthesizer" as a concept was fairly new, it wasn't yet clear what combination of raw ingredients (oscillators, filters, etc.) would make for a satisfactory playing experience...and of course, being that the fine folks designing synthesizers were also concerned with minimizing overhead and selling synthesizers, finding a practical balance between user demand and affordability was key. As such, many approaches to polyphony emerged.

So we know that the first self-contained, non-modular keyboard synthesizers were what we call monophonic—meaning in broad strokes that they could only play one note at a time. Interestingly, this wasn't exactly because they only had one oscillator...in fact, the Minimoog contained three, and it was (and is) a fairly common practice to tune the oscillators to different pitches. What makes the Minimoog feel monophonic, though, is that by default, those oscillators' pitches are all directly tied to the keyboard—and the keyboard only produced a single CV signal to send to the oscillators. So even if the oscillators were tuned to different pitches, they would all follow the same melodic contour. Furthermore, these oscillators are mixed together and then sent along to a single filter (with dedicated envelope) and then to a single amplifier (with dedicated envelope). You can think of the filter+amp+envelope combination as an articulative structure, to use Marc Doty's words: a way of imparting articulation for the current sound. Monophonic synths like the Minimoog feature a monophonic playing interface, a single sound generating structure (the oscillators), and a single articulative structure.
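As a rough sketch of that shared-pitch behavior: the snippet below assumes pitches are written as MIDI-style note numbers rather than control voltages, purely for readability. The names `TUNING_OFFSETS` and `oscillator_pitches` are hypothetical and only illustrate the idea that three differently tuned oscillators still follow one keyboard signal.

```python
# Hypothetical tuning offsets, in semitones, for three oscillators
# that all listen to the same single keyboard signal.
TUNING_OFFSETS = (0, 7, 12)  # root, a fifth up, an octave up


def oscillator_pitches(key, offsets=TUNING_OFFSETS):
    """Return the pitch of each oscillator for one keyboard value.

    `key` is a note number (60 is middle C in MIDI terms). Because there
    is only one keyboard value, every oscillator moves by the same amount.
    """
    return [key + offset for offset in offsets]


# A three-note melody: the oscillators form the same interval stack on
# every note, so the result is one thick line rather than independent parts.
for key in [60, 62, 64]:
    print(key, oscillator_pitches(key))
```

However the offsets are set, the three oscillators trace the same melodic contour, which is why the instrument still feels monophonic.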

So, how do you make a polyphonic synthesizer? The utopian answer might be to copy+paste your Minimoog voice circuitry to match the number of available keys, et voila! You have a polyphonic synthesizer. Of course, in the real world, the question was: how do you make a polyphonic synthesizer that a) is reasonably small, and b) won't bankrupt you. Sadly, our hypothetical copy+paste Minimoog monstrosity, while awesome in my imagination, fits neither of these criteria. So instead, designers looked for alternative methods.

And as such, in the early days of analog keyboard synths, three primary alternative approaches came about: one method which uniquely leveraged monosynths' internal resources, one method that messed with the note:articulative structure ratio, and one method which required diving into the world of digital control.

Approach 1: Duophony

The concept of the duophonic keyboard synth came about in the late 1960s/early 1970s, famously as part of Moog's 952 keyboard, ARP's Odyssey, Octave's The CAT, and others. Typically, instruments capable of duophony were primarily monophonic: they contained a set of oscillators which were mixed together and had a single articulative structure. However, by flipping a switch or applying patch cables, they could produce two note CV signals from the keyboard, which could then be directed to separate oscillators.

This worked by using a combination low-note/high-note priority keyboard. The ARP Odyssey, for instance, has two oscillators. If you play a single note on the keyboard, the same CV is applied to each oscillator, and each oscillator tracks together, as you'd expect! When you play two notes at once, though, a CV produced from the lower key goes to one oscillator and a CV from the higher key goes to the other—allowing you to play two notes at a time. Of course, these oscillators share the same articulative structure; they still get mixed together and share the same filter, amp, and envelopes. More on this later...
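The low-note/high-note scheme described above can be sketched in a few lines. This is a simplified model, not a description of any particular keyboard circuit: pitches are note numbers instead of voltages, the function name `duophonic_assign` is hypothetical, and which oscillator receives the low note is an assumption made for illustration.

```python
def duophonic_assign(held_keys):
    """Map the currently held keys onto two oscillators.

    Returns (osc1_pitch, osc2_pitch), or None when nothing is held.
    Assumed routing: the lowest held key drives oscillator 1 and the
    highest drives oscillator 2. With a single key, both oscillators
    receive the same pitch. Keys in between are ignored.
    """
    if not held_keys:
        return None  # simplification: real keyboards typically hold the last CV
    return min(held_keys), max(held_keys)


print(duophonic_assign([60]))          # one key: both oscillators track together
print(duophonic_assign([64, 60]))      # two keys: low note and high note split
print(duophonic_assign([60, 64, 67]))  # three keys: the middle one is ignored
```

Note that nothing here touches the filter, amplifier, or envelopes: both pitches still pass through the one shared articulative structure.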

Duophony was a simple, succinct, and effective way to quell some of the general thirst surrounding polyphony, and many designers adapted their instruments or built new instruments entirely to take advantage of the concept. Early keyboards for the ARP 2600, for instance, didn't include this feature, but the later model 3620 keyboard did—and of course, duophony was one of the primary selling points for Octave's The CAT. But of course, at the end of the day, two notes are still just two notes, and a single articulative structure is still a single articulative structure...so this novel approach to instrument design was not an exact solution to musicians' demand.

Approach 2: Mess with that Note Count : Articulative Structure Ratio

Another solution was inspired in part by technology from the older Hammond Novachord. The Novachord was, for all intents and purposes, a fully polyphonic instrument in the manner of a piano or organ...one which used tube-based oscillators for sound generation. Now you might be thinking...that's a lot of keys—are there really tube oscillators for each one? Well, no, not exactly.

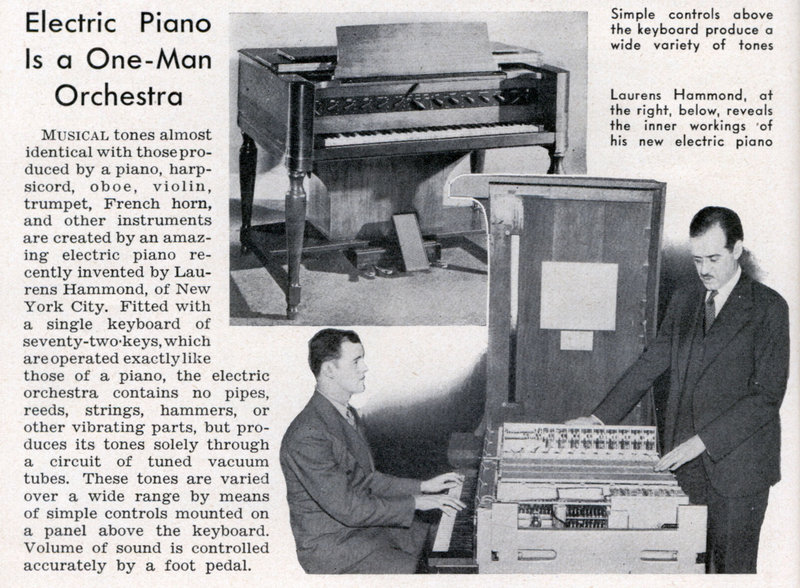

An advertisement for the Hammond Novachord "electric piano," via archive.org

The Novachord uses what we now generally call top octave division to generate all of its notes. In essence, there are twelve full-blown oscillators that produce the pitches of the highest octave of the keyboard. Then, a series of frequency dividers is used to extrapolate all of the lower octaves from there. The keyboard itself in this situation no longer needs to be concerned with communicating information about pitch at all: each key just needs to gate (or unmask) the appropriate divided signal. Problem solved: you're able to generate every note you need for several octaves of a 12-tone scale with only twelve oscillators and a few divider circuits each.
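To make the arithmetic concrete, here is a minimal sketch of top octave division. It assumes equal temperament with A4 at 440 Hz and a six-octave range, both chosen for illustration rather than taken from any specific instrument. The names `top_octave` and `divide_down` are hypothetical.

```python
A4_HZ = 440.0  # assumed tuning reference
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def top_octave(octave=7):
    """Frequencies of the twelve 'real' oscillators, C through B."""
    # MIDI-style note number of C in the given octave (C4 = 60, A4 = 69).
    c_note = 12 * (octave + 1)
    return [A4_HZ * 2 ** ((c_note + i - 69) / 12) for i in range(12)]


def divide_down(top, octaves_below):
    """Derive a lower octave by halving each top-octave frequency repeatedly."""
    return [freq / (2 ** octaves_below) for freq in top]


# Build every pitch on the keyboard from only twelve source frequencies.
top = top_octave(7)
keyboard = {}
for octaves_below in range(6):  # six octaves, 72 notes in total
    octave = 7 - octaves_below
    for name, freq in zip(NOTE_NAMES, divide_down(top, octaves_below)):
        keyboard[f"{name}{octave}"] = freq

print(len(keyboard), round(keyboard["A4"], 2))
```

Each key then only has to switch its own entry in `keyboard` on or off; it never needs to tell an oscillator what pitch to play.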

The top octave division concept became the basis for loads of synthesizers in the '70s: it made it fairly easy to generate all the individual pitches you'd need for every key on the keyboard to be playable simultaneously. But that's only part of the problem...so what about the articulative structure?

One of the first attempts to create a commercial synthesizer using this concept was the ill-fated Moog Apollo. The original version of the Apollo (see this video for some info on its storied history) fit this structure exactly: it used top octave division to generate all of its "oscillators" and used the keyboard as a means of gating those oscillators so that they only produced sound when a key was pressed. But of course, a Moog synthesizer wouldn't have been a Moog synthesizer without a Moog filter, right? Well, of course, it wasn't practical to put a Moog ladder filter on each individual key—so the proposed workaround was to apply a single global filter and amplifier to the entire instrument, each with a dedicated envelope: applying a single global articulative structure to an otherwise fully polyphonic instrument. It's a sensible compromise, but it's not without its quirks: the filter could conceivably cut out higher notes as it decays, and depending on your envelope settings, rearticulating a single note on the keyboard could cause all of the other held keys to seem to rearticulate as well.
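The retriggering quirk is easier to see in a toy model. The sketch below is not a model of the Apollo's actual circuitry: it assumes an instant attack, a linear decay, and an envelope that restarts on every key press, and the class name `GlobalEnvelopeSynth` is hypothetical.

```python
class GlobalEnvelopeSynth:
    """Every key gates its own tone, but one envelope shapes the summed output."""

    def __init__(self, decay_per_step=0.25):
        self.held = set()
        self.level = 0.0  # current level of the single shared envelope
        self.decay_per_step = decay_per_step

    def key_down(self, key):
        self.held.add(key)
        self.level = 1.0  # assumed behavior: any new key restarts the envelope

    def key_up(self, key):
        self.held.discard(key)

    def step(self):
        """Advance time by one step; the shared envelope decays toward zero."""
        self.level = max(0.0, self.level - self.decay_per_step)

    def output(self):
        """Level heard for each held key: all of them share one envelope."""
        return {key: self.level for key in sorted(self.held)}


synth = GlobalEnvelopeSynth()
synth.key_down(60)
synth.key_down(64)
synth.step()
synth.step()
print(synth.output())  # both held notes have decayed together
synth.key_down(67)
print(synth.output())  # the new key has re-articulated the old notes too
```

In a voice-per-note design, the third key press would only affect the third note; here the two notes that were already fading jump back to full level with it.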

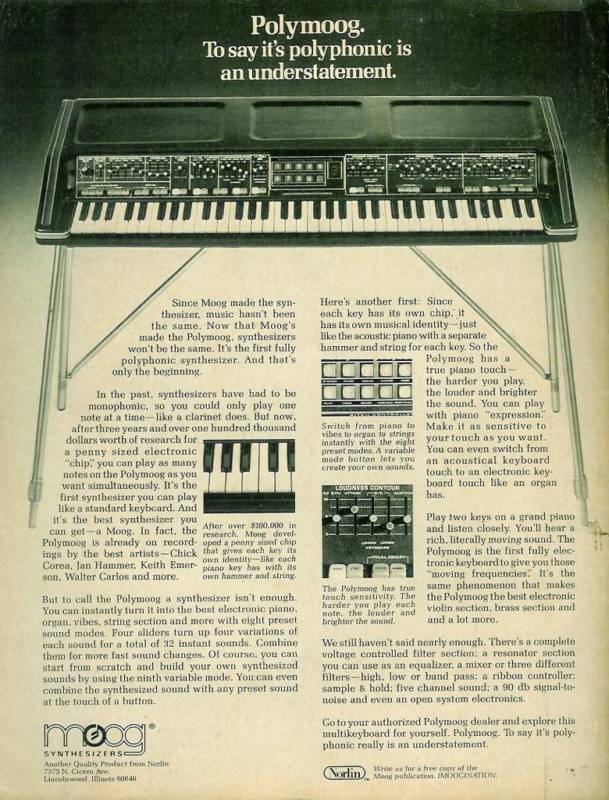

"To say it's polyphonic is an understatement"

A quick aside—after some critical feedback, the Apollo was reworked into the Polymoog—for which Moog developed an application-specific integrated circuit called "the Polycom chip," which provided a basic single articulative structure on a chip. With one of these chips per key paired with the top octave division note generation scheme, the Polymoog achieved "full polyphony" with independent articulation per-key...and though each key didn't have a Moog transistor ladder filter, the Polycom chip did have a basic filter and met the criteria for what we'd now call a polyphonic instrument. It did keep the global transistor ladder filter, but had no global VCA post-filter.

Anyway, the structure of top-octave division with individual notes gated by each key, combined with a single global articulative structure, became quite common. For years it was the predominant way of achieving polyphony in an affordable package, and it became a go-to means of implementing polyphony in a fascinating group of instruments meant to act simultaneously as some combination of synth, organ, and string machine, such as Korg's Trident and Delta, Moog/Realistic's Concertmate MG-1, and (notably) Roland's Paraphonic-505. Roland coined the term "Paraphonic" to describe the instrument's multi-timbral architecture: it offered several separate timbres in parallel which could be blended as desired. However, for better or worse, over time the term "paraphonic" evolved to describe that other aspect of the instrument's architecture: the fact that its many simultaneous tones were mixed and sent through a single articulative structure. We'll come back to paraphony in a moment...as the term's meaning has continued to change even beyond that.

Approach 3: Digital Note Allocation

As you can likely surmise, not having an independent articulative structure for each playable note on the keyboard was (and is) still an odd experience for a player used to the piano, where each note's articulation is fully independent. But as we've said, making a "fully polyphonic" synthesizer where each note is simultaneously accessible and offers individual articulation is tricky—remember, we can't copy+paste the Minimoog dozens of times and stuff it into a reasonably portable/affordable instrument. In the 1970s, many manufacturers began to pursue another method which struck a compromise between an instrument's internal analog resources and the number of distinct notes that could be articulated simultaneously.

The solution involved creating an instrument that could intelligently assess which notes were being played on the keyboard and dynamically assign those key presses to individual synth voices. At that point, the manufacturer would need only to settle on the number of analog voices they wanted in their instrument and decide on how note allocation/priority would be handled.
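A minimal sketch of that allocation step might look like the following. The policy shown (use a free voice if there is one, otherwise steal the voice that has been sounding longest) is just one common choice, assumed here for illustration; the class name `VoiceAllocator` and its methods are hypothetical and do not describe any specific instrument's firmware.

```python
class VoiceAllocator:
    """Assign key presses to a fixed pool of voices.

    Assumed policy: use a free voice if one exists; otherwise steal the
    voice that has been sounding the longest.
    """

    def __init__(self, voice_count):
        self.voice_count = voice_count
        self.active = {}  # key -> voice index, oldest allocation first

    def note_on(self, key):
        if key in self.active:
            return self.active[key]  # key already sounding; reuse its voice
        used = set(self.active.values())
        free = [v for v in range(self.voice_count) if v not in used]
        if free:
            voice = free[0]
        else:
            oldest_key = next(iter(self.active))  # dicts keep insertion order
            voice = self.active.pop(oldest_key)   # steal the oldest voice
        self.active[key] = voice
        return voice

    def note_off(self, key):
        """Release the voice held by `key`, if any, and return its index."""
        return self.active.pop(key, None)


allocator = VoiceAllocator(voice_count=2)
print(allocator.note_on(60))  # first free voice
print(allocator.note_on(64))  # second free voice
print(allocator.note_on(67))  # pool exhausted: the oldest note loses its voice
```

The two design decisions the paragraph mentions show up directly: `voice_count` is how many analog voices the manufacturer builds in, and the body of `note_on` is the allocation/priority rule.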

One of the first great examples of this type of instrument came in the form of Oberheim's multiple voice systems: the Two Voice, Four Voice (pictured above), and Eight Voice. These paired an appropriate number of Oberheim SEMs, or Synthesizer Expander Modules (each a full voice with oscillators, filter, LFO, envelopes, and VCA), with a digitally-scanned keyboard (licensed from Dave Rossum of E-mu) which would appropriately allocate new notes to each of the SEMs. Of course, this isn't really "full polyphony" (whatever that means), in that the maximum number of notes you can play simultaneously is limited to the number of voices included in the instrument...but it's a fairly practical compromise, in that you seldom need to play in excess of eight simultaneous notes, for instance, and can usually adapt your playing to effectively make use of whatever number of voices any given instrument is capable of producing. It's certainly more natural than adapting to an instrument with higher polyphony but only a single articulative structure.

One of the interesting aspects of these Oberheim instruments was that each SEM still had individually definable timbre: each voice had its own set of tone controls. As such, you could do a range of truly remarkable things—each new keypress could address an entirely new timbre, making for melodies whose apparent timbre cyclically changes note-by-note. However, many musicians used these in such a way that each of the SEMs produced the same sound, side-stepping a potentially quite interesting means of interacting with a polyphonic electronic instrument. After all, wouldn't it be incredible to develop a thoughtful way of playing multiple timbres from a single user interface?

Modern reissue of Sequential's Prophet-5 polyphonic synthesizer

In 1978, one instrument in many ways solidified our concept of what an analog polyphonic synthesizer is: the Sequential Circuits Prophet-5. With prior experience working with then-new microcontroller technology, Dave Smith was able to create an instrument which not only successfully addressed five internal synth voices from a keyboard, but also had full patch memory and a consolidated user interface that allowed you to simultaneously program all five voices to sound the same as one another. This was a remarkable feat, and clearly exactly what many musicians wanted: a synthesizer where each voice had individual articulation, where each voice had a unified sense of timbre, and where you could play polyphonically with a reasonable voice count.

Of course, work continued in the 1980s developing interesting ways of implementing multi-timbral approaches in instruments that used this basic digitally-controlled analog approach, including such remarkable instruments as Oberheim's Xpander and Matrix-12. However, with the introduction of the Yamaha DX7 in 1983, things shifted significantly, not only because of the groundbreaking changes in sonic possibilities using FM synthesis, but also because this digital instrument didn't have to make compromises about polyphony in the same way as its analog forebears. It was capable of fully articulated 16-note polyphony at a fraction of the cost of even the five-voice Prophet-5. For many working musicians, analog synthesizers began to seem like they had too many practical limitations to be worth it. Digital instruments became the norm, polyphony expanded considerably, and many of these older approaches to instrument design seemed obsolete.

Reviewing Terms

So in analog keyboard synths, there are a handful of approaches to polyphony. Let's quickly review some terms we've discussed—we'll discover as we go that some of them are a bit more knotted and foggy than others.

Monophonic instruments contain a single voice—a single pairing of a sound generating structure and an articulative structure. Duophonic instruments traditionally used the same general architecture of monophonic instruments, but provided a way of separately addressing their oscillators' pitches using keyboards that could detect the lowest and highest notes currently being played. In a classic duophonic instrument, the two oscillators are not individually gated and have no independent articulation.

Things get more complex from here. Ordinarily you'd hear that the other options are "polyphonic" and "paraphonic," but what these terms mean is somewhat questionable. "Polyphonic" really should just mean that the synthesizer can play multiple notes at the same time—in which case, duophony and paraphony are types of polyphony. Furthermore, "Paraphonic" originally meant that a synthesizer had multiple simultaneous layered timbres, but eventually its popular meaning changed to refer to the top-octave-division, individually-gated-pitches, single-articulative-structure synthesizers.

But what do these words mean in popular usage? Well, typically the term polyphonic is now used to mean "this synthesizer can play multiple voices with independent per-note articulation." A synthesizer could be four-voice polyphonic, eight-voice polyphonic, and so forth: the term itself doesn't indicate anything about the maximum number of simultaneous notes the instrument can play. An interesting note I picked up from the aforementioned Marc Doty video series: Bob Moog originally proposed that this sort of architecture be referred to as "multiphonic," which hasn't quite caught on as a term.

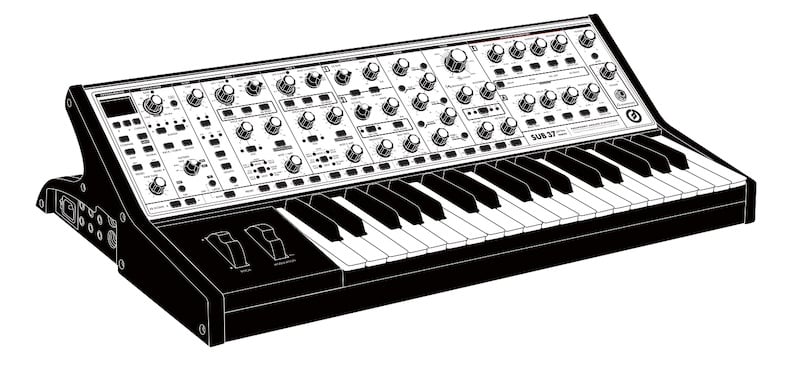

Moog's questionably "paraphonic" Sub 37

These days, the word paraphonic gets used in a lot of different ways—and this is partly where marketing departments can occasionally make a mess of standard terminology. As we know, the term "paraphonic" had already shifted meaning prior to the 2010s—but with the release of a few key instruments in the last decade, it has gone even further from its original meaning. The Moog Sub 37 in particular upon release boasted that it was a "two-note paraphonic" synthesizer...the use of the term in this context was intended to make it clear to consumers that you could play two pitches at once (using the two primary oscillators), but that those notes shared a single articulative structure. Could they have just called that duophonic? Yes, it entirely fits the definition—but it seems Moog's marketing team was concerned that consumers might take "duophonic" to mean something more like "two-voice polyphonic." An understandable concern, but it further complicates the definition of "paraphonic"—and in fact, the popular understanding of the term is now much more broad than it ever has been.

So the simplest, most universally true thing about synthesizers currently marketed as being "paraphonic" is that they are capable of playing more than one simultaneous note, and that all sound sources are summed together and sent through a single articulative structure. These days, most "paraphonic" instruments do not rely on top-octave division and instead use a digital scanning/allocation scheme. Furthermore, the individual notes may or may not be individually gated prior to the articulation stage depending on the instrument (and some such instruments, such as Moog's Matriarch, allow the user to choose whether the oscillators are gated or not).

Arturia's Microfreak, bending the definition of "paraphonic"

Further complicating things, some "paraphonic" instruments such as the Sequential Pro-2, Pro-3, Microfreak, and others offer multiple ways of re-routing the internal oscillators and/or imparting variable dynamic articulation on each oscillator prior to the global articulation structure. (Doty proposes the word "variophonic" for instruments which offer this sort of internal customization of the internal signal flow and relationship of the "note count" to articulative structures). So at this point, the term "paraphonic" is actually quite vague—and if you see an instrument described as being paraphonic, it still leaves some questions unanswered. How many notes can it play at once? Can these notes be individually articulated prior to the global articulation structure? If so, are they gated (on/off) or can the dynamic contour be arbitrarily defined? Is there a single global articulative structure, or multiple? These are things you'll need to consider if you're interested in an instrument that is labeled as being paraphonic.

Whoa. So let's regroup: in this article, I've endeavored to give a quick overview of the matter of polyphony in synthesizers. Hopefully you're walking away with a greater understanding of the nuance in creating definitions for polyphonic synthesizer structures, and slightly more aware of all of the ways that a keyboard synthesizer can be polyphonic.

In forthcoming articles, we'll tackle the concept of polyphony from the perspective of a modular synthesist rather than a keyboardist, which will lead us to discover tons of options for creating polyphonic textures that we haven't yet addressed. We'll discuss polyphonic approaches in purely analog modular synths, but we'll also discuss several contemporary approaches to polyphony gaining traction in the world of Eurorack synthesizers, where digital control and digital sound generation are increasingly common.

Of course, you might also walk away from this article sort of confused about the whole topic. If that's how you're feeling at the moment, don't worry—if nothing else, there are a few useful takeaways:

- 1) Synthesizers are nearly all different from one another. There's no one way to build a synth, and there are always plenty of ways to approach a single musical goal. Some ways will be more or less universally useful than others.

- 2) Words...are weird. They can change, and occasionally that makes it difficult to accurately communicate ideas.

- 3) You don't need to sweat it. For most people, words don't need to be involved in the process of music-making—and you can still have a blast without giving all this too much thought.