The growing popularity of synthesizers has led to increased interest in what these mesmerizing machines are and how they work. Learning synthesis can seem daunting: with its extensive abstract vocabulary, its background in science and academia, and its constantly evolving shapes and methods of interaction, it can easily overwhelm the newcomer.

The cable jungle usually associated with modular synthesizers doesn’t really help to combat this apprehension, but ironically, modular synthesizers may be the best place to start learning. Any complex system is as complex as the sum of its parts, hence the key to understanding it lies in breaking the whole thing down to its core elements.

Gaining a thorough knowledge of the different types of modules and their functionality will serve as a solid foundation. The goal of this article series is to deconstruct an arbitrary synthesizer to its basic modules. We’ll start by addressing the general concepts and key actors in this article and zoom in on the details in future episodes.

Divide and conquer

Any synthesizer can generally be subdivided into four macro categories. Generators produce audible signals. Most commonly they come in the form of oscillators, but they also include various audio playback devices, such as samplers, and other noise-emitting non-organic entities. Modulators are circuits that are designed to control and animate parameters of a given synthesizer. These may include clocks, LFOs, envelope functions, sample and holds, random voltage generators, sequencers, and other controller interfaces. Processors are units that pass signals through and alter them in one way or the other, e.g. filters, effects units, waveshapers, wavefolders, equalizers, mixers. Utility is a category that includes things like logic modules, quantizers, attenuators, polarizers, multiples, switches, amplifiers, and more.

Dialects of the Sound Synthesis Language

Any given synthesizer deals mainly with three types of signals: audio, CV, and gate. Audio signals occupy the portion of the spectrum that falls within the limits of human hearing, generously approximated as 17 Hz to 22 kHz. Control voltages (CV) are signals that typically live in the infrasonic realm, at frequencies below our hearing range. We use control voltages to automate and manipulate synthesizer parameters. Volts per octave (V/oct) and Hertz per volt (Hz/V) are two scaling conventions for control voltages that set the pitch parameter: in the first, each additional volt raises the pitch by one octave; in the second, frequency rises in direct proportion to voltage.
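The two pitch scalings can be compared numerically. The sketch below is only an illustration: the reference frequency at 0 V (`base_hz`) and the `hz_per_volt` constant are assumed values, since the actual calibration depends on the instrument.

```python
def v_per_oct_to_hz(volts, base_hz=261.63):
    """V/oct scaling: every additional volt doubles the frequency.

    base_hz is the (assumed) frequency produced at 0 V.
    """
    return base_hz * 2.0 ** volts


def hz_per_volt_to_hz(volts, hz_per_volt=55.0):
    """Hz/V scaling: frequency grows linearly with voltage.

    hz_per_volt is an assumed calibration constant.
    """
    return hz_per_volt * volts


if __name__ == "__main__":
    for volts in (1.0, 2.0, 3.0, 4.0):
        print(volts, v_per_oct_to_hz(volts), hz_per_volt_to_hz(volts))
```

Under V/oct, a semitone is always 1/12 of a volt. Under Hz/V, going up an octave means doubling the voltage, so the voltage step per semitone grows with pitch.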

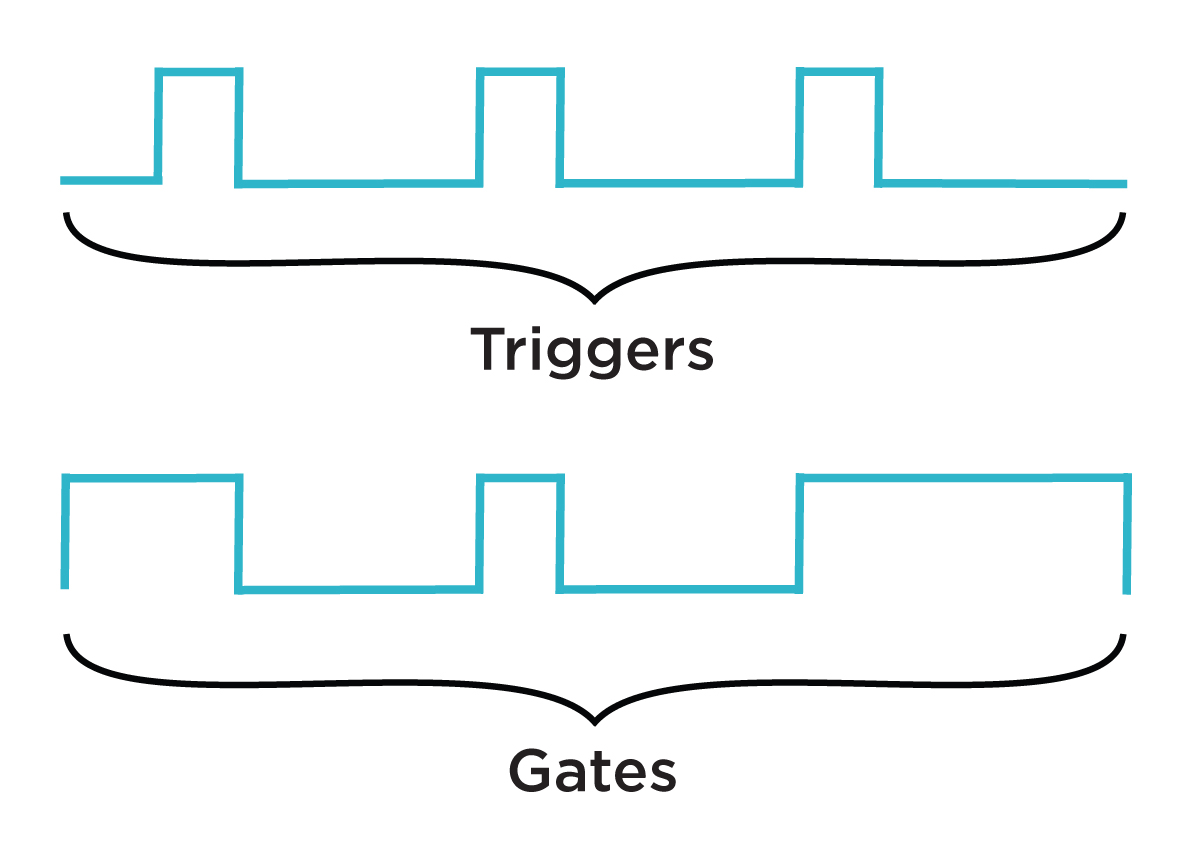

The terms gate and trigger are often used interchangeably. Both are pulses that function much like an on/off switch and are generally used to initiate musical events. A gate differs from a trigger by its width: while triggers are short pulses, gates can have an arbitrary, user-definable width.
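As a rough illustration of the difference, both signals can be modeled as the same pulse with a different width. The helper name `pulse`, the step-based timing, and the 5 V high level are assumptions made for this sketch.

```python
def pulse(total_steps, start, width, high=5.0):
    """Return a list of voltages: `high` for `width` steps from `start`, else 0 V."""
    return [high if start <= step < start + width else 0.0
            for step in range(total_steps)]


# A trigger is a pulse of minimal width; a gate stays high for as long as needed.
trigger = pulse(total_steps=16, start=4, width=1)
gate = pulse(total_steps=16, start=4, width=8)

if __name__ == "__main__":
    print("trigger:", trigger)
    print("gate:   ", gate)
```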

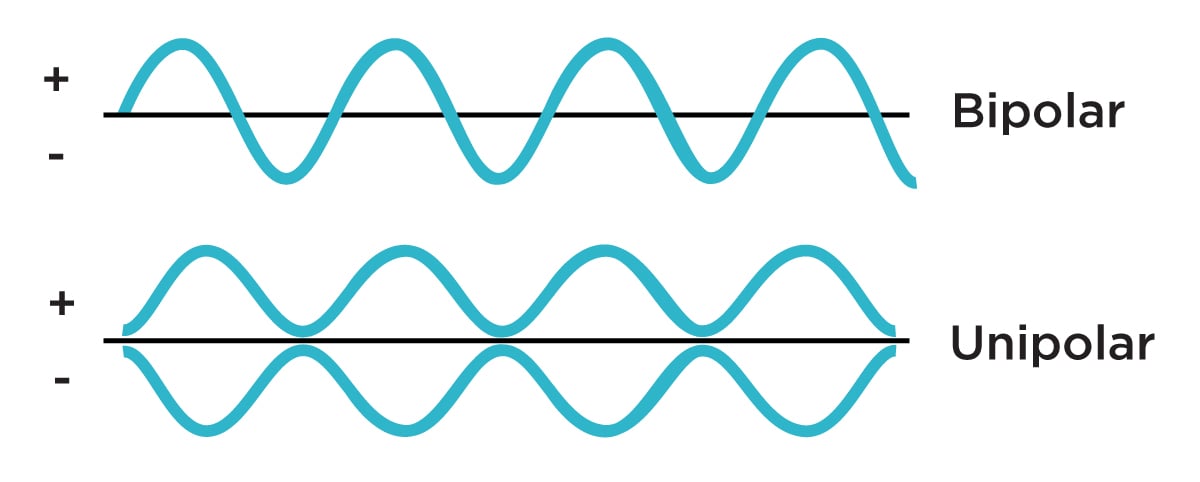

Polarity is another important concept in sound synthesis. Since signals can be represented as both positive and negative voltages, understanding the directionality of a voltage is essential to controlling specific parameters. Although there is no fixed voltage standard, most synthesizers operate somewhere around +/-15 volts; the Eurorack format lives comfortably within +/-12 volts. Unipolar CV signals are usually contained within a 0–10 volt range, while bipolar ones commonly reside between -5 and +5 volts. Gates and triggers output a fixed voltage when activated, which varies considerably between manufacturers, from roughly 2 to 10 volts.

Note that CV/gate is an analog solution to synthesizer control. Two other methods of interaction were born with the evolution of digital technologies: MIDI and OSC. MIDI (Musical Instrument Digital Interface) is an industry-standard communication protocol developed in the early ’80s that replaced several manufacturer-specific protocols, including Roland's DCB (Digital Control Bus).

Most modern pieces of music (and occasionally video and light) hardware and software have MIDI protocol implemented in their architecture. It is probably the most widely used method of control and communication for electronic music instruments to this day. OSC (Open Sound Control) is an immensely powerful network-based communication protocol developed by Matt Wright and Adrian Freed. Though not as widespread, Open Sound Control offers a ton of advantages over MIDI, specifically in terms of resolution, flexibility, accuracy, and interoperability.

All three (CV/gate, MIDI, and OSC) are, for the most part, interchangeable. They simply represent different paths to achieving similar sonic results, with each having particular strengths and weaknesses.
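To make the comparison concrete, here is a sketch of how one pitch might be expressed in each of the three systems. The MIDI Note On layout (status byte, note number, velocity) and the OSC string padding rule follow the published specifications; the OSC address `/synth/freq` and the choice of 0 V for MIDI note 60 are hypothetical and would depend on the receiving instrument.

```python
import struct


def midi_note_on(note, velocity, channel=0):
    """Three-byte MIDI Note On message: status byte, note number, velocity."""
    return bytes([0x90 | (channel & 0x0F), note & 0x7F, velocity & 0x7F])


def midi_note_to_hz(note, a4_hz=440.0):
    """Equal-tempered frequency of a MIDI note (note 69 is A4)."""
    return a4_hz * 2.0 ** ((note - 69) / 12)


def midi_note_to_v_per_oct(note, zero_volt_note=60):
    """Pitch CV in volts at 1 V/oct; zero_volt_note is an assumed calibration."""
    return (note - zero_volt_note) / 12


def osc_pad(raw):
    """Null-terminate and pad to a multiple of four bytes, as OSC strings require."""
    return raw + b"\x00" * (4 - len(raw) % 4)


def osc_float_message(address, value):
    """Minimal OSC message carrying one big-endian 32-bit float argument."""
    return osc_pad(address.encode("ascii")) + osc_pad(b",f") + struct.pack(">f", value)


if __name__ == "__main__":
    note = 69
    print("MIDI bytes:", midi_note_on(note, 100).hex())
    print("CV volts:  ", midi_note_to_v_per_oct(note))
    print("OSC bytes: ", osc_float_message("/synth/freq", midi_note_to_hz(note)).hex())
```

Note the difference in resolution: the MIDI message carries pitch as a 7-bit note number, while the OSC message carries an arbitrary floating-point frequency.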

Now, let’s dig a little deeper into the four categories that we touched upon earlier in the text.

Generators

As you probably already know, there are many different sound synthesis techniques—subtractive, additive, granular, frequency modulation, phase modulation, and so on. For this reason, the choice of a type of sound source plays a definitive role for both the synthesis process and the resulting sound itself. For example, in the context of additive synthesis we need to mix a number of sine waves together in various proportions to achieve a complex wave shape, while the subtractive method calls for filtering out partials from harmonically rich waveforms. Samplers, granular synthesizers, and convolution algorithms are examples of techniques that implement pre- and/or live-recorded acoustic and electronic sounds.
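The additive idea can be sketched in a few lines: sum sine partials at integer multiples of a fundamental, each with its own amplitude. The function name and the 64-sample cycle length are arbitrary choices for this illustration.

```python
import math


def additive_cycle(amplitudes, num_samples=64):
    """Build one cycle by summing sine partials.

    amplitudes[k] scales harmonic number k + 1 of the fundamental.
    """
    cycle = []
    for n in range(num_samples):
        phase = 2 * math.pi * n / num_samples
        cycle.append(sum(amp * math.sin((k + 1) * phase)
                         for k, amp in enumerate(amplitudes)))
    return cycle


# Harmonic k at amplitude 1/k approximates a sawtooth; more partials, closer match.
saw_like = additive_cycle([1 / k for k in range(1, 9)])

if __name__ == "__main__":
    print([round(v, 3) for v in saw_like[:8]])
```

A subtractive patch would work in the opposite direction, starting from a waveform that already contains these partials and filtering some of them out.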

Oscillators come in many shapes and forms, but the primary mechanics are very similar from model to model. By definition, an oscillation is a repetitive fluctuation of voltages at a specified rate. In our case, the outcome is a sound. All oscillators share similar foundational parameters.

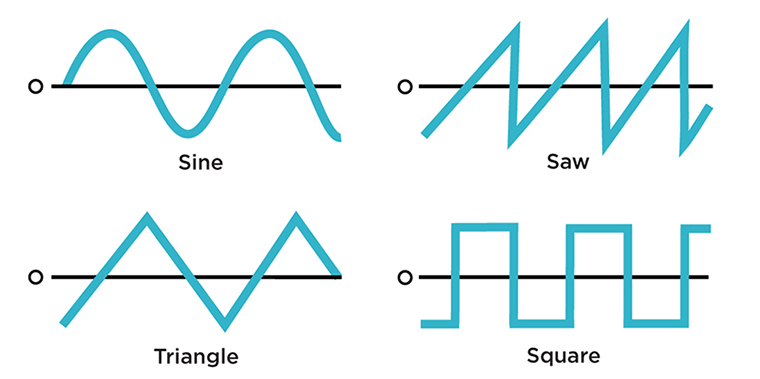

A waveform shape is usually a selectable variable that determines the shape of the oscillations and directly translates into the timbral characteristics of the sound. The most common waveshapes found in synthesizers are square, triangle, sawtooth and sine.

Technically, the total number of possible waveforms is limitless, as taking a single cycle snapshot of any audio signal and making it oscillate will deliver new timbral structures, which is what wavetable synthesis and sampling are all about.

Frequency specifies the speed of the oscillations measured in Hertz (cycles/second) and is related to the perceived pitch. Often, it is represented by two control knobs: coarse for a rough setting and fine for more detailed tuning. Occasionally an oscillator has an octave switch for narrowing the frequency band even further.
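A minimal digital model of these two parameters: each waveform is a function of phase (a position between 0 and 1 within the cycle), and the frequency sets how quickly the phase advances. The function names and the sample-rate handling are assumptions of this sketch, and the naive shapes shown here ignore aliasing, which real digital oscillators have to deal with.

```python
import math


def sine(phase):
    return math.sin(2 * math.pi * phase)


def square(phase):
    return 1.0 if phase < 0.5 else -1.0


def sawtooth(phase):
    return 2.0 * phase - 1.0


def triangle(phase):
    # Rises from -1 at phase 0 to +1 at phase 0.5, then falls back.
    return 1.0 - 4.0 * abs(phase - 0.5)


def oscillator(shape, freq_hz, sample_rate, num_samples):
    """Sample `shape` at freq_hz; the phase wraps around once per cycle."""
    return [shape((n * freq_hz / sample_rate) % 1.0) for n in range(num_samples)]


if __name__ == "__main__":
    for shape in (sine, square, sawtooth, triangle):
        print(shape.__name__, [round(v, 2) for v in oscillator(shape, 1.0, 8, 8)])
```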

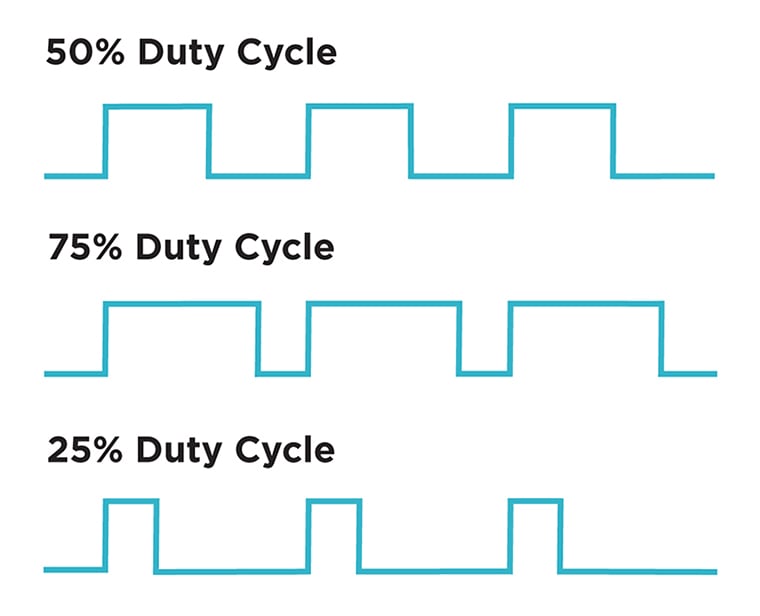

When square shapes are used as the oscillator waveforms, a parameter called duty cycle applies, which defines the ratio between the pulse width and the period of a cycle.
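Expressed as code, the duty cycle is simply the fraction of each cycle that the pulse spends in its high state. The names below are hypothetical; a duty of 0.5 gives a plain square wave.

```python
def duty_cycle(pulse_width_s, period_s):
    """Ratio between the pulse width and the period of one cycle."""
    return pulse_width_s / period_s


def pulse_wave(phase, duty=0.5):
    """Pulse wave over phase 0..1: high for the first `duty` fraction of the cycle."""
    return 1.0 if phase < duty else -1.0


if __name__ == "__main__":
    narrow = [pulse_wave(n / 8, duty=0.25) for n in range(8)]
    print(narrow)
```

Sweeping `duty` over time with a modulator gives the effect commonly called pulse width modulation.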

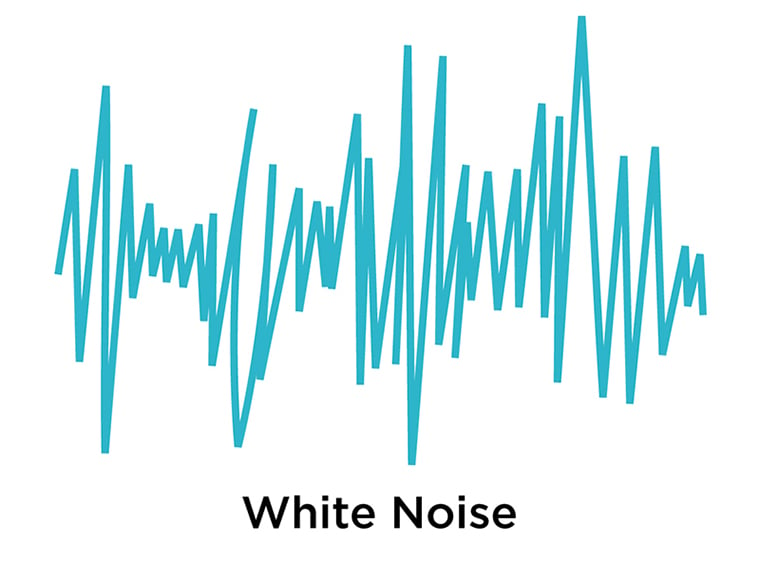

Noise can often be found as one of the selectable waveforms in an oscillator section, but frequently it is offset to a separate section in a synthesizer, and for good reasons. Structurally, noise is the antithesis of the periodic waveform and as a result is very different from an oscillator. We often use synesthetic language to describe the sonic qualities of noise circuits through color references: pink, white, gray, violet.

In synthesis we use noise in two ways: audibly, to add more natural qualities to a sound, and as a control source in combination with other circuits. Sample & holds and random voltage generators, for example, often use noise as a seed to produce non-repeating voltage fluctuations.

The last variant of a generator that we are going to look into is a set of devices that deal with the recorded audio as a source, such as samplers and granular playback engines. These can easily work with waveforms longer than a single cycle, opening up a ton of possibilities for sonic complexity. Playback can generally be variably looped or triggered as a single-shot. Sample start and end times are relevant parameters found in pretty much all related devices.

Modulators

Without modulators, synthesizers would not be even remotely as exciting as they are. This category represents the crucial control elements with which we sculpt new sounds and influence their evolution over time. Modulators can be as simple as a controller slider assigned to some specific parameter of the synth, or as complex as a multichannel sequencer that acts as something akin to the electronic version of the conductor’s baton for navigating the orchestra of circuits.

One of the most well-known modulation types is the LFO (low frequency oscillator). It is exactly what it sounds like: an oscillator with its frequency range set below or around the lower threshold of human hearing. We use LFOs to control synthesizer parameters automatically. One example is a generic vibrato effect, which is really nothing more than a shallow modulation of the oscillator’s pitch by another oscillator running at a very low frequency.
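The vibrato example can be sketched as follows: a slow sine LFO nudges the pitch of an audio-rate sine oscillator up and down by a fraction of a semitone. The parameter names, the default sample rate, and the semitone-based depth are choices made for this illustration.

```python
import math


def vibrato(carrier_hz, lfo_hz, depth_semitones, duration_s, sample_rate=8000):
    """Sine oscillator whose pitch is modulated by a sine LFO."""
    samples = []
    phase = 0.0
    for n in range(int(duration_s * sample_rate)):
        t = n / sample_rate
        lfo = math.sin(2 * math.pi * lfo_hz * t)            # swings between -1 and +1
        freq = carrier_hz * 2.0 ** (depth_semitones * lfo / 12)
        phase += freq / sample_rate                          # accumulate phase in cycles
        samples.append(math.sin(2 * math.pi * phase))
    return samples


if __name__ == "__main__":
    tone = vibrato(carrier_hz=440.0, lfo_hz=5.0, depth_semitones=0.3, duration_s=1.0)
    print(len(tone), "samples")
```

Accumulating the phase, rather than computing `sin(2πft)` directly, keeps the waveform continuous while the frequency changes.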

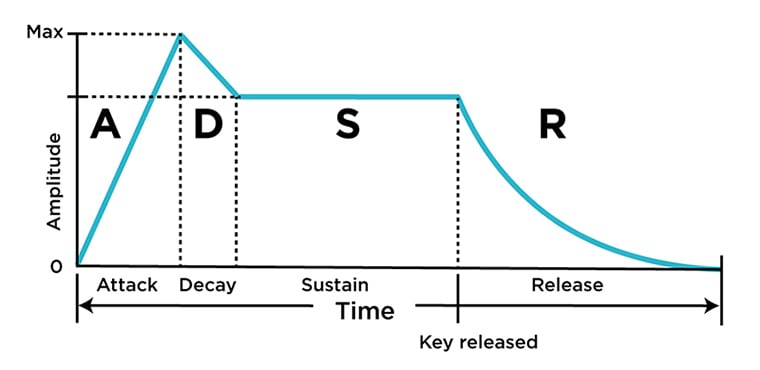

Envelope Generators (EG) define the pathway for selected synth parameters to change over time. Drones can be turned into drums, and static “lifeless” tones become animated and sonically interesting. At the most basic level, an envelope is applied to the amplitude of the sound, facilitating an executive decision on whether the result is short and percussive, or long and tonal.

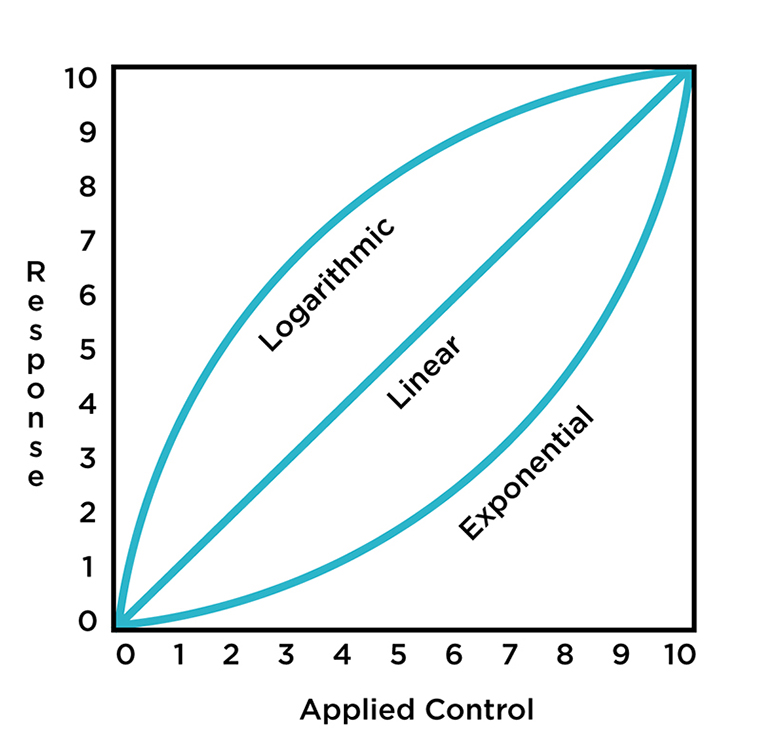

In reality, envelopes are immensely useful for all sorts of parameter control applications: an envelope is simply a trajectory for how parts of a sound unfold over time. The trajectory is further shaped by the curvature response of the envelope, which can be either linear, exponential or logarithmic.

We often need to activate envelopes with a trigger or gate, but some have an option to self-cycle, which makes them functionally similar to LFOs. Envelopes normally consist of a few segments of voltage paths or stages, which can be arranged in a glut of possible combinations—ADSR, AR, ASR, AD, AHR, and so on.

Attack specifies the time it takes the signal to go from its initial state to its maximum output level. Decay is the amount of time it takes for an envelope to fall from its peak, reached during the attack stage, to the next stage (the sustain level in an ADSR). Sustain is the level at which the sound is held until the key is released. Release is usually the final stage of the envelope and defines how long it takes for the envelope to return to its original state after the input gate returns to zero. Occasionally you will also see an additional Hold stage, which allows you to specify how long the peak is held before the envelope moves on to decay.
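The stages described above can be sketched as a simple linear ADSR. This is an illustration under stated assumptions rather than a model of any particular circuit: times are in seconds, levels run from 0 to 1, all segments are linear, and the function name and arguments are hypothetical.

```python
def adsr(attack, decay, sustain, release, gate_length, sample_rate=1000):
    """Linear ADSR envelope as a list of levels between 0 and 1.

    attack, decay, release, and gate_length are times in seconds;
    sustain is a level. The release starts from whatever level the
    envelope has reached when the gate goes low.
    """
    envelope = []
    level = 0.0
    for n in range(int(gate_length * sample_rate)):       # gate is high
        t = n / sample_rate
        if t < attack:
            level = t / attack
        elif t < attack + decay:
            level = 1.0 - (1.0 - sustain) * (t - attack) / decay
        else:
            level = sustain
        envelope.append(level)
    release_samples = int(release * sample_rate)
    start_level = level
    for n in range(release_samples):                       # gate is low
        envelope.append(start_level * (1.0 - (n + 1) / release_samples))
    return envelope


if __name__ == "__main__":
    env = adsr(attack=0.125, decay=0.125, sustain=0.5, release=0.25, gate_length=0.5)
    print(len(env), max(env), env[-1])
```

Setting the sustain level to zero and keeping the gate short turns the same function into a percussive attack-decay shape.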

Random voltage generators are units that harness unpredictability and chance to facilitate music creation and sound design on several different levels. As the name suggests, they generate indiscriminate voltages. The possible usage can span from very mild, such as “livening up” sonic textures a bit, to something much more radical, such as controlling and interfering in the process of composition itself.

The most well-known type of random voltage generator is the sample & hold (S&H). S&H circuits are designed to receive an external signal (often noise) at their input and sample its voltage at a specified clock rate, holding each sampled value until the next clock pulse. The resulting stepped output can be further smoothed out with a slew limiter if gradual value transitions are preferable.
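A minimal sketch of both circuits, assuming the clock is expressed as a period in samples and the slew limiter simply caps the change per sample. The function names, the seeded noise source, and the +/-5 V range are illustrative choices.

```python
import random


def sample_and_hold(source, clock_period):
    """Capture the input on every clock tick and hold it until the next one."""
    held = 0.0
    output = []
    for n, value in enumerate(source):
        if n % clock_period == 0:          # clock tick: take a new sample
            held = value
        output.append(held)
    return output


def slew_limit(signal, max_step):
    """Limit how far the output may move per sample, smoothing the steps."""
    output = []
    current = signal[0] if signal else 0.0
    for target in signal:
        delta = max(-max_step, min(max_step, target - current))
        current += delta
        output.append(current)
    return output


rng = random.Random(1)                                 # seeded for repeatability
noise = [rng.uniform(-5.0, 5.0) for _ in range(32)]    # stand-in for a noise source
stepped = sample_and_hold(noise, clock_period=8)
smoothed = slew_limit(stepped, max_step=0.5)

if __name__ == "__main__":
    print([round(v, 2) for v in stepped])
    print([round(v, 2) for v in smoothed])
```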

Unlike acoustic instruments, synthesizers have an incredibly wide array of ways to control them. The most conventional controller type is a piano-style keyboard. To this day, the black-and-white key combo remains perhaps the most utilized way of interacting with the instrument, which makes sense given that this familiar interface is exactly the thing that was responsible for the popularization of the synthesizers in the first place—after all, we might not be where we are today without the Minimoog or ARP Odyssey.

A sequencer is another common tool for arranging electronic sounds temporally. In contrast to the keyboard, we usually treat sequencers as quasi command centers, where we structure musical phrases and compositions and let the machine perform them. Keyboards and sequencers are often used in tandem.

There are, of course, many other ways to interact with a synthesizer. Capacitive touch plate surfaces, joysticks, various digital and analog sensors, and, conceivably, traditional instruments such as guitars are all potentially powerful control sources. These are still treated as rather experimental, but they are incredibly fun to play with and always return results that would be difficult, if not impossible, to achieve otherwise.

Processors

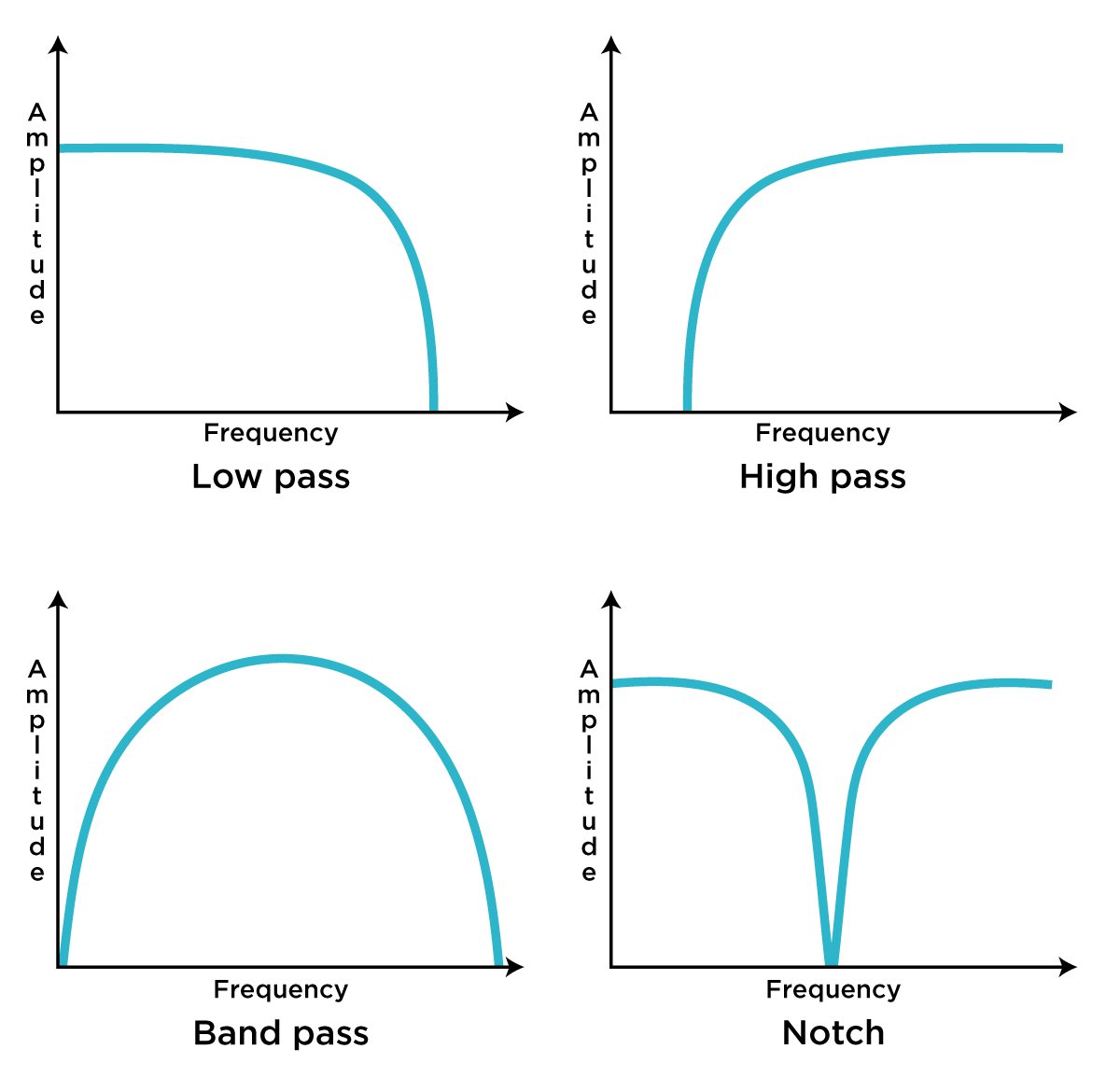

The palette of possibilities for modification and alteration of signals is humongous. Filters are probably the first category that comes to mind in relation to synthesizers. A close relative of the equalizer, a filter has the core purpose of altering the harmonic content of a sound wave, whether that means attenuating certain partials, boosting a narrow band of frequencies, or entirely removing a chunk of spectral content. It is important to mention that the creative application of filters extends way beyond their utilitarian functionality, and we will explore it in future episodes.

There are four common filter types. A low-pass filter attenuates frequencies above its cutoff frequency. A high-pass filter does the opposite, preserving high-frequency spectral content and removing the “lows”. A bandpass filter passes a band of frequencies around a center frequency, sometimes with a variable bandwidth, and attenuates everything outside it. Finally, a notch filter allows all frequencies to pass except for a specified narrow band.
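The low-pass and high-pass behaviors can be illustrated with the simplest possible digital filter, a one-pole smoother. This is a stand-in chosen for brevity, not the design used in any particular synthesizer: hardware filters are usually steeper and add resonance. The function names are hypothetical.

```python
import math


def one_pole_lowpass(signal, cutoff_hz, sample_rate):
    """Gentle low-pass: the output follows the input with a lag set by the cutoff."""
    alpha = 1.0 - math.exp(-2 * math.pi * cutoff_hz / sample_rate)
    y = 0.0
    output = []
    for x in signal:
        y += alpha * (x - y)
        output.append(y)
    return output


def one_pole_highpass(signal, cutoff_hz, sample_rate):
    """Complementary high-pass: whatever the low-pass removed from the input."""
    low = one_pole_lowpass(signal, cutoff_hz, sample_rate)
    return [x - l for x, l in zip(signal, low)]


if __name__ == "__main__":
    fast = [1.0 if n % 2 == 0 else -1.0 for n in range(200)]   # highest possible frequency
    slow = [1.0] * 200                                          # 0 Hz (constant)
    print("low-pass, fast input:", round(one_pole_lowpass(fast, 100, 8000)[-1], 3))
    print("low-pass, slow input:", round(one_pole_lowpass(slow, 100, 8000)[-1], 3))
```

Chaining a high-pass into a low-pass with a higher cutoff gives a crude bandpass, and subtracting that bandpass from the original signal gives a crude notch.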

Wavefolding is another tool that can alter the spectral content of a wave. It is usually attributed to the West Coast synthesis techniques, first appearing commercially in instruments from Buchla & Associates and Serge Modular Music Systems. Unlike filters, which usually need harmonically rich sounds at the base, wavefolders shine when applied to simple sounds, such as pure sine waves. Rather than removing partials from a signal, as filters do, or flattening its peaks, as a clipper would, a wavefolder inverts any part of the wave that exceeds a threshold, bending the amplitude peaks back into a series of folds. This results in harmonically complex, sometimes quite aggressive timbres.
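An idealized fold can be written as a reflection: whenever the signal exceeds the threshold, the excess is mirrored back inside it. This is a mathematical sketch with hypothetical names; analog wavefolder circuits have softer, less symmetrical responses.

```python
import math


def fold(sample, threshold=1.0):
    """Reflect the sample back inside +/-threshold until it fits."""
    while abs(sample) > threshold:
        if sample > threshold:
            sample = 2 * threshold - sample
        else:
            sample = -2 * threshold - sample
    return sample


def folded_sine(gain, num_samples=64):
    """One cycle of a sine wave driven into the folder by `gain`."""
    return [fold(gain * math.sin(2 * math.pi * n / num_samples))
            for n in range(num_samples)]


if __name__ == "__main__":
    print([round(v, 2) for v in folded_sine(gain=3.0)[:17]])
```

With a gain of 1 or less the sine passes through unchanged; raising the gain adds more folds per cycle and therefore more harmonics.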

Time-based effects are a group of processors that includes delays, reverbs, choruses, phasers, pitch-shifters, harmonizers, and more. By nature, these processors manipulate the sound in its relation to time and space. Sounds can change beyond recognition when passed through one of these processors. Completely changing the source sound is not the only application of these effects, however: they often lend themselves to implying certain spatial characteristics of a sound. The intricacies and the full scope of time-based signal processors will be explored in depth in future episodes.
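The simplest member of this family, a delay with feedback, can be sketched with a circular buffer. The parameter names (`feedback`, `mix`) mirror common front-panel labels but are assumptions of this example.

```python
def feedback_delay(signal, delay_samples, feedback=0.5, mix=0.5):
    """Echo effect: each repeat is fed back into the delay line at reduced level."""
    buffer = [0.0] * delay_samples          # circular delay line
    output = []
    for n, dry in enumerate(signal):
        index = n % delay_samples
        delayed = buffer[index]             # what entered the line delay_samples ago
        buffer[index] = dry + feedback * delayed
        output.append((1.0 - mix) * dry + mix * delayed)
    return output


if __name__ == "__main__":
    impulse = [1.0] + [0.0] * 9
    print(feedback_delay(impulse, delay_samples=3))
```

Feeding in a single impulse shows the behavior directly: the output contains the original click followed by echoes every `delay_samples` steps, each half as loud as the one before.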

Utilities

Utilities are perhaps the most overlooked type of module, but without them, most patches would simply fall apart. They include voltage controlled amplifiers (VCAs), mixers, signal attenuators and polarizers, switches and routers, logic circuits, and quantizers. We need utilities to “glue” our patches together.

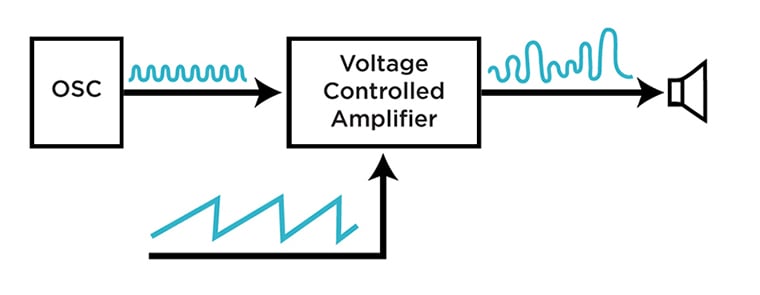

For example, VCAs let us control the amplitude of signals using voltage. This applies to both audio signals and control voltages. Attenuators and polarizers help us dial in the amount and, respectively, the direction of modulation. Mixers are used to combine multiple signals. Logic operations are useful when we want to program specific behavior within a patch, e.g. deriving the minimum or maximum voltage out of several sources, or triggering an event when a signal reaches a certain threshold. Switches and routers help us direct signals to different places at the turn of a knob (or push of a button). With quantizers, we can turn random voltages and other signal sources into melodic sequences.
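Two of these utilities are easy to express in code. The sketch below assumes 1 V/oct pitch scaling, so one semitone equals 1/12 V; the function names and the choice of a major scale are hypothetical.

```python
import math

MAJOR_SCALE = (0, 2, 4, 5, 7, 9, 11)   # semitone offsets within one octave


def attenuvert(voltage, amount):
    """Combined attenuator and polarizer: amount runs from -1 (inverted) to +1."""
    amount = max(-1.0, min(1.0, amount))
    return voltage * amount


def quantize(volts, scale=MAJOR_SCALE):
    """Snap a 1 V/oct control voltage to the nearest note of the scale."""
    semitones = volts * 12
    octave = math.floor(semitones / 12)
    # Look one octave either side so notes near an octave boundary are considered.
    candidates = [12 * (octave + shift) + step
                  for shift in (-1, 0, 1) for step in scale]
    nearest = min(candidates, key=lambda c: abs(c - semitones))
    return nearest / 12


if __name__ == "__main__":
    for raw in (0.03, 0.26, 0.61, 0.97):
        print(raw, "->", round(quantize(raw), 4))
```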

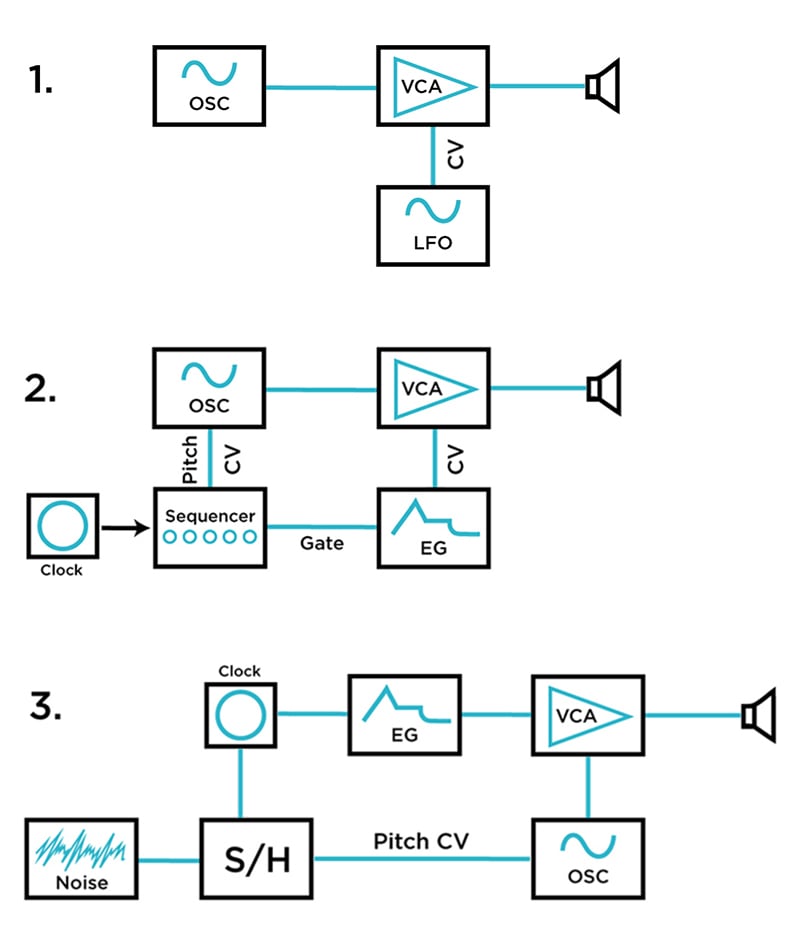

Below are examples of basic synthesizer patches

In the first patch, a carrier oscillator is sent into a voltage controlled amplifier input. The second oscillator (the modulator), running at a low frequency, controls the gain of the VCA. As a result, we hear a tone whose loudness rises and falls periodically (a tremolo). At higher modulator frequencies, the timbre of the carrier is drastically transformed. This technique is known as amplitude modulation (AM).
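In code, the first patch reduces to a multiplication: the VCA scales the carrier by a gain that the modulator sweeps between 0 and 1. Sine waves for both oscillators, the unipolar scaling of the modulator, and all names below are assumptions of this sketch.

```python
import math


def amplitude_modulation(carrier_hz, modulator_hz, duration_s, sample_rate=8000):
    """Carrier oscillator -> VCA, with the VCA gain driven by a second oscillator."""
    output = []
    for n in range(int(duration_s * sample_rate)):
        t = n / sample_rate
        carrier = math.sin(2 * math.pi * carrier_hz * t)
        # Shift the bipolar modulator into a unipolar 0..1 gain for the VCA.
        gain = 0.5 * (1.0 + math.sin(2 * math.pi * modulator_hz * t))
        output.append(carrier * gain)
    return output


if __name__ == "__main__":
    tremolo = amplitude_modulation(carrier_hz=440.0, modulator_hz=4.0, duration_s=1.0)
    harsh = amplitude_modulation(carrier_hz=440.0, modulator_hz=300.0, duration_s=1.0)
    print(len(tremolo), len(harsh))
```

With a 4 Hz modulator the result is heard as a pulsing tone; at 300 Hz the modulation is too fast to follow and is heard instead as added sidebands around the carrier.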

The second patch represents one of the most common ways of interconnecting synthesizer modules. As in the first example, the oscillator is routed into a VCA, which in this case is controlled by the envelope generator. The sequencer simultaneously sends a gate signal to activate the EG and a CV signal to control the frequency parameter of the oscillator. The sequencer speed is determined by the master clock unit. Consequently, we get a system with a shapeable loudness contour, capable of generating melodies. The sequencer can easily be replaced by the controller keyboard if a more manual approach to generating sound is preferred.
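The signal flow of the second patch can be sketched as a loop over sequencer steps. Everything specific here is an assumption for illustration: the step length stands in for the master clock, pitch CV is taken as 1 V/oct above an arbitrary `base_hz`, and the envelope is a bare gate-then-linear-release shape rather than a full ADSR.

```python
import math


def render_sequence(pitch_cvs, step_s=0.25, gate_ratio=0.5, release_s=0.1,
                    base_hz=261.63, sample_rate=8000):
    """Sequencer -> oscillator pitch and envelope gate -> VCA."""
    output = []
    phase = 0.0
    step_samples = int(step_s * sample_rate)           # set by the master clock
    gate_samples = int(step_samples * gate_ratio)      # how long the gate stays high
    release_samples = release_s * sample_rate
    for cv in pitch_cvs:                               # one sequencer step per CV value
        freq = base_hz * 2.0 ** cv                     # CV controls oscillator frequency
        for n in range(step_samples):
            if n < gate_samples:
                level = 1.0                            # gate high: envelope fully open
            else:
                level = max(0.0, 1.0 - (n - gate_samples) / release_samples)
            phase += freq / sample_rate
            output.append(level * math.sin(2 * math.pi * phase))   # VCA
    return output


if __name__ == "__main__":
    melody = [0.0, 4 / 12, 7 / 12, 1.0]                # root, third, fifth, octave
    audio = render_sequence(melody)
    print(len(audio), "samples")
```

Replacing the `pitch_cvs` list with values arriving from a keyboard would give the manual variant mentioned above.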

Lastly, we have a random melody generator with a sample & hold module taking the central position in the patch. A master clock both triggers an envelope generator connected to the VCA and sets the sampling interval of the S&H. White noise is fed into the input of the sample & hold module, acting as a source of random values. The resulting stepped random voltage fluctuations control the frequency of the oscillator. The output of the oscillator is sent into the voltage controlled amplifier. The resulting sound will be familiar to anybody who has ever watched an old sci-fi film.

Interchangeability of signals

An important fact to note is that, while MIDI and OSC protocols offer a great level of parameter control and, by their digital nature, can transmit data wirelessly, their signal types are mutually exclusive and can’t be directly substituted for one another. There is a clear line between the sound itself and the note on/off and CC data. In the realm of analog CV/gate, this is not the case: all signals (the output of an oscillator, the output of the envelope that shapes its amplitude, and the trigger that activates the envelope) are equally interchangeable. This facilitates a massive playground for interactivity between different circuits and opens up their capacity for mutations from one category to another. This is what makes modular synthesis stand out as the most versatile and flexible way to engage with sound synthesis.

As you can see, the field that we’ve approached is remarkably wide. Hopefully, this article provided enough information to inspire you to dive deeper. The depths of sonic worlds are beautiful and we are excited to share our knowledge, experience and passion for the subject. Stay tuned for more!