Musical notation is a remarkable invention. Through music notation we can take complex and ephemeral patterns of sound in time and preserve them in a repeatable way. It’s amazing that, through music notation, we know what the music of the medieval ars antiqua style sounded like over 800 years ago, even though virtually all the composers’ biographical details have been lost.

But not every style of music is notated, and some kinds of music seem easier to notate than others. It’s a question that often arises on modular synthesizer forums and user groups: How could modular synthesizer music be notated? Is it possible or even desirable to attempt to notate music for modular instruments?

What’s So Special About Music Notation Anyway?

One special characteristic of music notation is “work preservation.” Work preservation is the ability notation has to “press save” on a particular musical work.

In classical music, notated scores are assumed to have a very high degree of work preservation. If you hear Richard Wagner’s “Ride of the Valkyries” at the opera house, it’s assumed to be the very same “Ride of the Valkyries” that you hear in Francis Ford Coppola's Apocalypse Now. The context is completely different. The performers are different. But a classical musician would think of these as the same work. The notation here is the work in a sense, and the musicians’ job is to reproduce the score as faithfully as possible.

On the other hand, many styles of music assume notation has little or no power of work preservation. Keith Jarrett’s improvised Köln Concert was later published as an authorized transcription. Other artists have since recorded the “Köln Concert.” However, these recordings are not Keith Jarrett’s Köln Concert, in a similar way to how jazz standards are covered rather than performed. In jazz, as in many other styles, the essence of a work has as much to do with the artist’s particular performance as it does with the notation, if any.

American philosopher (and part-time art dealer) Nelson Goodman was one of the first philosophers to take music notation seriously, at a time when language had already been a favorite subject of academic philosophy for decades. In Languages of Art (1968) Goodman described a theory of musical notation that aims to show how well-constructed notation systems can distinguish valid performances of a work from garbled ones. For Goodman, notational systems must fulfill five criteria. These criteria include things like disjointness (a symbol refers to one and only one type of thing) and finite differentiation (characters are distinct from one another, and it’s possible to tell to which character, if any, a mark refers).

According to these standards, work preservation is ensured when a work is notated in compliance with these criteria and when the performance of the work accurately reproduces all the things described by the notation. That’s a high bar to apply to any musical work! And while Goodman assumes too much rigidity in what constitutes proper identification of a musical work, perhaps his greatest contribution to music notation has actually been to contextualize notation in general. Through his study of notation systems, Goodman pointed out how music notation is just one of many notational systems, each of which is designed to highlight different types of features and support different types of usage. We can think of music notation alongside, for example:

- Tablature

- Dance notation (Labanotation, Benesh Movement Notation, etc.)

- Chess notation

- Scientific charts and graphs

- Maps and signs

- And even pictographic assembly instructions for your favorite Swedish multinational furniture conglomerate

Each of these systems of notation uses different strategies to preserve some kinds of information better than others. If we think of these notations in terms of music, we might think of them as prioritizing different strategies for work preservation.

Why Is It Hard to Notate for Modular Synthesizers?

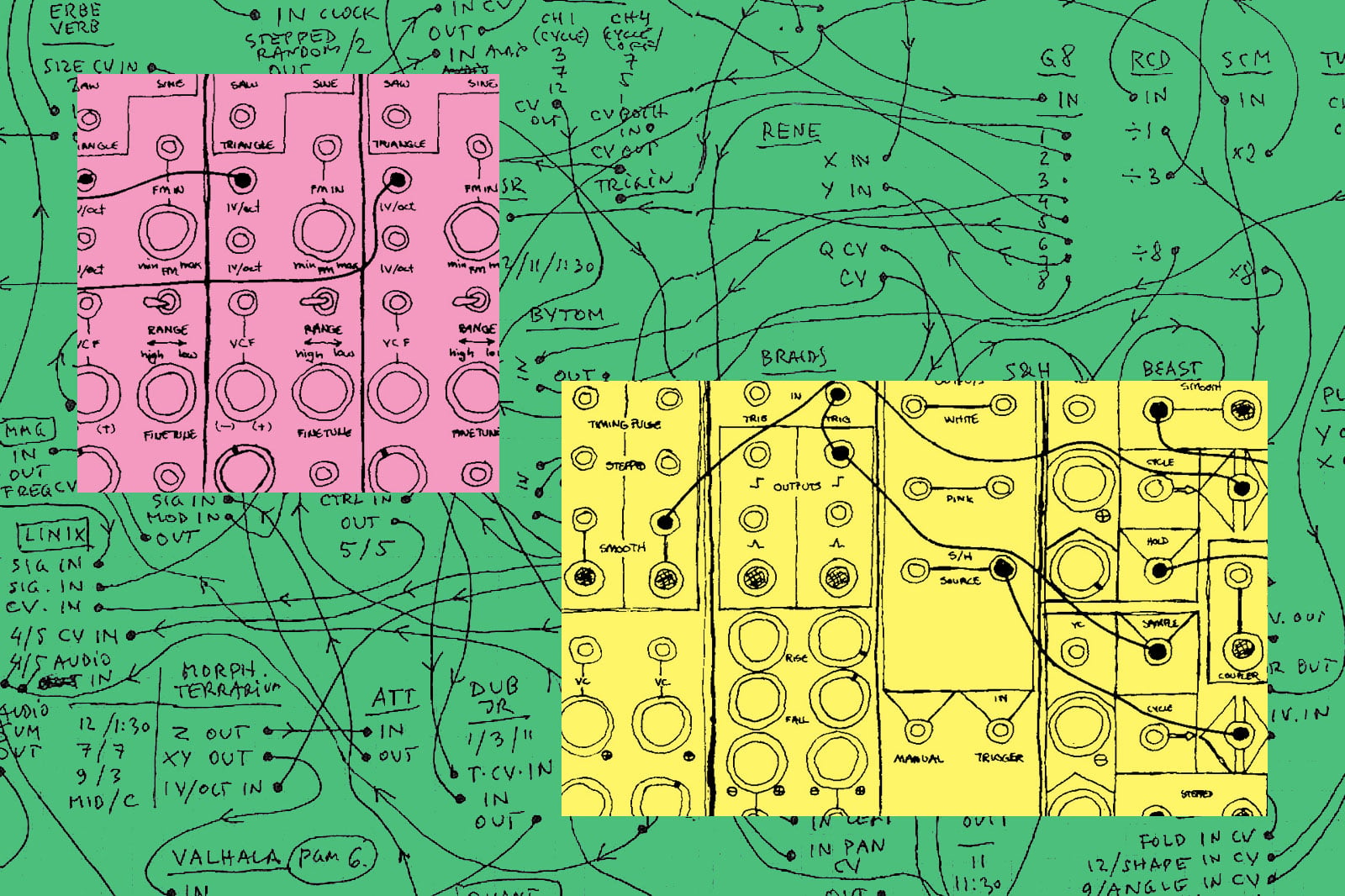

If you’ve ever played a modular synthesizer, the answer to why it’s hard to notate music for modular synthesizers might seem obvious. First of all, there’s just a lot of stuff going on with a modular system! You’ve got dozens of knobs, patch cords, buttons, and switches, and if you’re using digital modules, there will likely also be menus, presets, and internal settings. That’s a lot to write down! Additionally, some modules intentionally use random or chaotic voltage states (or their pseudo-random digital equivalents), which might not be reproducible at all.

In extremely small modular patches, with only a few simple circuits, replication of a patch or score is sometimes theoretically possible. But as the complexity of the patch increases, it becomes increasingly difficult to replicate a prior patch in a way that sounds characteristic of the original. Even with excellent temperature compensation, analog oscillators respond differently at different temperatures. Dials are set to minutely different levels. And these changes propagate and amplify as voltages interact, leading to different sounds each time a patch is recreated.

Modular notation, if it is to have any relevance, must take into account both the complexity and the inherent ephemerality of the voltage states in the instrument.

[Figure 1 - Notes for a modular synthesizer patch, credit Piotr Szyhalski / Labor Camp]

Beyond simple MIDI piano rolls, familiar forms of music notation often just don’t seem well-suited to electronic music. There’s a reason for that. What we think of as classical (or “common practice”) musical notation really took off in seventeenth-century Europe right alongside the development of keyboard instruments. Instruments like the piano, pipe organ, and harpsichord had a big influence on early notation. The five lines and four spaces of the musical staff work really well for notating scales tuned to 12-tone equal temperament (12-TET). The staff works less well for notating instruments that can glide between pitches and modulate their tone (string instruments, the human voice, etc.). And it works even less well when we start introducing timbre (the harmonic spectrum or “texture” of a sound) as an important variable.

While staff notation is the most familiar form of music notation for many people, the original purpose of this notation system, and the aspects of sound it was designed to prioritize, often feel quite distant from the experience of modulating voltage within an electronic instrument. But what if we take our inspiration from Nelson Goodman and look at the wider world of notations?

Notating Beyond Five Lines

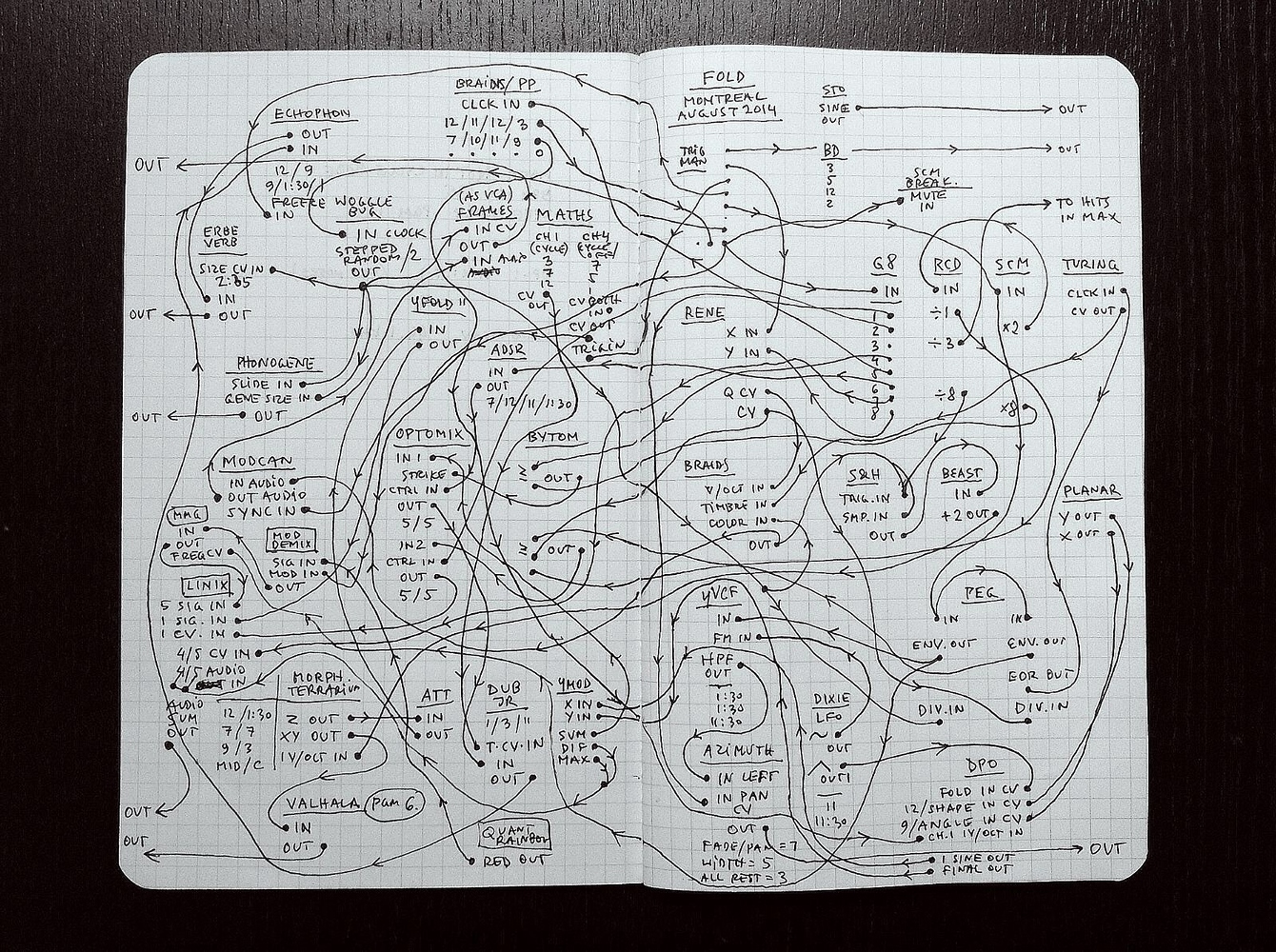

In the 20th century, composers started to stretch music notation to its absolute limits. Notating percussion, sound effects, and “extended techniques,” where musicians play their instruments in unusual ways, required the invention of more and more complex custom symbols. Some experimental composers started to break away from common-practice notation entirely and invent their own systems, including so-called “graphic notation,” which uses invented pictograms to represent some aspect of the performance, not necessarily symbolically. Some scores by, for example, John Cage or Cornelius Cardew, look more like star maps or alchemical symbols than musical notation. In the 21st century, composers like Aaron Cassidy and Timothy McCormack have experimented with invented “tablature” notations which show what movements and actions the performer should make rather than what the resulting music should sound like.

[Figure 2 - Page from the author’s score for raycaster (2019) showing electronic sounds, invented tablature-style notation for prepared electric guitar, prepared piano, et al., and patch-sheet-style notation for guitar pedals.]

The attempt to notate electronic music started more or less as soon as there was such a thing as electronic music. Romanian-Hungarian-Austrian composer György Ligeti approved the creation of a “listening score” for his 1958 electronic composition Artikulation. The score, created by graphic designer Rainer Wehinger, uses a friendly collection of colorful symbols to represent the “shape” of bleeps and bloops and even white noise.

Taking a vastly different approach, American composer John Cage published a 193-page score for Williams Mix (1951–1953) which simply consists of graphics showing how and where to cut and splice eight tracks of 1/4-inch magnetic tape. The composer described the score as being like a “dressmaker's pattern.”

Notating Wiggles

Patchable synthesizers, with their intricate control interfaces, have inspired efforts to devise novel notation systems since the early days of commercial synthesis. The pioneering VCS3, introduced by British company EMS in 1969, was a groundbreaking instrument in this regard. An early portable, patchable synthesizer, it quickly established a significant presence in the music industry, embraced by prominent bands and artists including Pink Floyd, King Crimson, and Brian Eno. Unlike later EMS offerings such as the Synthi AKS, the original VCS3 did not include a keyboard. Instead, it relied on an iconic routing matrix as its primary method of control.

To help users record and recreate their patches, the VCS3 came with a patch sheet, a.k.a. "dope sheet." This was essentially a graphic of the synthesizer's faceplate and matrix on which users could note down their settings. Dial positions could be sketched directly onto the sheet, and the grid layout of the routing matrix made it quick to mark where each pin was placed. This gave the VCS3 a distinct advantage for preserving settings over instruments that relied on patch cables, such as those made by Moog, and made its patch sheets comparatively easy to fill in and read.
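To see why a pin matrix is so easy to write down, it helps to notice that each pin is just a pair: one source row and one destination column. The short Python sketch below is a hypothetical illustration of that idea, not a model of the real instrument. The row and column names are simplified placeholders rather than the VCS3's actual panel legends, and `render_patch_sheet` is a name invented for this example.

```python
# Hypothetical, simplified labels; these are NOT the real VCS3 matrix legends.
SOURCES = ["osc1", "osc2", "noise", "filter_out", "envelope"]
DESTINATIONS = ["output", "filter_in", "osc1_freq", "filter_freq"]


def render_patch_sheet(pins):
    """Return a text grid with 'X' wherever a pin joins a source row to a destination column."""
    unknown = [(s, d) for s, d in pins if s not in SOURCES or d not in DESTINATIONS]
    if unknown:
        raise ValueError(f"unknown matrix position(s): {unknown}")
    width = max(len(name) for name in SOURCES)
    lines = []
    for src in SOURCES:
        # One row per source; columns follow the order of DESTINATIONS.
        cells = ["X" if (src, dst) in pins else "." for dst in DESTINATIONS]
        lines.append(f"{src:<{width}} " + " ".join(cells))
    return "\n".join(lines)


# A whole patch is just a set of (source, destination) pin positions.
patch = {("osc1", "filter_in"), ("filter_out", "output"), ("envelope", "filter_freq")}
print(render_patch_sheet(patch))
```

Three coordinate pairs are enough to describe the routing here, which is roughly what a filled-in matrix on a paper patch sheet records. Knob positions would still need to be noted separately.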

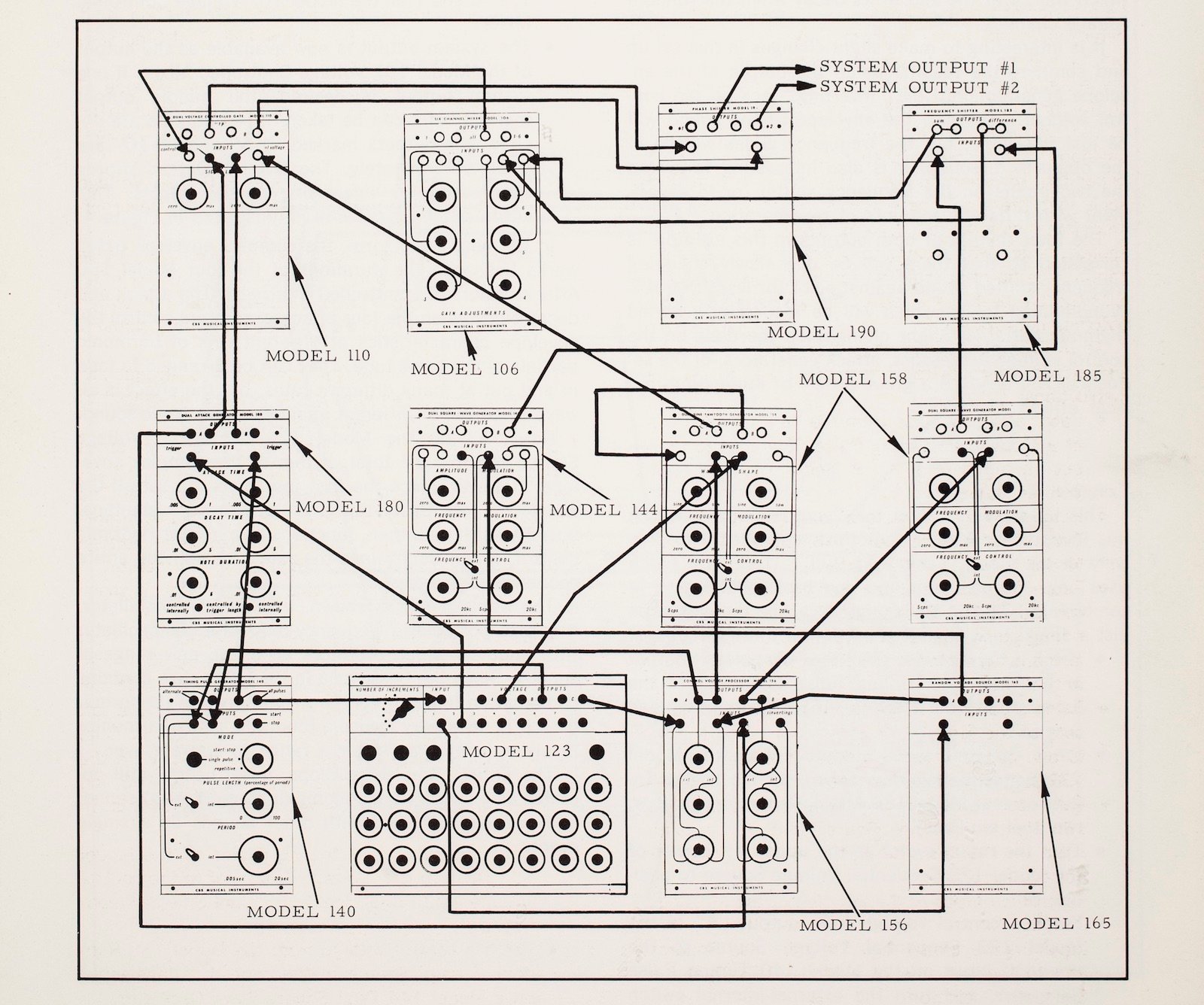

[Figure 3 - a complex patch diagram from the Buchla 100 user manual.]

American synthesizer manufacturers, including Moog and Buchla, embraced variations of the patch sheet system as well, incorporating indications for the positioning of patch cords. For instance, the booklet accompanying the Buchla 100 Series synthesizer modules featured two large, A3-sized patch sheets for the user to copy and use. Additionally, the comprehensive manual included with the Buchla system contained an array of detailed patch sheets pre-marked with patch indications. These sheets provided intricate configurations such as a patch showcasing a complex envelope, an innovation credited to composer Morton Subotnick.

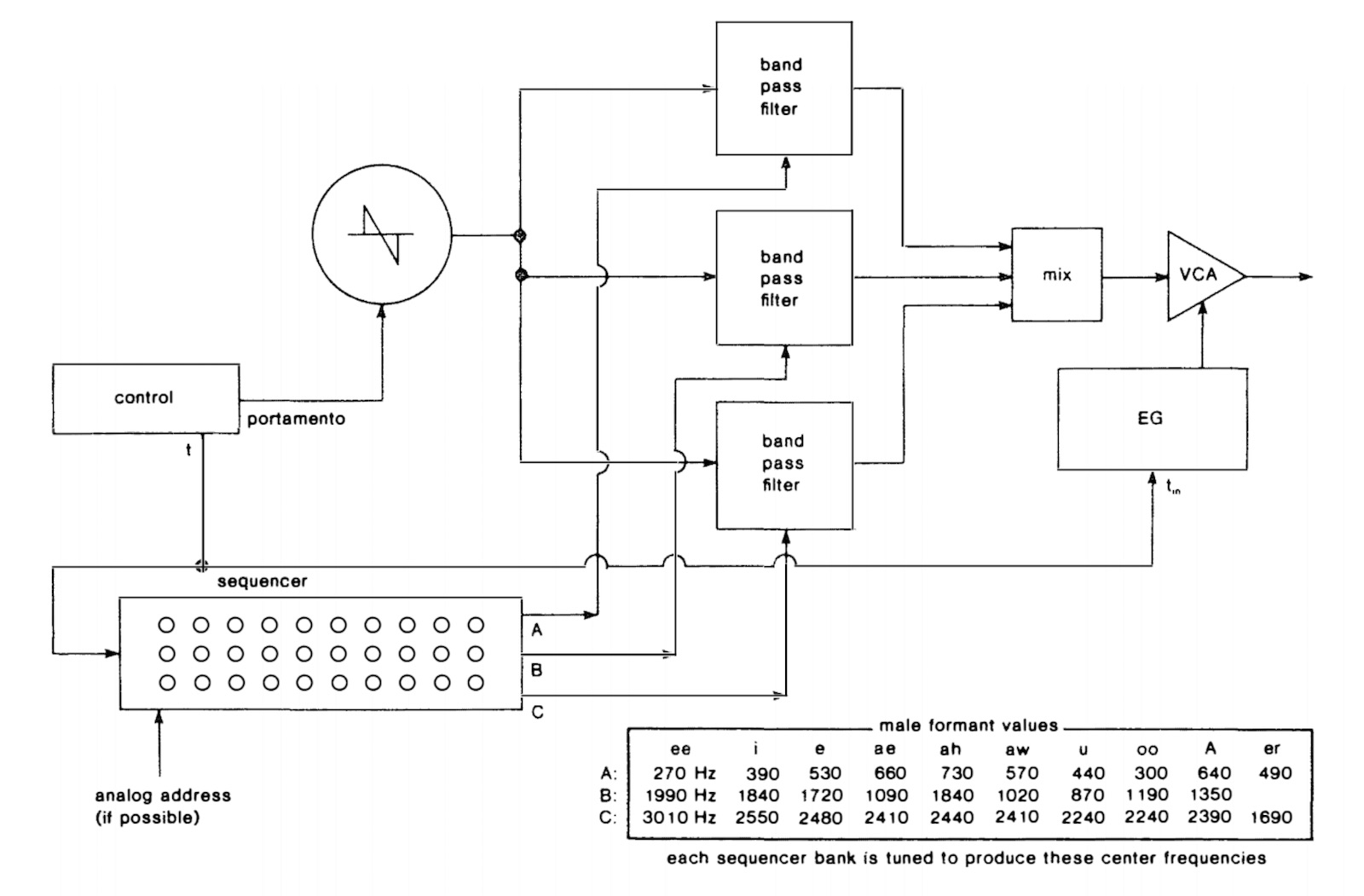

While patch sheets serve as an effective tool to notate the configuration of a single modular system, there arose a need for a notation system that could document the structure of patches that could be built on multiple, similarly equipped modular setups. The solution came in the form of flow-chart-like graphics representing the basic building blocks of synthesis, an approach later dubbed “block schematics” by Rob Hordijk. Each block represents a basic function of a signal path (a filter, mixer, LFO, etc.) together with some simple information about that function. These symbolic blocks are connected with lines to show the flow of audio or voltage through the system. The blocks can further be annotated with text, staff notation, or waveform graphics. The system can easily be expanded to also show external input sources or new types of circuits.

This notation style was widely utilized for patch notation in Allen Strange's influential 1972 book, Electronic Music: Systems, Techniques, and Controls. By getting away from specific modular panel designs and documenting more transferable concepts such as oscillator pitch, filter cutoff, and level of attenuation in relation to each other, block schematics enhance the versatility and communicability of modular synthesizer music.

[Figure 4 - a patch diagram from Allen Strange's Electronic Music: Systems, Techniques, and Controls]
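Because a block schematic is essentially a set of labeled blocks joined by directed lines, it maps naturally onto a small graph data structure. The following Python sketch is one hypothetical way to encode such a diagram. The class names (`Block`, `Schematic`), the annotation keys, and the example patch are all assumptions made for illustration and do not come from Strange's book or any existing tool.

```python
from dataclasses import dataclass, field


@dataclass
class Block:
    """One functional block, e.g. an oscillator or a filter."""
    name: str
    kind: str
    notes: dict = field(default_factory=dict)  # free-form annotations


@dataclass
class Schematic:
    """A patch described as blocks plus directed connections between them."""
    blocks: dict = field(default_factory=dict)
    connections: list = field(default_factory=list)  # (source, destination, signal type)

    def add(self, block):
        self.blocks[block.name] = block

    def connect(self, src, dst, signal="audio"):
        # Refuse connections to blocks that have not been added yet.
        for name in (src, dst):
            if name not in self.blocks:
                raise KeyError(f"no block named {name!r}")
        self.connections.append((src, dst, signal))

    def describe(self):
        """List every connection as one line of text."""
        return [f"{s} --{sig}--> {d}" for s, d, sig in self.connections]


# Hypothetical example patch: an oscillator through a filter swept by an LFO.
patch = Schematic()
patch.add(Block("vco", "oscillator", {"waveform": "saw"}))
patch.add(Block("lfo", "lfo", {"rate": "slow"}))
patch.add(Block("vcf", "filter", {"mode": "low-pass"}))
patch.add(Block("out", "output"))

patch.connect("vco", "vcf")               # audio path
patch.connect("lfo", "vcf", signal="cv")  # control voltage modulating the filter
patch.connect("vcf", "out")

for line in patch.describe():
    print(line)
```

Nothing in this description refers to a particular manufacturer's panel, which is the portability that block schematics aim for. Like the paper diagrams, though, it records only the configuration and says nothing about how the patch is played over time.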

A common characteristic of both patch sheets and block schematics is their emphasis on preserving the configuration of the synthesizer patch rather than documenting the specific performance techniques that unfold over time. We might have a good understanding of how to set up a modular system after viewing a fully documented patch layout, but we don’t have any idea how the performer might turn the knobs or push the sliders during a performance. We don’t get a sense for how tactile interfaces like joysticks, capacitive touch plates, or other sensors might be used to shape the composition over time. A patch sheet for Pink Floyd’s “On the Run” would tell you a lot about how the Synthi AKS was set up for the track, but it wouldn’t include enough information to get close to recreating the track or even just recreating the synth part of the track. This is a major difference from common-practice music notation.

Indeed, some modular artists view the patch itself as the artwork, a perspective that offers a distinctive approach to work preservation. This concept has grown in popularity in tandem with the rising interest in generative and algorithmic art. A radical embodiment of this "process as work" notion is David Chesworth's 1978 album, The Unattended Serge. The album is a collection of excerpts from a 24-hour-long recording of a patch on the Serge synthesizer at La Trobe University in Melbourne, which was left to run without intervention and was later trimmed down to fit the duration of an album. This approach presents the continuous, unattended operation of the synthesizer as the essence of the creative work itself, thereby extending the boundaries of conventional notions of work preservation.

Photography has also emerged as a popular method of notation. In earlier decades, it was common for artists to use a Polaroid image as a quick visual reference of their patched synthesizer. Recently, this practice has evolved to include video documentation, often accompanied by explanatory notes or discussion of the patch. Despite its convenience for swift documentation, video as a form of notation has its limitations. For instance, it can be challenging to discern every detail of a patch amidst a tangle of cords. Additionally, using a video as a performance reference can be problematic, and videos might lack specific indicators to highlight key characteristics of a composition crucial for its accurate reproduction.

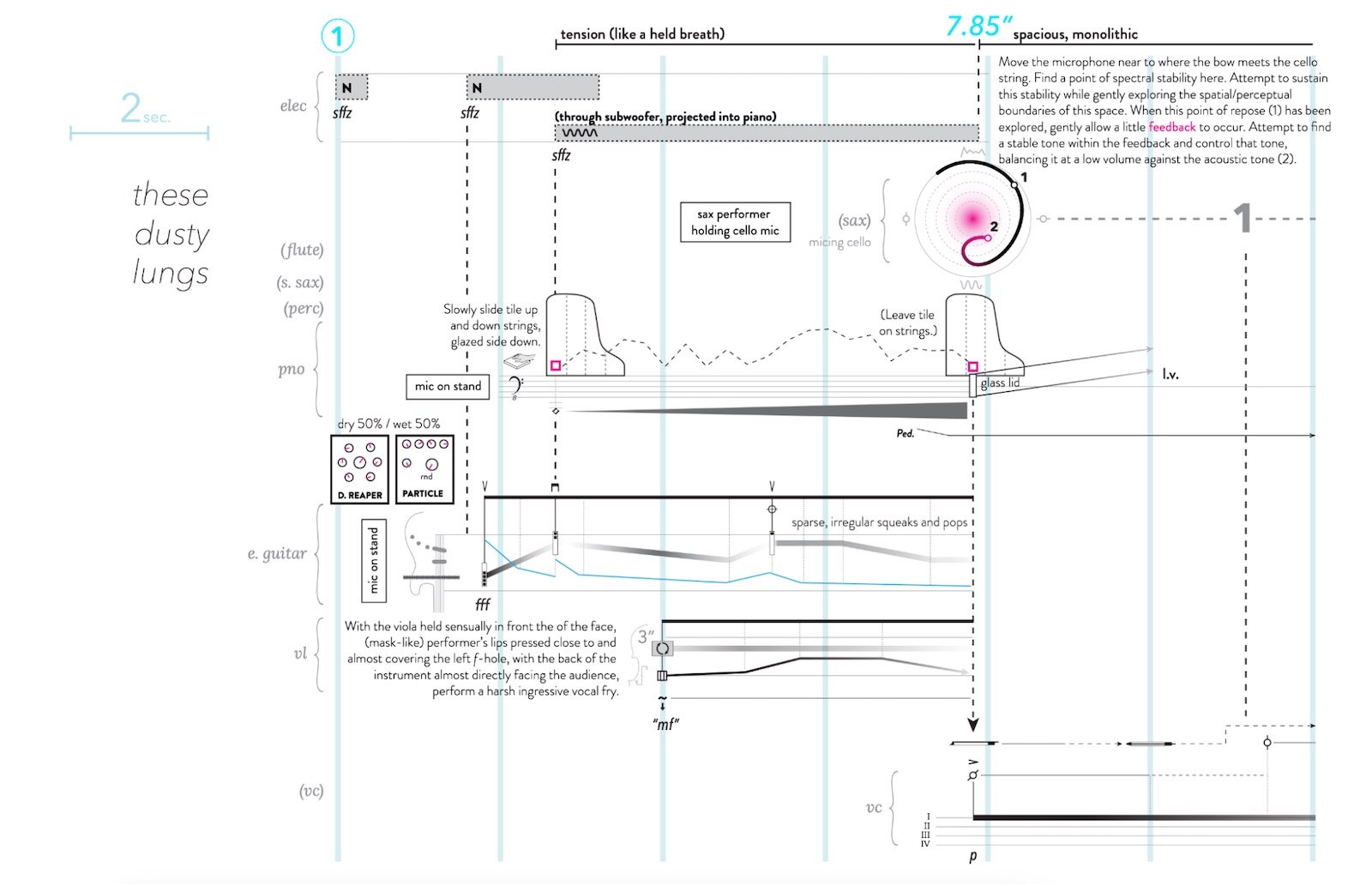

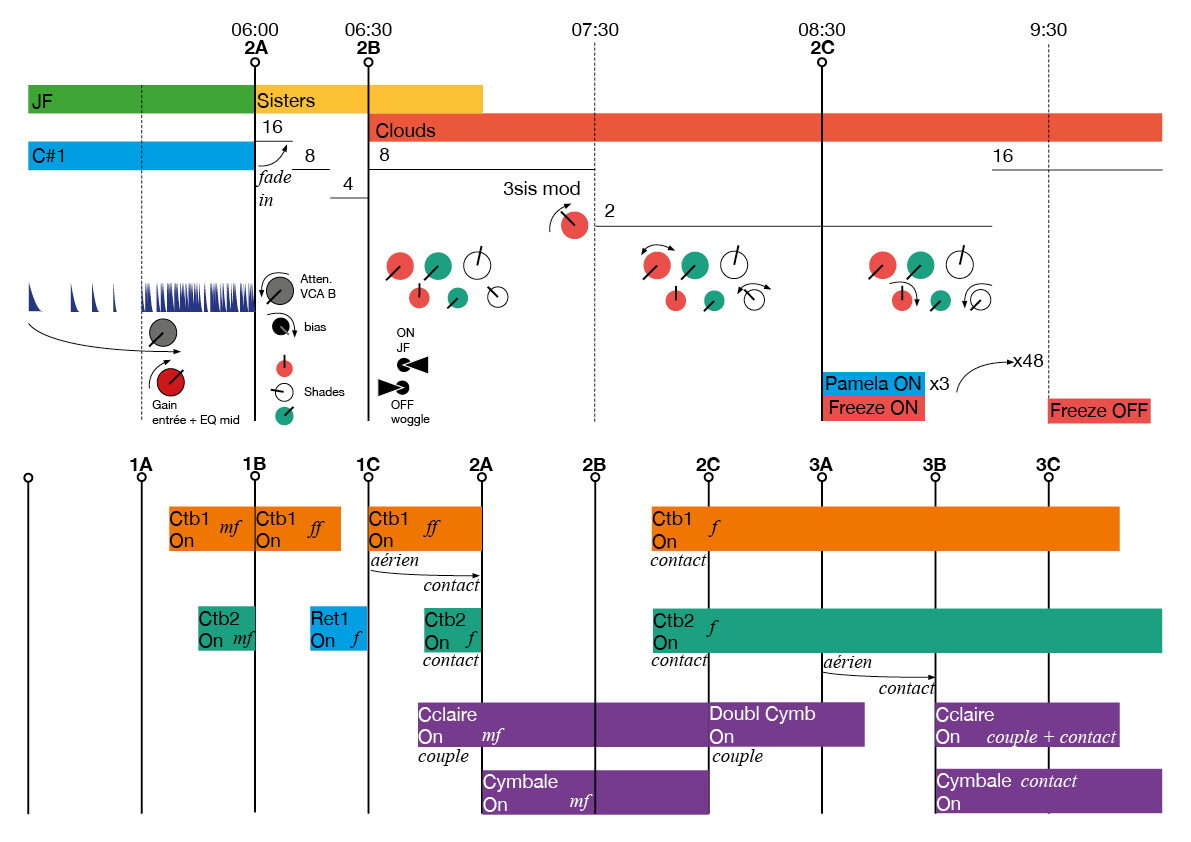

[Figure 5 - Excerpt from the score for Seuils (2018) for modular synth, two double basses and percussion by Antoine Hubineau]

In the 21st century, with the availability of vector graphics editing software like Adobe Illustrator, some composers have moved away from traditional score engraving software to experiment with combining graphical representations of instruments and control surfaces with traditional linear notation. This shift has unveiled a wealth of new opportunities for notating both acoustic and electronic instruments in a manner that is visually evocative yet maintains a familiar linear structure. These contemporary techniques bridge the gap between traditional and modern forms of notation. However, there are usually practical limits on the amount of information a performer can attend to at once, and these limitations become more pronounced when several layers of notated change and interaction are stacked on top of one another. The challenge is still to come up with a graphical representation that clearly communicates the interaction with the instrument, with the added complexity of showing this interaction over time.

Does Notation Even Matter?

Notation for modular synthesizers remains difficult, as each notation system captures some aspects of a work well and others poorly. Whether or not that matters is really a question that must be judged in relation to a particular set of goals.

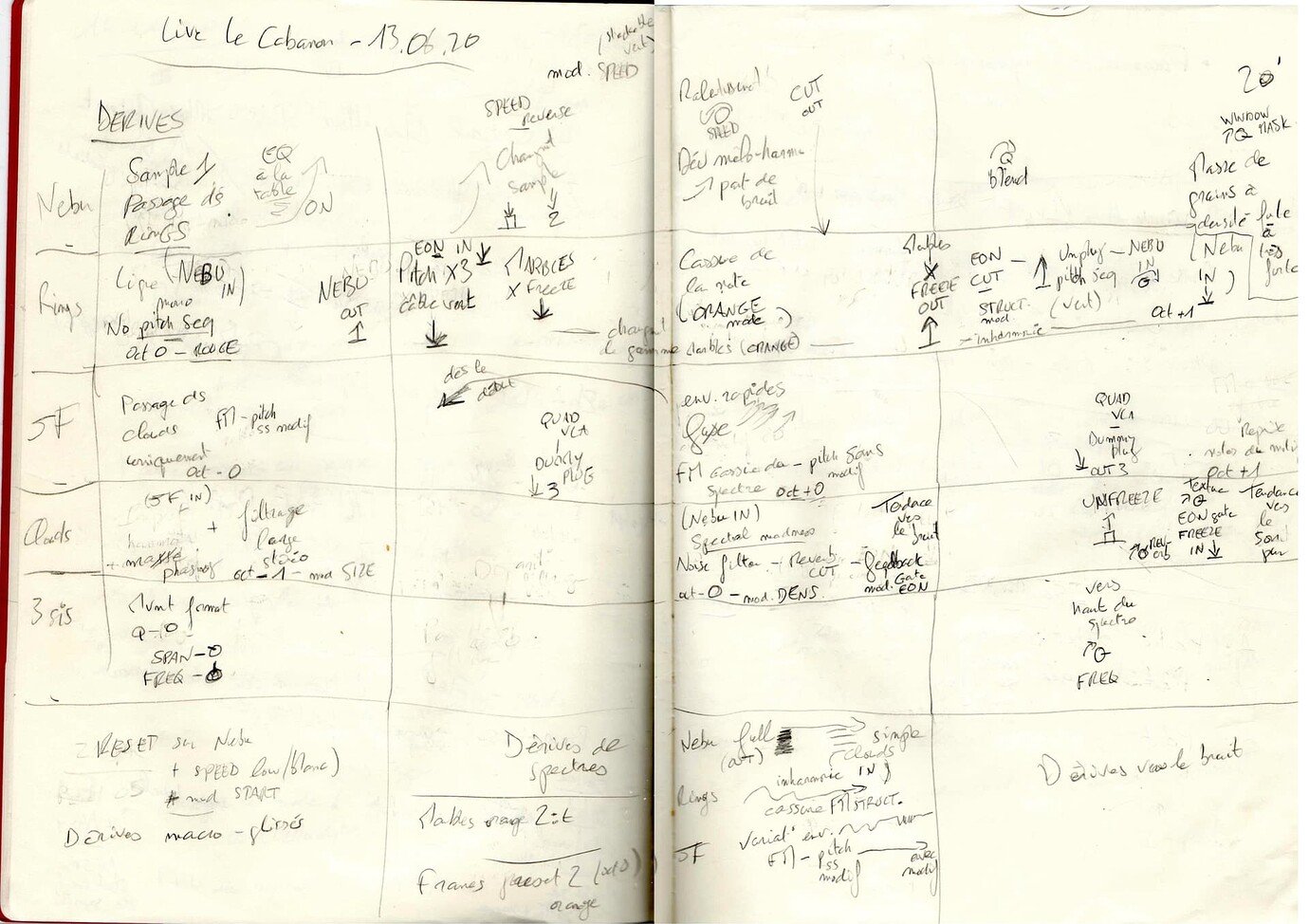

[Figure 6 - Credit Antoine Hubineau, from notes for Le Cabanon]

For artists who care about work preservation, a form of modular notation, although limited, could still be a useful tool for defining the parameters of a composition. However, since work preservation is deeply linked to the design of the chosen notation system, it becomes important to consider which aspects of a composition the notation must capture for the work to be preserved. Is it enough to recreate the patch precisely? Is it also necessary to recreate a particular performance or interaction with the patch over time? Must a work be recreated on specific modules, or is the track defined by the interaction of simple functions (oscillators, filters, mixers, etc.) that can be found on many different instruments? If digital modules are used, how can presets be represented, and would someone else’s attempt to recreate a preset be sufficient to recreate the work?

For many artists, work preservation and notation are of little interest, and the ephemerality of voltage in the modular system can be part of the appeal. A modular synth can be thought of less like the 19th-century keyboard for which common-practice notation was once defined and more like an unruly object, forcing the performer to contend with uncertainty and explore with the ears rather than impose predefined patterns.

But even if work preservation is not the goal, notation can still be a useful tool for composition or study. Many artists find that making rough diagrams and sketches of a work can help define larger structures within which spontaneity can still be part of the process. As with Ligeti’s Artikulation, creating a notation after the fact, even for an improvised work, can be a useful way to learn about a work’s structure.

Like the ever-evolving landscape of synthesizers, the world of notation for synthesizers is relatively young, with only a few decades of exploration and experimentation. This nascent stage presents a unique opportunity to seek inspiration from the vast realm of graphic design that surrounds us. From the intricate details of nautical charts to the innovative layouts of board games, there is a wealth of visual language waiting to be adapted for synthesizer notation. By embracing this diverse and rich graphic design culture, we can challenge traditional visual metaphors for sound, which in turn frees our imagination to think about sound beyond the easily definable parameters of pitch, rhythm, melody, and harmony and to explore the full range of form and timbre available in a modular instrument.