The RCA MkII, also known as Victor, was an early synthesizer built by the RCA corporation in 1957. To this day it is housed in the Columbia Computer Music Center, known at the time as the Columbia/Princeton Electronic Music Center (CPEMC). Although it has fallen into disuse, the RCA MkII represented a huge leap forward in the realm of synthesis. It is seven feet tall and 20 feet wide, and weighs three tons. It could be mistaken for one of the computers of the day, but it was specially made to generate music. Composers wrote, or rather punched, instructions for it onto rolls of paper tape, which fed through a reader that turned the binary information into musical compositions.

The MkII predated the widespread use of transistors and contained 1,700 vacuum tubes, which were notoriously finicky. And, in some ways, it laid the groundwork for the voltage-controlled synthesizer revolution that occurred in the 1960s with Robert Moog, Don Buchla, and others. The MkII was loved dearly by a few select composers and used to great effect—although it confounded many more.

But why was the RCA MkII created in the first place? And how was it used? Let's dive in.

The Dream of a Music-Making Machine

Inventors Harry F. Olson and Herbert Belar originally sought to develop a machine that composed songs. By automating the creation of popular songs, they thought they could corner the music market with a slew of top hits. They first employed statistical analysis of popular melodies by American composer Stephen Foster in order to synthesize new songs based on the resulting parameters.

One can draw a clear lineage between their efforts, which used another artist’s work to program what they thought would be a hit song, and the AI-driven music that has emerged over the past few years. It should be noted that Stephen Foster was a composer made famous by such songs as "Camptown Races," "Oh! Susanna," and "Old Black Joe." (His songs were regularly performed at racist minstrel shows, with performers often in blackface singing and dancing to them.)

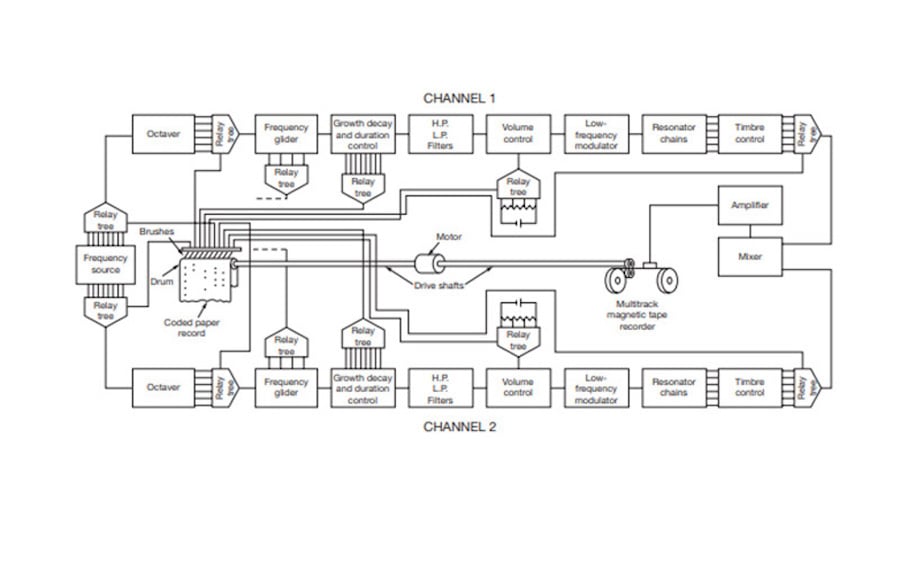

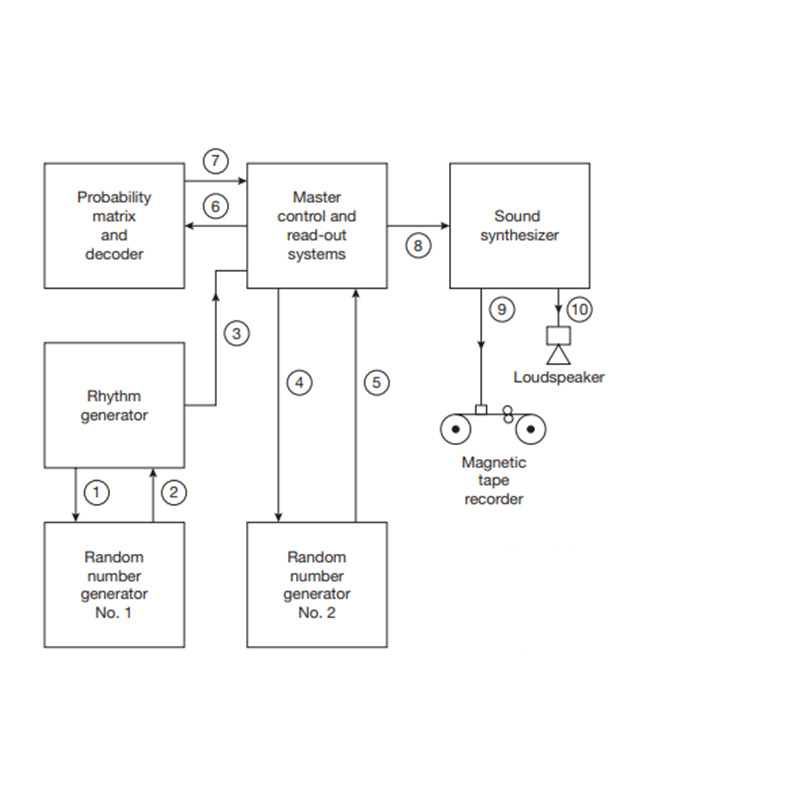

Diagram of the Electronic Music Composing Machine; image via Thom Holmes's Electronic and Experimental Music: Technology, Music, and Culture.


The earlier iteration of a music composing computer, called the Olson-Belar "Electronic Music Composing Machine," differed from other computers at the time as it was solely made with the intention of composing music. Using the statistical analysis of Foster’s songs, it created compositions based on random operations that selected notes based on patterns of two or three notes the engineers programmed into the machine. They used the frequency of a given note, how notes were repeated, and the rhythms in the songs in order to generate new songs in a similar style. The songs were all transposed to D major to reduce the chance operations' complexity.

The range of possible songs was tied to the machine's hardware: its 16 mechanical relays and a rotary stepper switch with 50 positions, which made the selection for each of the two- or three-note sequences generated by the two random number generators. The tones were generated by vibrating tuning forks connected to pickups so that they could be recorded or heard over loudspeakers.
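The statistical idea described above, choosing each new note from the notes that followed the same two-note pattern in the source songs, can be sketched in a few lines of modern code. This is only an illustration under stated assumptions: the original machine did this with relays and a stepper switch rather than software, and `TOY_MELODY` is a made-up sequence in D major, not data taken from Foster's songs. The function names are hypothetical as well.

```python
import random
from collections import defaultdict


def build_transitions(melody, order=2):
    """Map each run of `order` notes to the list of notes that followed it."""
    table = defaultdict(list)
    for i in range(len(melody) - order):
        context = tuple(melody[i:i + order])
        table[context].append(melody[i + order])
    return table


def generate(table, seed, length, rng):
    """Extend `seed` by repeatedly drawing a follower of the most recent notes."""
    order = len(seed)
    out = list(seed)
    while len(out) < length:
        followers = table.get(tuple(out[-order:]))
        if not followers:
            break  # this pattern never continued in the source material
        # Repeated followers are drawn more often, mirroring their frequency.
        out.append(rng.choice(followers))
    return out


# Hypothetical training melody in D major (an assumption, not Foster's music).
TOY_MELODY = ["D", "E", "F#", "D", "E", "F#", "A", "G",
              "F#", "E", "D", "E", "F#", "G", "A", "D"]

table = build_transitions(TOY_MELODY)
new_melody = generate(table, seed=("D", "E"), length=12, rng=random.Random(0))
print(" ".join(new_melody))
```

Every three-note window in the output also occurs somewhere in the training melody, which is why a system like this can only recombine the material it was given.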

Generating new music from random operations on an early computer sounds groundbreaking, and it was, but the machine was extremely limited in its output: it could only make songs based on the 11 songs that had been analyzed and programmed into it. It turned out to be a complicated proof of concept for later iterations of Olson and Belar’s work. They took this groundwork and, in 1955, unveiled the first RCA Music Synthesizer, also known as the Mark I.

The RCA Mark I

In an interview in 1975, Olson was quoted as saying "The idea was to develop a musical instrument with no limitations whatsoever." As you can imagine, such a thing was beyond the capabilities of the technology of the day, and the resulting machine had its own particular limitations, ones that some composers harnessed in their compositions.

The idea of an instrument without limitations grew out of Olson’s principle of dynamical analogies. In his book Dynamical Analogies, written in 1943, well before the RCA synthesizers, Olson says, "By means of analogies, the knowledge in electrical circuits may be applied to the solution of problems in mechanical and acoustical systems. In this procedure the mechanical or acoustical vibrating system is converted in the analogous electronic circuits." The RCA MkI offered the first concrete application of these concepts: its circuits solved these sets of acoustic and mechanical problems.

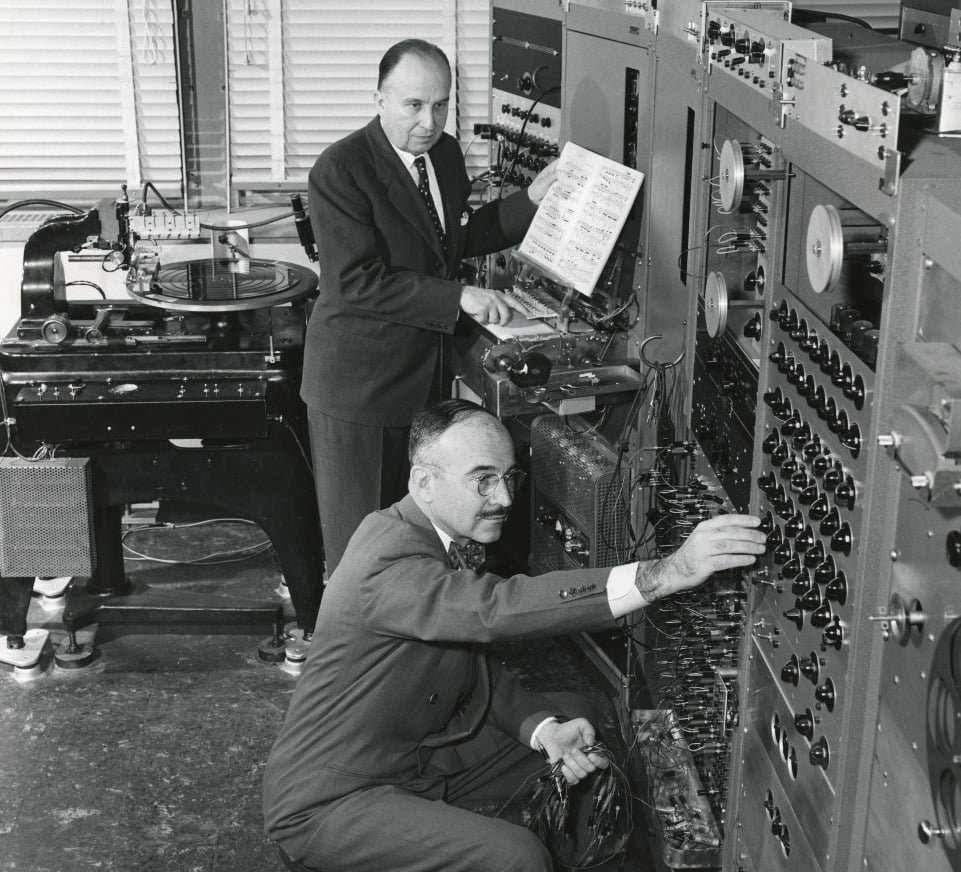

[Above: Harry Olson and Herbert Belar at the RCA MkI; image via Bob Moog Foundation.]

Even before the MkI was completed, its prototypes opened up possibilities for studying both sound and our perception of it. In its final forms, it raised questions about new paradigms of composition and the link between human and machine. The foundations had been laid many years before by Olson’s mentor, Carl Seashore, in his experiments on human perception of sound and music. By his estimation, sound could broadly be described as having three components: pitch, amplitude, and timbre. Although some add a fourth component, duration, this aspect of a sound could fall under the purview of amplitude over time.

Using vacuum tube resonators, Seashore measured people's so-called "musical intelligence" as it related to the psychology of hearing, music, and speech. Some of his experimental circuit designs made their way, functionally, into the RCA synthesizers that came decades later. His experiments, like many scientific experiments of the time, were also framed along lines of race and the perceived superiority of the white race in musical appreciation and talent. Even so, this significantly biased (and downright racist) research laid the groundwork for future technology and, ultimately, a studio that welcomed a wide range of international composers.

When completed by the RCA corporation in 1955, the MkI was said to be "ranked second only to the Typhoon missile simulator as the largest assembly to emerge from the David Sarnoff Research Center." The Typhoon was built under a contract with the US Navy, and produced simulations of missile flight paths. While the MkI was of course not used for military purposes, the significant takeaway here is that it rivaled the scope of projects built for Cold War military applications.

The MkI was not based on random operations, but instead allowed composers greater freedom to specify parameters such as pitch, envelope, amplitude, timbre, and more. Composing was a matter of punching holes in specialized paper, which was then fed into a reader that transmitted the data to the machine. The main synthesis relied not on electronic oscillators but on a bank of tuning forks, electronically vibrated to generate pure sine waves at a pitch specified by the composer, a direct inheritance from the Olson-Belar Electronic Music Composing Machine.

One focus for Olson and Belar was to eliminate the inherent flaws and noise introduced by imperfect human performers. The problems of human accuracy in terms of pitch and technique were replaced by punch card precision. When the machine was unveiled in 1955, journalists both praised its groundbreaking technology and speculated (rightfully so) on the future of man-machine relationships. While RCA claimed it could synthesize human speech accurately, it fell short of this lofty goal.

The RCA MkII

In 1957, the MkI was replaced by the MkII, which vastly improved on the synthesis capabilities of the earlier iterations. The MkI found a new home in the Smithsonian Museum, although it is no longer on display. Olson and Belar sought to move the MkII into a university setting, and ultimately settled on Columbia/Princeton. By the time the MkII was completed, RCA had realized that it was not a machine for pop hits, but rather an experimental instrument for new kinds of compositions.

The Columbia/Princeton Electronic Music Center was founded by composers Vladimir Ussachevsky, Milton Babbitt, and Otto Luening, with a bit of help from a Rockefeller Foundation grant of $175,000 (roughly 1.8 million dollars today). In the grant application, Ussachevsky wrote that an electronic music studio could help both cultural progress domestically and the international exchange of ideas. They thought that electronic musical composition could help bridge the divide between Western and Eastern ideals. They also believed it could uphold the technological supremacy of the United States on the world stage.

The CPEMC founders saw this synthesizer as, at once, a way to reshape the ontology of music and a way to reinforce the long tradition of musical forms, all through the means of electronic composition. This was the first university-based electronic music studio in North America. The shift from private studios to the halls of academia soon caught on, with many other educational institutions creating their own electronic music departments.

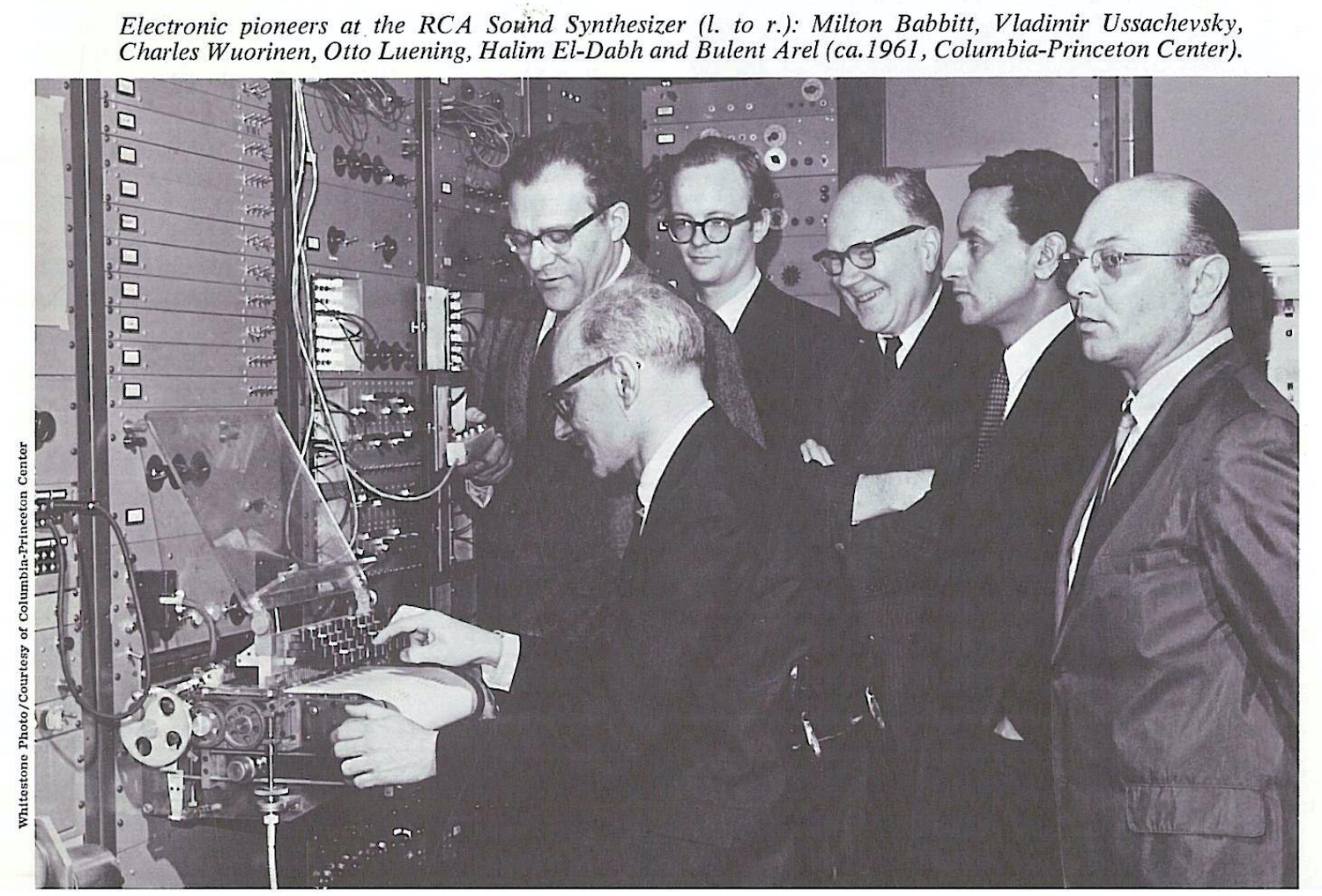

[Above: Milton Babbitt, Vladimir Ussachevsky, Charles Wuorinen, Otto Luening, Halim El-Dabh, and Bulent Arel at the RCA MkII; image via Summer 1970 issue of BMI's The Many Worlds of Music.]

The biggest addition to the MkII was its electronic oscillators, which produced not only sine waves but also sawtooth and triangle waveforms, along with a noise generator for even greater harmonic complexity. The ten-octave tuning range meant the oscillators could produce audio across roughly the range of human hearing. A frequency shifter took a basic sine tone and multiplied and divided its frequency in order to generate the even and odd harmonics found in a sawtooth wave.
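To make the relationship between sine tones and a sawtooth wave concrete, here is a small sketch of the textbook additive recipe: a sawtooth contains every integer multiple of its fundamental (even and odd harmonics alike), with harmonic k at amplitude 1/k. This is a modern illustration of the math, not a model of the MkII's actual circuitry; the function names and the 20 Hz starting pitch are assumptions chosen for the example.

```python
import math


def sawtooth_partials(fundamental_hz, count):
    """Return (frequency, amplitude) pairs: harmonic k sits at k*f with amplitude 1/k."""
    return [(k * fundamental_hz, 1.0 / k) for k in range(1, count + 1)]


def sawtooth_sample(fundamental_hz, t, count=20):
    """Approximate a sawtooth at time t (seconds) by summing `count` sine partials."""
    total = 0.0
    for k in range(1, count + 1):
        sign = 1.0 if k % 2 else -1.0  # alternating signs of the Fourier series
        total += sign * math.sin(2 * math.pi * k * fundamental_hz * t) / k
    return (2 / math.pi) * total


def top_of_range(lowest_hz, octaves=10):
    """Each octave doubles the frequency, so ten octaves span a factor of 2**10."""
    return lowest_hz * 2 ** octaves


print(sawtooth_partials(110.0, 4))
print(top_of_range(20.0))  # 20 Hz is an assumed starting point, not a documented spec
```

Starting from an assumed 20 Hz, ten doublings reach 20,480 Hz, which is why a ten-octave range lines up with roughly the full span of human hearing.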

High- and lowpass filters provided tonal shaping, and a timbre modifier provided basic control over the overtone content of a sound. Another large addition was portamento, a smooth slide between notes that offered a new way of articulating melodies. The envelopes controlled the "growth" (or attack, as we now know it), duration, and decay of a sound, with growth times between 1 ms and 2 seconds, and decay times between 4 ms and 19 seconds. Resonators and timbre controls at the end of the signal chain provided dynamic control over the final sound before it was monitored or recorded.

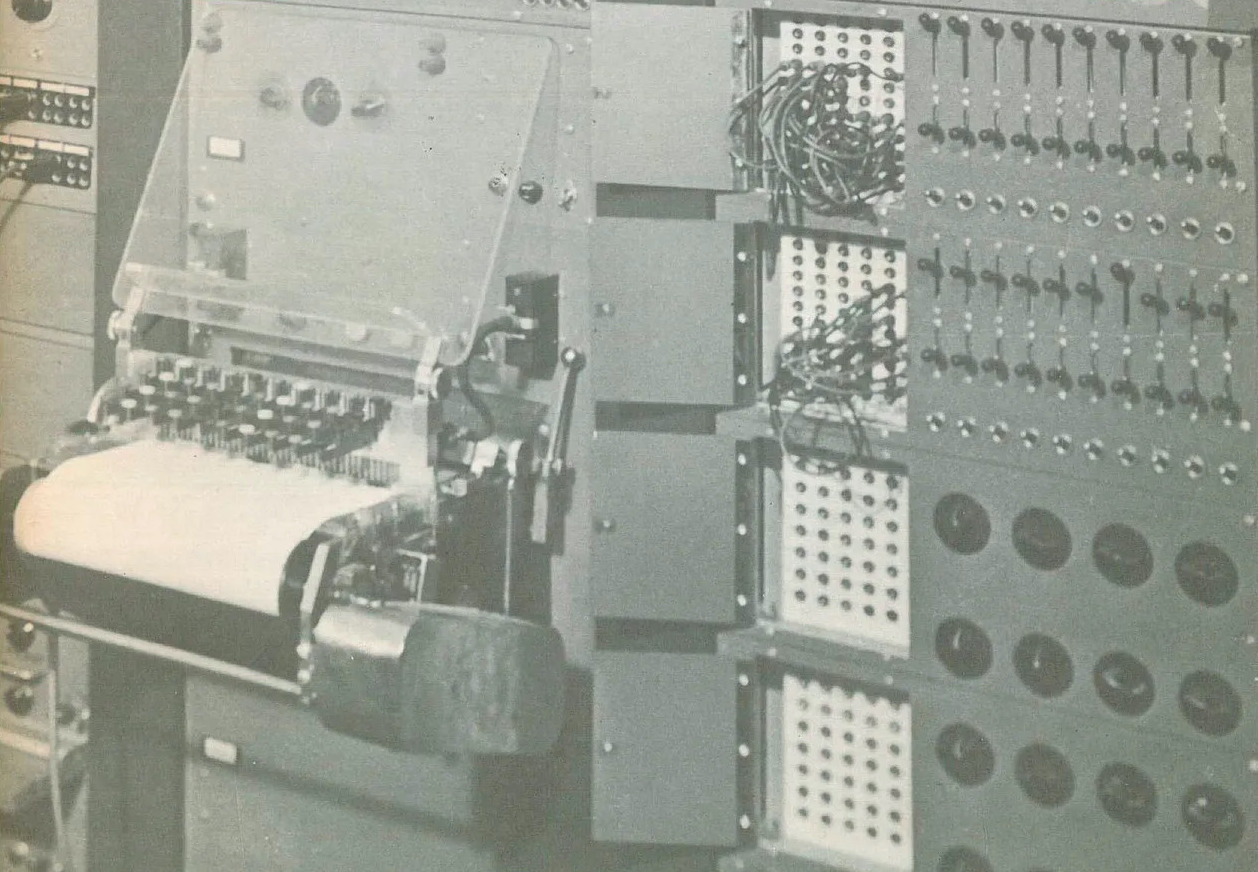

Composers interacted with the machine through a punched-paper tape input, controlled by a Teletype-like keyboard that cut holes in a 15”/38cm-wide roll of paper. The paper roll contained 36 columns, 18 for each of the two channels. The composer controlled the two channels with binary data, specifying frequency, octave, volume, envelope, and timbre. This was repeated over and over, one row at a time—a fairly time-consuming process. The result was much like that of a player piano, a row of holes that specified a composition.
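As a rough picture of what one row of the roll carried, the sketch below packs the five parameters for each channel into 18 binary columns and joins two channels into a 36-column row. The text above gives only the totals (36 columns, 18 per channel); the split of those 18 columns among the parameters in `FIELD_WIDTHS` is an assumption made for illustration, as are the function names and the use of `o` for a punched hole.

```python
# Assumed column widths per parameter; only the total of 18 comes from the text.
FIELD_WIDTHS = {"frequency": 4, "octave": 3, "volume": 4, "envelope": 3, "timbre": 4}


def encode_channel(settings):
    """Turn one channel's parameter values into a list of 18 bits (1 = punched hole)."""
    bits = []
    for name, width in FIELD_WIDTHS.items():
        value = settings[name]
        if not 0 <= value < 2 ** width:
            raise ValueError(f"{name}={value} does not fit in {width} columns")
        bits.extend(int(b) for b in format(value, f"0{width}b"))
    return bits


def encode_row(channel_1, channel_2):
    """One row of the roll: channel 1's 18 columns followed by channel 2's."""
    return encode_channel(channel_1) + encode_channel(channel_2)


def render(row):
    """Draw a row as text, with 'o' for a hole and '.' for blank paper."""
    return "".join("o" if bit else "." for bit in row)


note = {"frequency": 5, "octave": 2, "volume": 15, "envelope": 0, "timbre": 9}
rest = {name: 0 for name in FIELD_WIDTHS}

row = encode_row(note, rest)
print(render(row))
```

A full composition would be a long stack of such rows, each punched by hand, which gives a sense of why the process was so time-consuming.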

[Above: the RCA MkII's paper punch terminal; image from Summer 1970 issue of BMI's The Many Worlds of Music.]

The punched-out compositions were then fed into the machine through a specialized reader with a metal brush for every possible position of a punched hole in the paper. When a brush passed over a hole, it made contact with the relay tree, completing a circuit and sending a signal to hardwired switches that then controlled the synthesized sound. The machine could run the paper at up to 10 cm/second at its highest speed setting, yielding a tempo of 240 beats per minute. However, it could only produce up to a four-minute composition at its slowest setting, so composers had to split longer works into smaller sequences spread over several rolls.

Many composers did not rely on a single piece of punched paper for their compositions, but used them to create several layered sections of pieces that could then be modified using traditional tape music techniques and mixing methods. A microphone in the studio allowed composers to eschew the internal oscillators for sounds from the physical world. In the early 1960s, the punched paper tape method was updated with an optical reader that registered ink dots instead of the holes in the paper.

Originally, the RCA MkI and MkII did not contain a tape recording mechanism, but rather a disc-cutting lathe that recorded the output of the machine to disc rather than to tape. Milton Babbitt eventually had RCA replace this cumbersome recording method with much more versatile multitrack tape recording. Each channel of this tape recorder could record seven individual sequences, and through overdubbing and careful synchronization, users could get up to 49 sequences of notes. This tape, loaded up with 49 sequences, could then be repeated with seven more channels, making up to 343 tracks of tone sequences from the MkII.

Milton Babbitt (pictured above) was one of the best-known composers who used the RCA MkII. Babbitt had already been experimenting extensively with serial compositions, and saw the RCA MkII as a perfect way to further explore serial compositional techniques. Serialism, or serial music, is a form of music that often places emphasis not on a specific key but on the rhythms and timbres that arise from a set of notes, with no emphasis placed on any single note. Serial music is most often made using the 12-tone technique, wherein all 12 notes of the chromatic scale are used equally and, therefore, not in one specific musical key. Some forms of serialism extend this technique not only to the pitches in the music but also to the rhythm, timbre, and dynamics of a piece of music. Serialism is often associated with atonal and post-tonal music. The RCA was limited to the 12-tone convention, which worked well for serialists, but not as well for those looking to compose music outside those bounds (like many electronic composers of the later 1960s and onward).
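For readers unfamiliar with the 12-tone technique, the following sketch shows the standard row operations (transposition, inversion, and retrograde) on pitch classes numbered 0 to 11. It illustrates the general technique only: `ROW` is an arbitrary example and is not taken from any of Babbitt's pieces, and the function names are hypothetical.

```python
def is_tone_row(row):
    """A tone row uses each of the 12 pitch classes exactly once."""
    return sorted(row) == list(range(12))


def transpose(row, semitones):
    """Shift every pitch class by the same interval, wrapping at the octave."""
    return [(p + semitones) % 12 for p in row]


def invert(row):
    """Mirror each interval around the row's first pitch class."""
    first = row[0]
    return [(2 * first - p) % 12 for p in row]


def retrograde(row):
    """Play the row backwards."""
    return row[::-1]


# Arbitrary illustrative row (0 = C, 1 = C#, ... 11 = B); an assumption for the example.
ROW = [0, 2, 7, 5, 11, 9, 4, 1, 3, 10, 8, 6]

for name, form in [("prime", ROW),
                   ("inversion", invert(ROW)),
                   ("retrograde", retrograde(ROW)),
                   ("retrograde inversion", retrograde(invert(ROW)))]:
    print(f"{name:>21}: {form}")
```

Each transformed form is still a complete 12-note row, so no pitch class is favored over another, which is the property that keeps the music out of any single key.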

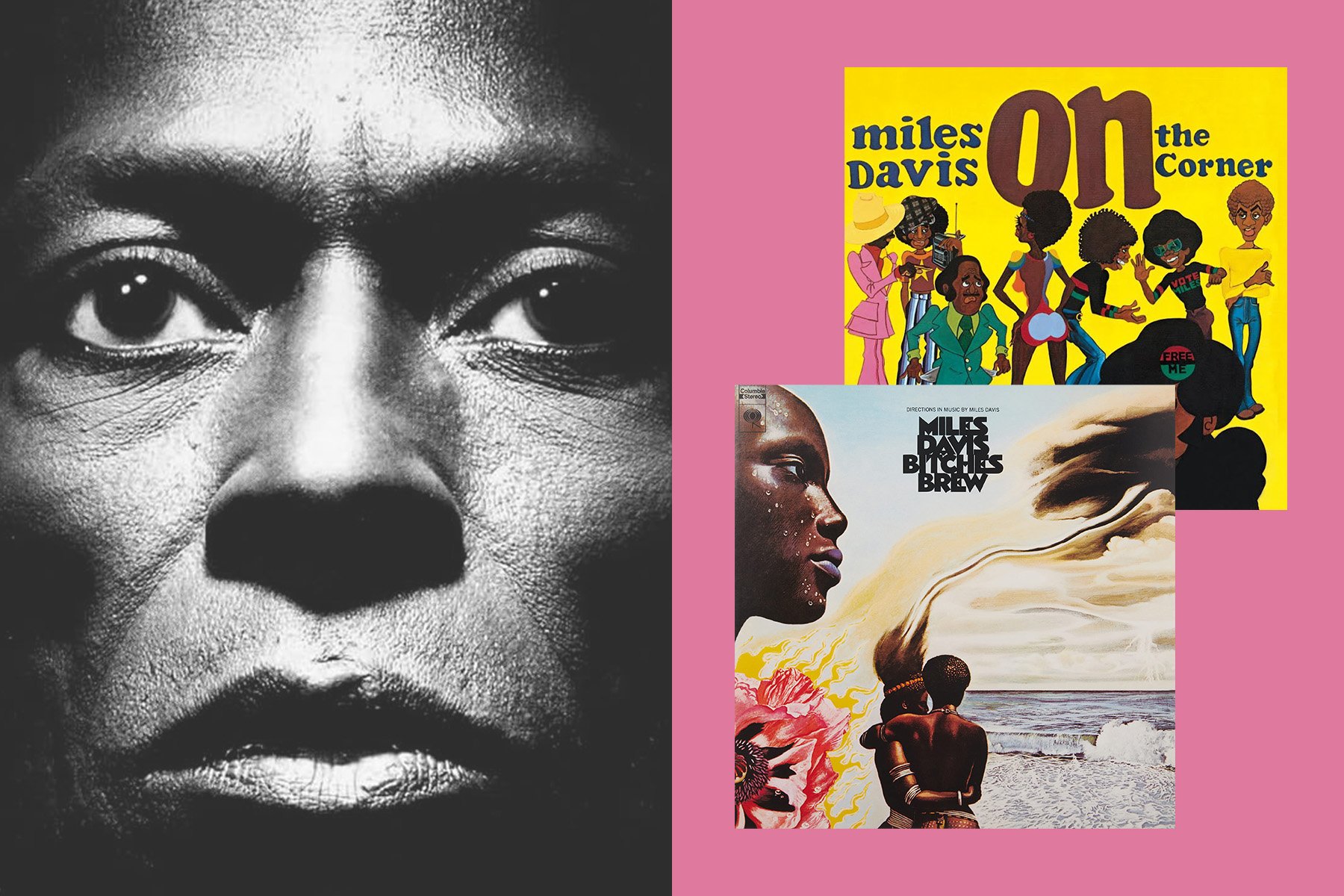

The punch card method of composing allowed Babbitt to serialize all aspects of his compositions, precisely specifying the pitch, timbre, pitch relationships, amplitude, dynamics, and rhythm of a piece. Between 1961 and 1964, Babbitt wrote and recorded Ensembles for Synthesizer, Philomel, and Composition for Synthesizer. Philomel (video embedded below) was written for soprano Bethany Beardslee, recorded soprano, and synthesized accompaniment. Wendy Carlos, the seminal electronic music composer known most for her work on Switched-On Bach, was a graduate student at Columbia during this time and helped run the tape machine for the premiere of Philomel in 1964.

Babbitt used only the audio-generating aspects of the MkII, preferring not to process the audio further after recording, not even by adding reverb. This contrasts starkly with many other composers of the day, who chose to record sounds and then process them through tape splicing, adding reverb or delay, or adjusting tape speed. The abstracted serialized compositions fully embrace the technology of the MkII, relying solely on the machine as an instrument to realize Babbitt’s vision.

The MkII was notoriously unreliable—its paper reader and vacuum tubes were delicate and difficult to maintain. Babbitt said:

"It was not a comfortable device […] You never knew when you walked in that studio whether you were going to get a minute of music, no music, two seconds of music. You just didn’t know what you were going to get. You never knew what was going to go wrong. You never knew what was going to blow."

In 1968–69, composer Charles Wuorinen wrote the piece Time’s Encomium (video embedded above), the first piece of completely electronic music to win a Pulitzer Prize. Wuorinen, much like Babbitt, wanted to stress the pure tones of the machine for the first 15 minutes of the 30-minute piece. The second half involved taking the first half and reprocessing it through the tape and sound processing capabilities of the studio. He wanted tight control over all aspects of the piece: the note-to-note relationships and the relationship between pitch and time.

The Fate of the RCA MkII

Babbitt was one of the last composers to favor the RCA MkII, using it in his work as late as 1972. Alice Shields, an assistant to Ussachevsky in 1963, said of the RCA:

"No one, to my knowledge, composed a piece on the RCA […] but Milton Babbitt and Charles Wuorinen. I had little interest in it, as the timbres were very limited, and the key-punch mechanism was so inferior to music notation for live instruments whose timbres were not at all limited. My interest was, and largely still is, in 'concrete' or sampled sounds as sources for electronic manipulation and transformation."

[Above: the RCA MkII in the present day; images courtesy of Naomi Mitchell.]

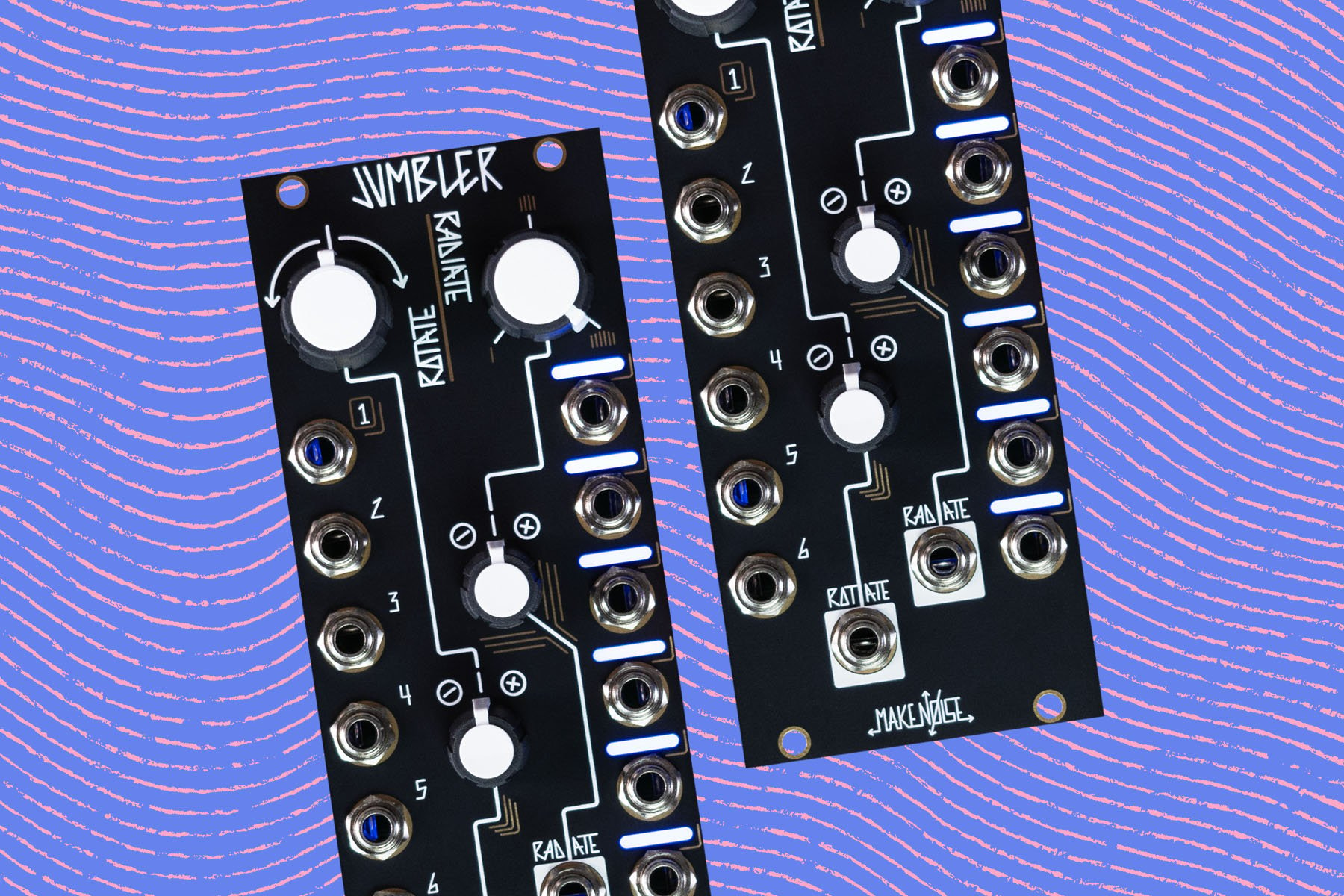

By the mid-1960s, modular synthesizers made with newly available transistors began to take off in popularity. They were cheaper, more reliable, easier to program, more flexible in sound generation, far more immediate, and to a certain extent, portable. By 1965, the MkII gained a companion in the form of a Buchla 100 Series modular system, which extended the compositional possibilities available in the CPEMC. The Buchla 100 Series system was recently restored by a team of Columbia faculty and students, and is still in use at the Columbia Computer Music Center, although the MkII has fallen into disuse, its room now an office.

While groundbreaking at the time of its construction, the RCA MkII was destined to fall out of use in roughly a decade. A hulking mass of metal and vacuum tubes hidden away in a university setting, it was available only to a select few composers. Among those who did have access, only a few used it to its full potential, if anyone truly did at all.

It still exists, although it has now fallen into disuse, a curiosity at the back of an office. Though restoration would be a complex task, it would certainly be possible. For now, we can look at it as a stepping stone toward a greater understanding of synthesis, a relic of the early age of computing and electronic music.